QoS and QoS policies

This chapter provides an overview of the 7705 SAR Quality of Service (QoS) and information about QoS policy management.

QoS overview

Overview

In order to provide what network engineers call Quality of Service (QoS), the flow of data in the form of packets must be predetermined and resources must be somehow assured for that predetermined flow. Simple routing does not provide a predetermined path for the traffic, and priorities that are described by Class of Service (CoS) coding simply increase the odds of successful transit for one packet over another. There is still no guarantee of service quality. The guarantee of service quality is what distinguishes QoS from CoS. CoS is an element of overall QoS.

By using the traffic management features of the 7705 SAR, network engineers can achieve a QoS for their customers. Multiprotocol label switching (MPLS) provides a predetermined path, while policing, shaping, scheduling, and marking features ensure that traffic flows in a predetermined and predictable manner.

There is a need to distinguish between high-priority (that is, mission-critical traffic like signaling) and best-effort traffic priority levels when managing traffic flow. Within these priority levels, it is important to have a second level of prioritization, that is, between a certain volume of traffic that is contracted/needed to be transported, and the amount of traffic that is transported if the system resources allow. Throughout this guide, contracted traffic is referred to as in-profile traffic. Traffic that exceeds the user-configured traffic limits is either serviced using a lower priority or discarded in an appropriate manner to ensure that an overall quality of service is achieved.

The 7705 SAR must be properly configured to provide QoS. To ensure end-to-end QoS, each and every intermediate node together with the egress node must be coherently configured. Proper QoS configuration requires careful end-to-end planning, allocation of appropriate resources and coherent configuration among all the nodes along the path of a given service. Once properly configured, each service provided by the 7705 SAR will be contained within QoS boundaries associated with that service and the general QoS parameters assigned to network links.

The 7705 SAR is designed with QoS mechanisms at both egress and ingress to support different customers and different services per physical interface or card, concurrently and harmoniously (see Egress and ingress traffic direction for a definition of egress and ingress traffic). The 7705 SAR has extensive and flexible capabilities to classify, police, shape and mark traffic to make this happen.

The 7705 SAR supports multiple forwarding classes (FCs) and associated class-based queuing. Ingress traffic can be classified to multiple FCs, and the FCs can be flexibly associated with queues. This provides the ability to control the priority and drop priority of a packet while allowing the fine-tuning of bandwidth allocation to individual flows.

Each forwarding class is important only in relation to the other forwarding classes. A forwarding class allows network elements to weigh the relative importance of one packet over another. With such flexible queuing, packets belonging to a specific flow within a service can be preferentially forwarded based on the CoS of a queue. The forwarding decision is based on the forwarding class of the packet, as assigned by the ingress QoS policy defined for the service access point (SAP).

7705 SAR routers use QoS policies to control how QoS is handled at distinct points in the service delivery model within the device. QoS policies act like a template. Once a policy is created, it can be applied to many other similar services and ports. As an example, if there is a group of Node Bs connected to a 7705 SAR node, one QoS policy can be applied to all services of the same type, such as High-Speed Downlink Packet Access (HSDPA) offload services.

There are different types of QoS policies that cater to the different QoS needs at each point in the service delivery model. QoS policies are defined in a global context in the 7705 SAR and only take effect when the policy is applied to a relevant entity.

QoS policies are uniquely identified with a policy ID number or a policy ID name. Policy ID 1 and policy ID ‟default” are reserved for the default policy, which is used if no policy is explicitly applied.

The different QoS policies within the 7705 SAR can be divided into two main types.

QoS policies are used for classification, queue attributes, and marking.

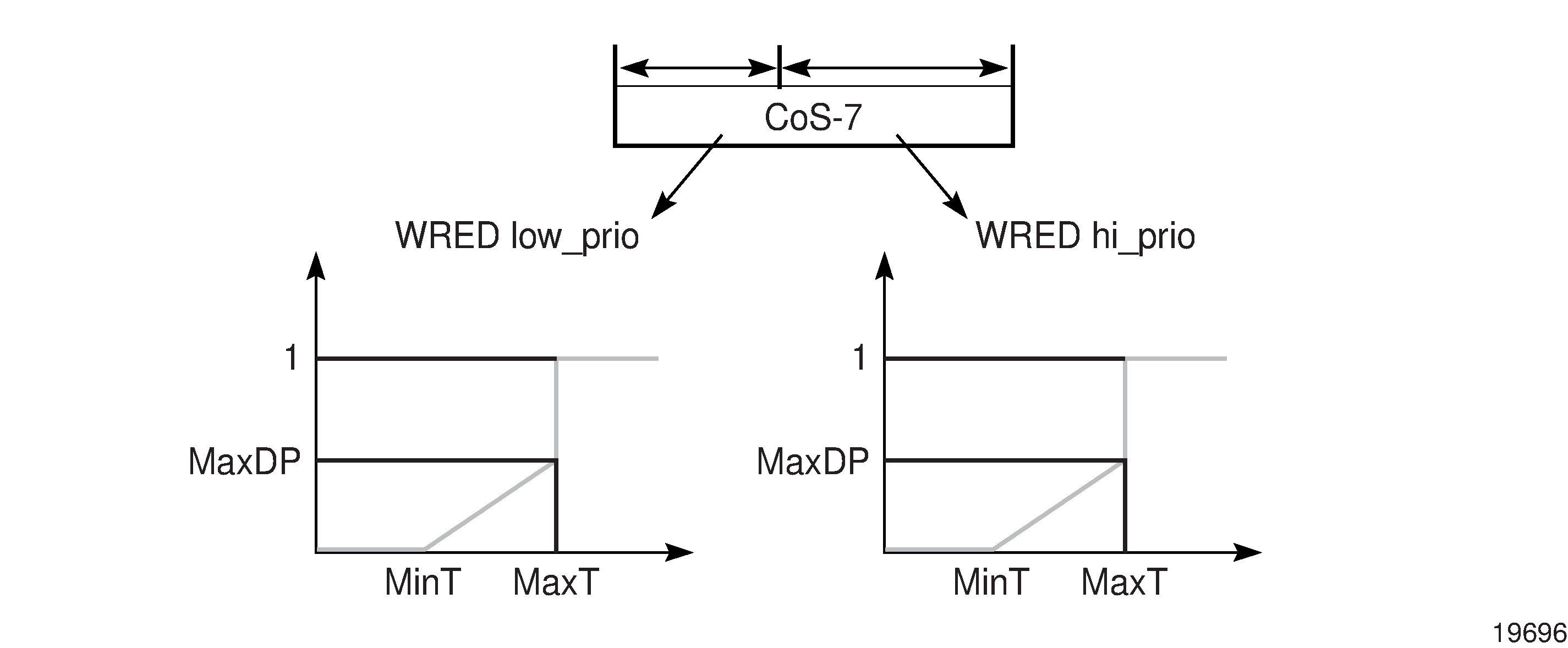

Slope policies define default buffer allocations and Random Early Discard (RED) and Weighted Random Early Discard (WRED) slope definitions.

The sections that follow provide an overview of the QoS traffic management performed on the 7705 SAR.

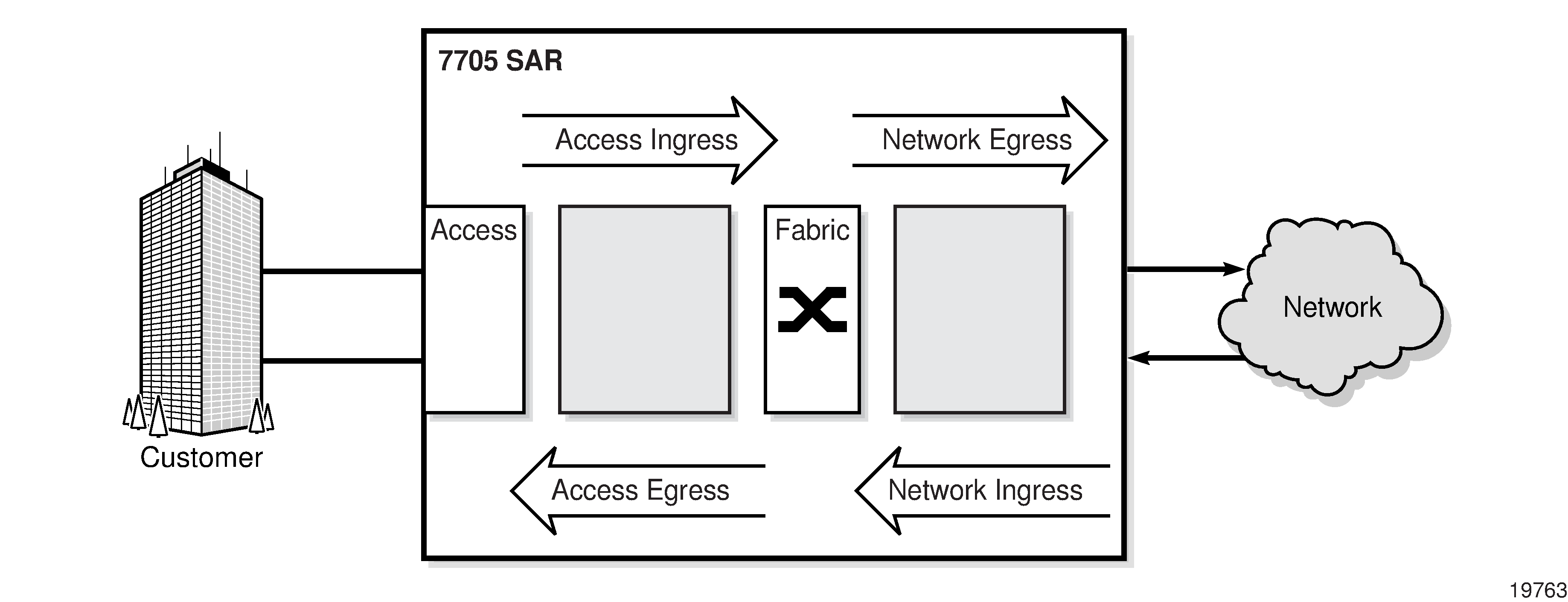

Egress and ingress traffic direction

Throughout this document, the terms ingress and egress, when describing traffic direction, are always defined relative to the fabric. For example:

ingress direction describes packets moving into the switch fabric away from a port (on an adapter card)

egress direction describes packets moving from the switch fabric and into a port (on an adapter card)

When combined with the terms access and network, which are port and interface modes, the four traffic directions relative to the fabric are (see Egress and ingress traffic direction):

access ingress direction describes packets coming in from customer equipment and switched toward the switch fabric

network egress direction describes packets switched from the switch fabric into the network

network ingress direction describes packets switched in from the network and moving toward the switch fabric

access egress direction describes packets switched from the switch fabric toward customer equipment

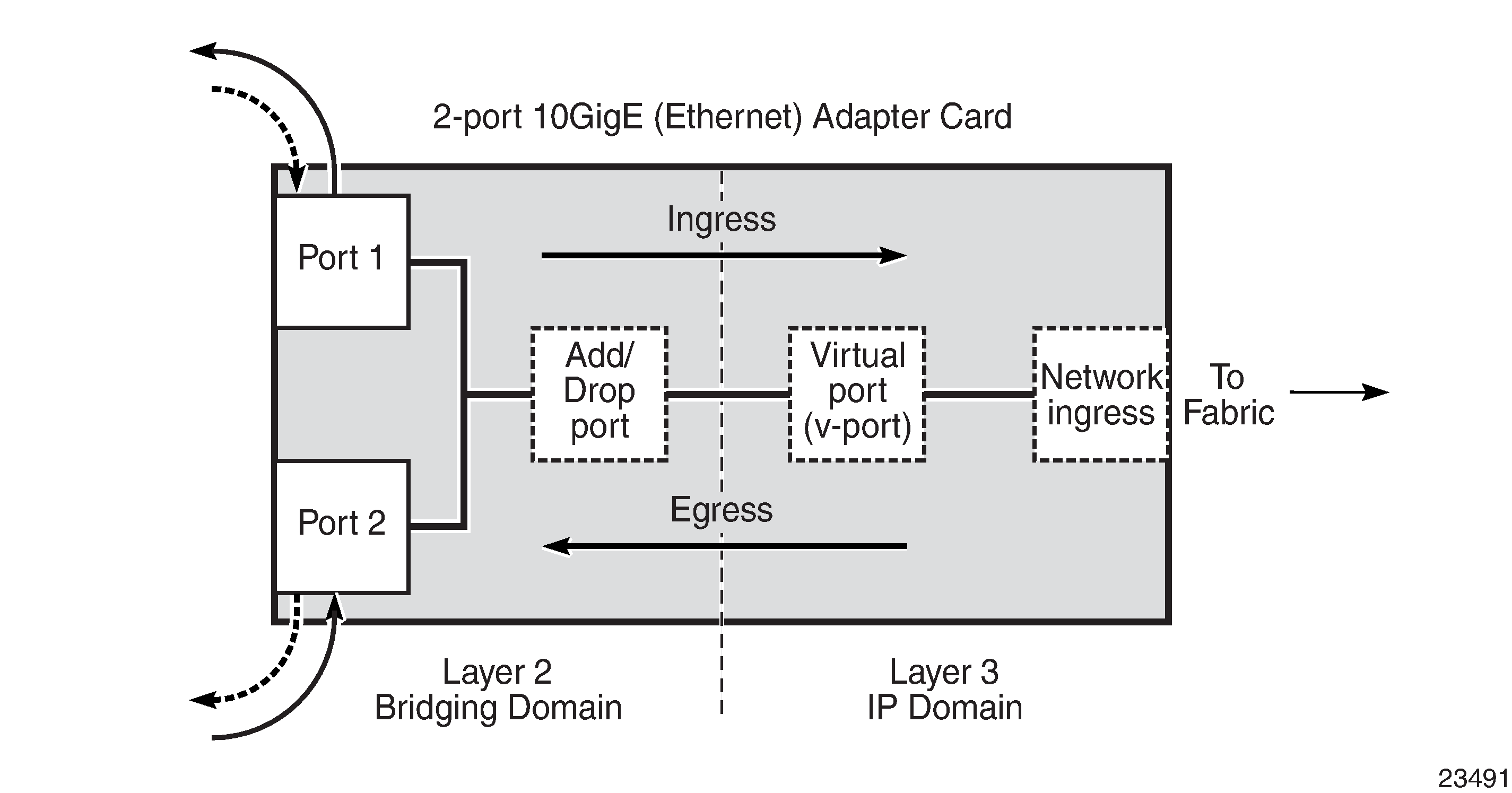

Ring traffic

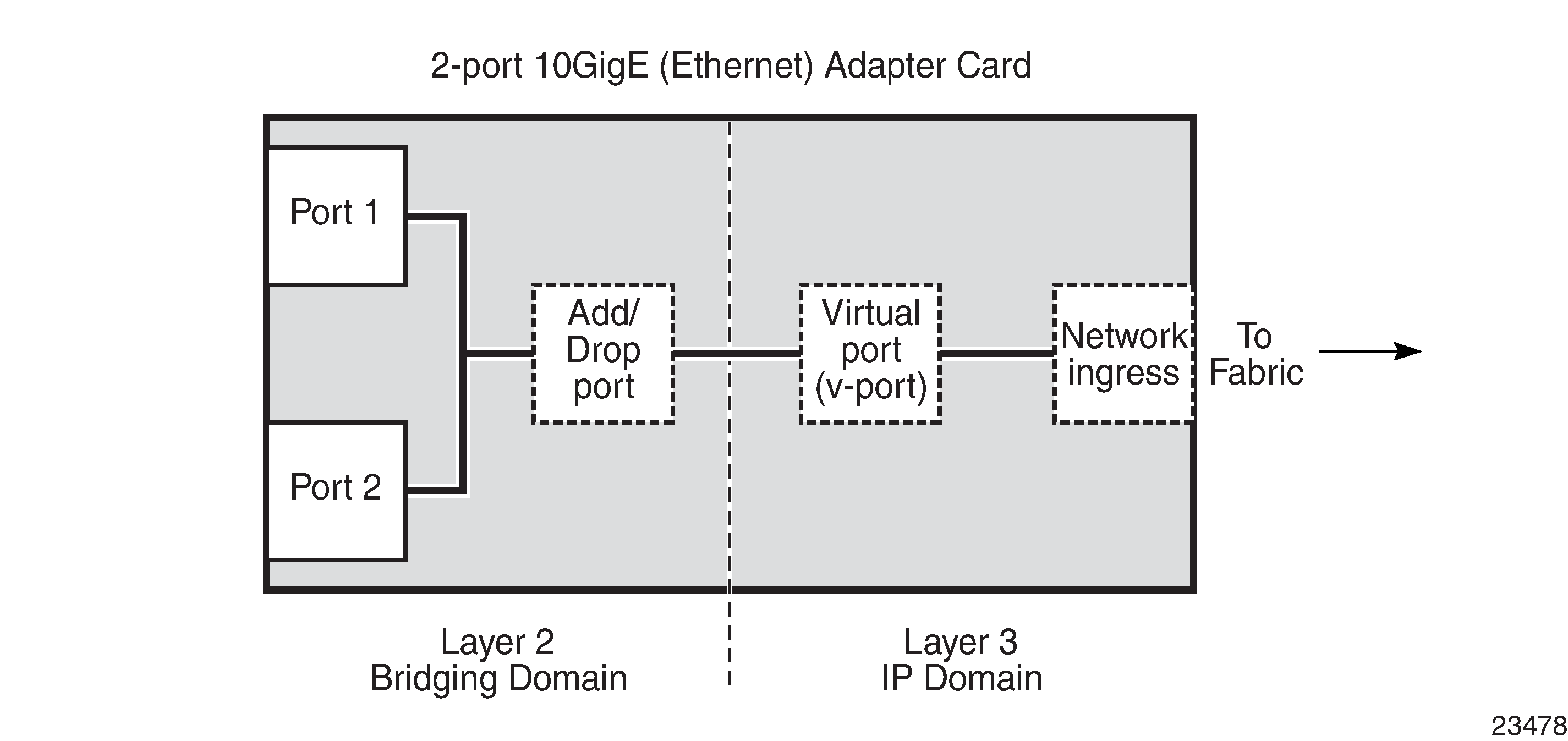

On the 2-port 10GigE (Ethernet) Adapter card and 2-port 10GigE (Ethernet) module, traffic can flow between the Layer 2 bridging domain and the Layer 3 IP domain (see Ingress and egress traffic on a 2-port 10GigE (Ethernet) Adapter card). In the bridging domain, ring traffic flows from one ring port to another, as well as to and from the add/drop port. From the network point of view, traffic from the ring toward the add/drop port and the v-port is considered ingress traffic (drop traffic). Similarly, traffic from the fabric toward the v-port and the add/drop port is considered egress traffic (add traffic).

The 2-port 10GigE (Ethernet) Adapter card or 2-port 10GigE (Ethernet) module functions as an add/drop card to a network side 10 Gb/s optical ring. Conceptually, the card or module should be envisioned as having two domains: a Layer 2 bridging domain where the add/drop function operates and a Layer 3 IP domain where the normal IP processing and IP nodal traffic flows are managed. Ingress and egress traffic flow remains in the context of the nodal fabric. The ring ports are considered to be east-facing and west-facing and are referenced as Port 1 and Port 2. A virtual port (or v-port) provides the interface to the IP domain within the structure of the card or module.

Forwarding classes

Queues can be created for each forwarding class to determine the manner in which the queue output is scheduled and the type of parameters the queue accepts. The 7705 SAR supports eight forwarding classes per SAP. The following table shows the default mapping of these forwarding classes in order of priority, with Network Control having the highest priority.

| FC name | FC designation | Queue type | Typical use |
|---|---|---|---|
| Network Control | NC | Expedited | For network control and traffic synchronization |
| High-1 | H1 | Expedited | For delay/jitter sensitive traffic |
| Expedited | EF | Expedited | For delay/jitter sensitive traffic |
| High-2 | H2 | Expedited | For delay/jitter sensitive traffic |
| Low-1 | L1 | Best Effort | For best-effort traffic |
| Assured | AF | Best Effort | For best-effort traffic |
| Low-2 | L2 | Best Effort | For best-effort traffic |
| Best Effort | BE | Best Effort | For best-effort traffic |

The traffic flows of different forwarding classes are mapped to the queues. This mapping is user-configurable. Each queue has a unique priority. Packets from high-priority queues are scheduled separately, before packets from low-priority queues. More than one forwarding class can be mapped to a single queue. In such a case, the queue type defaults to the priority of the lowest forwarding class (see Queue type for more information about queue type). By default, the following logical order is followed:

FC-8 - NC

FC-7 - H1

FC-6 - EF

FC-5 - H2

FC-4 - L1

FC-3 - AF

FC-2 - L2

FC-1 - BE
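The default ordering and the shared-queue rule described above can be sketched as follows. This is an illustrative model only, not 7705 SAR code: the names `FC_ORDER`, `FC_QUEUE_TYPE`, `fc_rank`, and `queue_type_for` are hypothetical, and the sketch assumes that the Expedited queue type covers NC, H1, EF, and H2 and the Best Effort queue type covers L1, AF, L2, and BE, as the default mapping table suggests.

```python
# Default forwarding classes, lowest (FC-1) to highest (FC-8), per the list above.
FC_ORDER = ["be", "l2", "af", "l1", "h2", "ef", "h1", "nc"]

# Assumed default queue type per forwarding class (from the default mapping table).
FC_QUEUE_TYPE = {
    "nc": "expedited", "h1": "expedited", "ef": "expedited", "h2": "expedited",
    "l1": "best-effort", "af": "best-effort", "l2": "best-effort", "be": "best-effort",
}


def fc_rank(fc: str) -> int:
    """Return the FC number (1 = BE ... 8 = NC)."""
    return FC_ORDER.index(fc) + 1


def queue_type_for(fcs: list[str]) -> str:
    """Queue type when several FCs share one queue: follow the lowest FC."""
    lowest = min(fcs, key=fc_rank)
    return FC_QUEUE_TYPE[lowest]
```

For example, under these assumptions a queue shared by EF and AF would take the queue type of AF, the lower of the two classes.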

At access ingress, traffic can be classified as unicast traffic or one of the multipoint traffic types (broadcast, multicast, or unknown (BMU)). After classification, traffic can be assigned to a queue that is configured to support one of the four traffic types, namely:

unicast (or implicit)

broadcast

multicast

unknown

Scheduling modes

The scheduler modes available on adapter cards are 4-priority and 16-priority. Which modes are supported on a particular adapter card depends on whether the adapter card is a second-generation or third-generation card.

On Gen-3 hardware, 4-priority scheduling mode is the implicit, default scheduling mode and is not user-configurable. Gen-3 platforms with a TDM block support 4-priority scheduling mode. Gen-2 adapter cards support 16-priority and 4-priority scheduling modes.

For more information about differences between Gen-2 and Gen-3 hardware related to scheduling mode QoS behavior, see QoS for Gen-3 adapter cards and platforms.

Intelligent discards

Most 7705 SAR systems are susceptible to network processor congestion if the packet rate of small packets received on a node or card exceeds the processing capacity. If a node or card receives a high rate of small packet traffic, the node or card enters overload mode. Before the introduction of intelligent discards, when a node or card entered an overload state, the network processor would randomly drop packets.

The ‟intelligent discards during overload” feature allows the network processor to discard packets according to a preset priority order. In the egress direction, intelligent discards is applied to traffic entering the card from the fabric.

Egress traffic is discarded in the following order: low-priority out-of-profile user traffic is discarded first, followed by high-priority out-of-profile user traffic, then low-priority in-profile user traffic, high-priority in-profile user traffic, and lastly control plane traffic. In the ingress direction, intelligent discards is applied to traffic entering the card from the physical ports. Ingress traffic is discarded in the following order: low-priority user traffic is always discarded first, followed by high-priority user traffic. This order ensures that low-priority user traffic is always the most susceptible to discards.

In the egress direction, the system differentiates between high-priority and low-priority user traffic based on the internal forwarding class and queue-type fabric header markers. In the ingress direction, the system differentiates between high-priority and low-priority user traffic based on packet header bits. The following table details the classification of user traffic in the ingress direction.

| Fabric header marker | High-priority values | Low-priority values |
|---|---|---|
| MPLS TC | 7 to 4 | 3 to 0 |
| IP DSCP | 63 to 32 | 31 to 0 |
| Eth Dot1p | 7 to 4 | 3 to 0 |
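The discard order and the ingress classification thresholds can be expressed as a small lookup. This is a sketch for illustration, not the network processor's implementation; the marker keys (`"mpls-tc"`, `"ip-dscp"`, `"eth-dot1p"`) and the function name `ingress_priority` are hypothetical.

```python
# Egress discard order during overload, first-discarded first (from the text above).
EGRESS_DISCARD_ORDER = [
    "low-priority out-of-profile user traffic",
    "high-priority out-of-profile user traffic",
    "low-priority in-profile user traffic",
    "high-priority in-profile user traffic",
    "control plane traffic",
]

# Lowest value still treated as high priority, and the maximum value, per marker.
HIGH_PRIORITY_FLOOR = {"mpls-tc": 4, "ip-dscp": 32, "eth-dot1p": 4}
MAX_VALUE = {"mpls-tc": 7, "ip-dscp": 63, "eth-dot1p": 7}


def ingress_priority(marker: str, value: int) -> str:
    """Classify ingress user traffic as 'high' or 'low' priority for overload discards."""
    if not 0 <= value <= MAX_VALUE[marker]:
        raise ValueError(f"{marker} value {value} is out of range")
    return "high" if value >= HIGH_PRIORITY_FLOOR[marker] else "low"
```

With these thresholds, a DSCP value of 46 falls in the high-priority range and a DSCP value of 31 falls in the low-priority range.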

Intelligent discards during overload ensures priority-based handling of traffic and helps existing traffic management implementations. It does not change how QoS-based classification, buffer management, or scheduling operates on the 7705 SAR. If the node or card is not in overload operation mode, there is no change to the way packets are handled by the network processor.

There are no commands to configure intelligent discards during overload; the feature is automatically enabled on the following cards, modules, and ports:

10-port 1GigE/1-port 10GigE X-Adapter card

2-port 10GigE (Ethernet) Adapter card (only on the 2.5 Gb/s v-port)

2-port 10GigE (Ethernet) module (only on the v-port)

8-port Gigabit Ethernet Adapter card

6-port Ethernet 10Gbps Adapter card

Packet Microwave Adapter card

4-port SAR-H Fast Ethernet module

6-port SAR-M Ethernet module

7705 SAR-A Ethernet ports

7705 SAR-Ax Ethernet ports

7705 SAR-Wx Ethernet ports

7705 SAR-M Ethernet ports

7705 SAR-H Ethernet ports

7705 SAR-Hc Ethernet ports

7705 SAR-X Ethernet ports

Buffering

Buffer space is allocated to queues based on the committed buffer space (CBS), the maximum buffer space (MBS) and availability of the resources, and the total amount of buffer space. The CBS and the MBS define the queue depth for a particular queue. The MBS represents the maximum buffer space that is allocated to a particular queue. Whether that much space can actually be allocated depends on buffer usage (that is, the number of other queues and their sizes).

Memory allocation is optimized to guarantee the CBS for each queue. The allocated queue space beyond the CBS is limited by the MBS and depends on the use of buffer space and the guarantees accorded to queues as configured in the CBS.

Buffer pools

The 7705 SAR supports two types of buffer pools that allocate memory as follows:

reserved pool – represents the CBS that is guaranteed for all queues. The reserved pool is limited to a maximum of 75% of the total buffer space.

shared pool – represents the buffer space that remains after the reserved pool has been allocated. The shared pool always has at least 25% of the total buffer space.

Both buffer pools can be displayed in the CLI using the show pools command.
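The relationship between the two pools can be sketched as simple arithmetic. The sketch assumes that the reserved pool equals the sum of the configured CBS values, capped at 75% of the total; the function name `split_pools` and the use of buffer counts as units are illustrative assumptions, not system behavior confirmed by this guide.

```python
def split_pools(total_buffers: int, cbs_per_queue: list[int]) -> tuple[int, int]:
    """Return (reserved, shared) buffer counts for one buffer space."""
    reserved_cap = (total_buffers * 3) // 4           # reserved pool: at most 75%
    reserved = min(sum(cbs_per_queue), reserved_cap)  # CBS guaranteed to queues
    shared = total_buffers - reserved                 # remainder: at least 25%
    return reserved, shared
```

With 1000 buffers and CBS values of 500 and 500, the reserved pool is capped at 750 and the shared pool keeps 250.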

CBS and MBS configuration

On the access side, CBS is configured in bytes and MBS in bytes or kilobytes using the CLI. See, for example, the config>qos>sap-ingress/egress>queue>cbs and mbs configuration commands.

On the network side, CBS and MBS values are expressed as a percentage of the total number of available buffers. If the buffer space is further segregated into pools (for example, ingress and egress, access and network, or a combination of these), the CBS and MBS values are expressed as a percentage of the applicable buffer pool. See the config>qos>network-queue>queue>cbs and mbs configuration commands.

The configured CBS and MBS values are converted to the number of buffers by dividing the CBS or MBS value by a fixed buffer size of 512 bytes or 2304 bytes, depending on the type of adapter card or platform. The number of buffers can be displayed for an adapter card using the show pools command.
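The conversion from configured values to buffer counts can be sketched as follows. The text only states that the value is divided by the fixed buffer size; rounding up for byte values and rounding down for percentages are assumptions made here for illustration, and the function names are hypothetical.

```python
import math

BUFFER_SIZES = (512, 2304)  # fixed buffer sizes in bytes, depending on card or platform


def bytes_to_buffers(value_bytes: int, buffer_size: int) -> int:
    """Access side: convert a CBS or MBS value in bytes to a number of buffers."""
    if buffer_size not in BUFFER_SIZES:
        raise ValueError("buffer size must be 512 or 2304 bytes")
    return math.ceil(value_bytes / buffer_size)  # rounding up is an assumption


def percent_to_buffers(percent: float, pool_buffers: int) -> int:
    """Network side: convert a CBS or MBS percentage of a pool to a number of buffers."""
    return int(pool_buffers * percent / 100)  # rounding down is an assumption
```

The same 8192-byte value therefore maps to 16 buffers on a 512-byte card but only 4 buffers on a 2304-byte card.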

Buffer allocation for multicast traffic

When a packet is being multicast to two or more interfaces on the egress adapter card or block of fixed ports, or when a packet at port ingress is mirrored, one extra buffer per packet is used.

In previous releases, this extra buffer was not added to the queue count. When checking CBS during multicast traffic enqueuing, the CBS was divided by two to prevent buffer overconsumption by the extra buffers. As a result, during multicast traffic enqueuing, the CBS buffer limit for the queue was considered reached when half of the available buffers were in use.

As of Release 8.0 of the 7705 SAR, the CBS is no longer divided by two. Instead, the extra buffers are added to the queue count when enqueuing, and are removed from the queue count when the multicast traffic exits the queue. The full CBS value is used, and the extra buffer allocation is visible in buffer allocation displays.
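The two accounting methods can be compared with a short sketch. It assumes, for simplicity, that each multicast packet occupies one buffer plus one extra buffer; the function name `multicast_cbs_check` and its parameters are hypothetical.

```python
def multicast_cbs_check(packets: int, cbs: int, release_8_or_later: bool) -> tuple[int, bool]:
    """Return (queue_count, cbs_reached) for queued multicast packets."""
    if release_8_or_later:
        # Extra buffers are added to the queue count; the full CBS is used.
        queue_count = packets * 2
        return queue_count, queue_count >= cbs
    # Earlier releases: extra buffers are not counted, so the CBS is halved instead.
    queue_count = packets
    return queue_count, queue_count >= cbs / 2
```

Under this assumption both methods reach the limit at the same number of packets; the difference is that the later method makes the extra buffers visible in the queue count.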

Buffer unit allocation and buffer chaining

Packetization buffers and queues are supported in the packet memory of each adapter card or platform. All adapter cards and platforms allocate a fixed space for each buffer. The 7705 SAR supports two buffer sizes: 512 bytes or 2304 bytes, depending on the type of adapter card or platform.

The adapter cards and platforms that support a buffer size of 2304 bytes do not support buffer chaining (see the description below) and only allow a 1-to-1 correspondence of packets to buffers.

The adapter cards and platforms that support a buffer size of 512 bytes use a method called buffer chaining to process packets that are larger than 512 bytes. To accommodate packets that are larger than 512 bytes, these adapter cards or platforms divide the packet dynamically into a series of concatenated 512-byte buffers. An internal 64-byte header is prepended to the packet, so only 448 bytes of buffer space is available for customer traffic in the first buffer. The remaining customer traffic is split among concatenated 512-byte buffers.

The following table shows the supported buffer sizes on the 7705 SAR adapter cards and platforms. If a version number or variant is not specified, this implies all versions of the adapter card or variants of the platform. Adapter cards and platforms that support buffer chaining have a 512-byte buffer size (‟Yes”); those that do not support buffer chaining have a 2304-byte buffer size (‟No”).

| Adapter card or platform | Buffer space per card/platform (MB) | Buffer chaining support |
|---|---|---|
| 2-port 10GigE (Ethernet) Adapter card | 268; 201 (for L2 bridging domain) | Yes; Yes (each buffer unit is 768 bytes) |
| 2-port 10GigE (Ethernet) module | 201 (for L2 bridging domain) | Yes (each buffer unit is 768 bytes) |
| 2-port OC3/STM1 Channelized Adapter card | 310 | No |
| 4-port OC3/STM1 / 1-port OC12/STM4 Adapter card | 217 | Yes |
| 4-port OC3/STM1 Clear Channel Adapter card | 352 | No |
| 4-port DS3/E3 Adapter card | 280 | No |
| 6-port E&M Adapter card | 38 | No |
| 6-port FXS Adapter card | 38 | No |
| 6-port Ethernet 10Gbps Adapter card | 1177 | Yes |
| 8-port FXO Adapter card | 38 | No |
| 8-port Gigabit Ethernet Adapter card | 268 | Yes |
| 8-port Voice & Teleprotection card | 38 | No |
| 8-port C37.94 Teleprotection card | 38 | No |
| 10-port 1GigE/1-port 10GigE X-Adapter card | 537 | Yes |
| 12-port Serial Data Interface card, version 2 and version 3 | 268 | Yes |
| 16-port T1/E1 ASAP Adapter card | 38 | No |
| 32-port T1/E1 ASAP Adapter card | 57 | No |
| Integrated Services card | 268 | Yes |
| Packet Microwave Adapter card | 268 | Yes |
| 7705 SAR-A | 268 | Yes |
| 7705 SAR-Ax | 268 | Yes |
| 7705 SAR-H | 268 | Yes |
| 7705 SAR-Hc | 268 | Yes |
| 7705 SAR-M | 268 | Yes |
| 7705 SAR-Wx | 268 | Yes |
| 7705 SAR-X (Ethernet ports) (see note 1) | 1177 | Yes |
| 7705 SAR-X (TDM ports) (see note 1) | 46 | Yes |

Note 1: The 7705 SAR-X has three buffer pools. Each block of ports (MDA) has its own buffer pool.

Advantages of buffer chaining

Buffer chaining offers improved efficiency, which is especially evident when smaller packet sizes are transmitted. For example, to queue a 64-byte packet, a card with a fixed buffer of 2304 bytes allocates 2304 bytes, whereas a card with a fixed buffer of 512 bytes allocates only 512 bytes. To queue a 1280-byte packet, a card with a fixed buffer of 2304 bytes allocates 2304 bytes, whereas a card with a fixed buffer of 512 bytes allocates only 1536 bytes (that is, 512 bytes ✕ 3 buffers).
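The arithmetic in the example above can be reproduced with a short sketch. It assumes that the 64-byte internal header occupies space only in the first buffer, that every subsequent chained buffer carries a full 512 bytes of customer traffic, and that on 2304-byte cards the packet fits in a single buffer; the function name `bytes_allocated` is hypothetical.

```python
import math

INTERNAL_HEADER_BYTES = 64  # prepended to the packet on buffer-chaining hardware


def bytes_allocated(packet_bytes: int, buffer_size: int) -> int:
    """Return the buffer memory consumed to queue one packet."""
    if buffer_size == 2304:
        return 2304  # one packet per buffer, no chaining
    if buffer_size == 512:
        buffers = math.ceil((packet_bytes + INTERNAL_HEADER_BYTES) / 512)
        return buffers * 512
    raise ValueError("buffer size must be 512 or 2304 bytes")
```

Under these assumptions, a 448-byte packet still fits in one 512-byte buffer, while a 449-byte packet needs a second chained buffer.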

Per-SAP aggregate shapers (H-QoS) on Gen-2 hardware

This section provides information about per-SAP aggregate shapers for Gen-2 adapter cards and platforms. For information about Gen-3 adapter cards and platforms, see QoS for Gen-3 adapter cards and platforms.

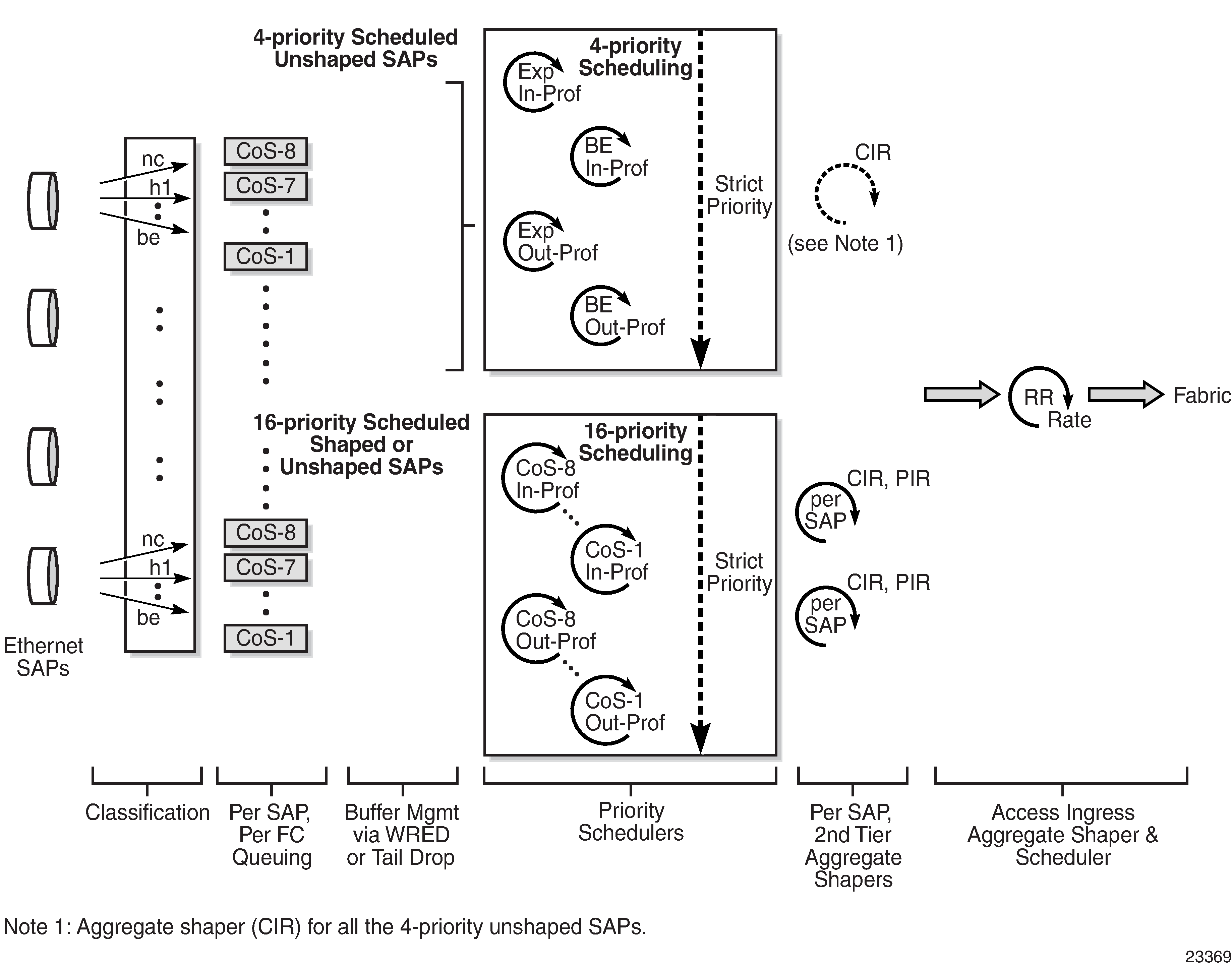

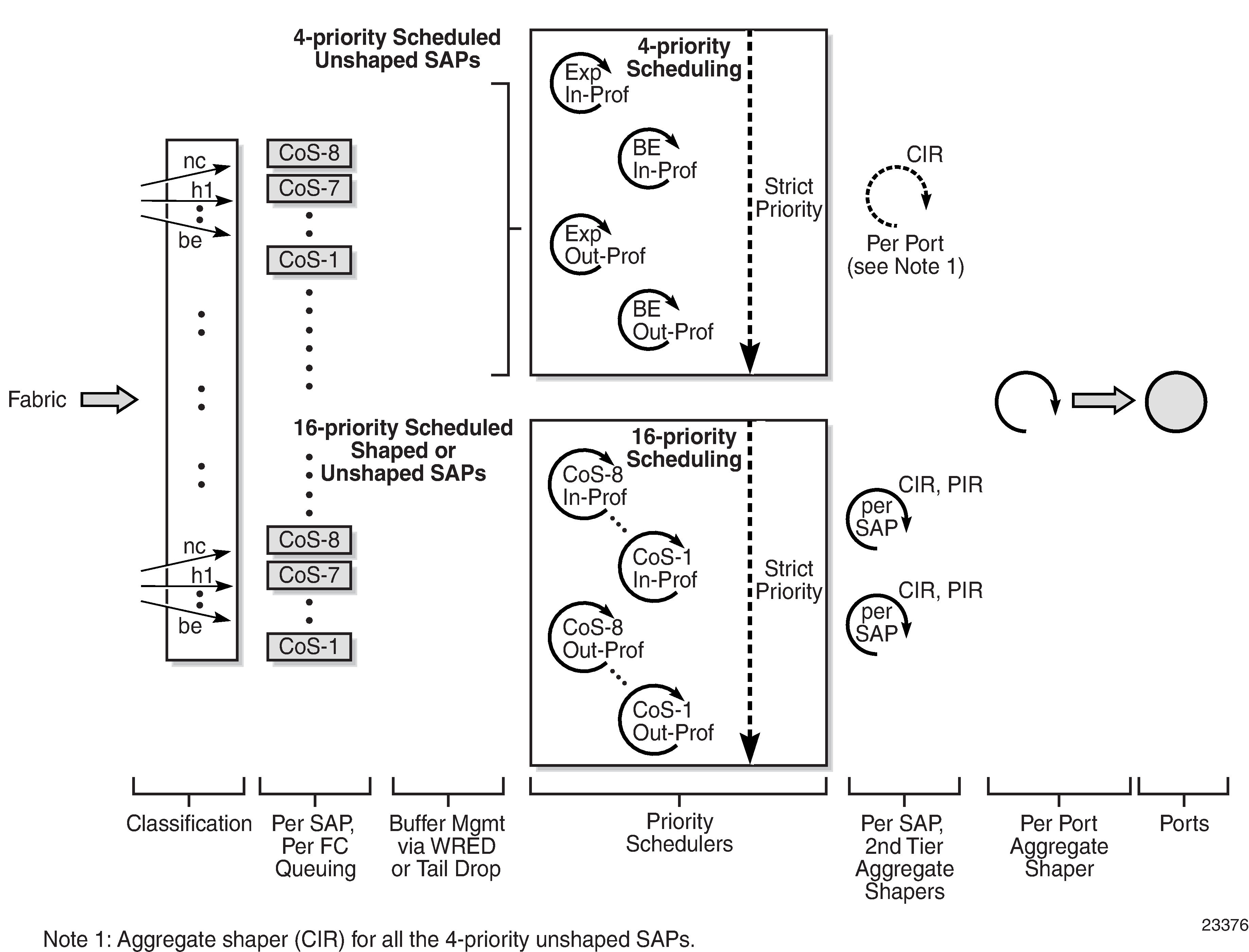

Hierarchical QoS (H-QoS) provides the 7705 SAR with the ability to shape traffic on a per-SAP basis for traffic from up to eight CoS queues associated with that SAP.

On Gen-2 hardware, the per-SAP aggregate shapers apply to access ingress and access egress traffic and operate in addition to the 16-priority scheduler, which must be used for per-SAP aggregate shaping.

The 16-priority scheduler acts as a soft policer, servicing the SAP queues in strict priority order, with conforming traffic (less than CIR) serviced prior to non-conforming traffic (between CIR and PIR). The 16-priority scheduler on its own cannot enforce a traffic limit on a per-SAP basis; to do this, per-SAP aggregate shapers are required (see H-QoS example).
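The "conforming before non-conforming" behavior can be modeled as two strict-priority passes over the queues. This is a simplified rate-based sketch, not the scheduler implementation; the function name `schedule_16_priority`, the dictionary keys, and the assumption that each queue's PIR is at least its CIR are illustrative.

```python
def schedule_16_priority(queues: list[dict], capacity: float) -> list[float]:
    """Return the served rate per queue.

    queues is ordered highest priority first; each entry has 'offered',
    'cir', and 'pir' in the same rate units as capacity.
    """
    served = [0.0] * len(queues)
    # Pass 1: conforming traffic (up to CIR), in strict priority order.
    for i, q in enumerate(queues):
        grant = min(q["offered"], q["cir"], capacity)
        served[i] += grant
        capacity -= grant
    # Pass 2: non-conforming traffic (between CIR and PIR), in strict priority order.
    for i, q in enumerate(queues):
        want = min(q["offered"], q["pir"]) - served[i]
        grant = max(0.0, min(want, capacity))
        served[i] += grant
        capacity -= grant
    return served
```

Note that nothing in this model limits the total served for the SAP as a whole, which is why a per-SAP aggregate shaper is needed to enforce a per-SAP limit.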

The per-SAP shapers are considered aggregate shapers because they shape traffic from the aggregate of one or more CoS queues assigned to the SAP.

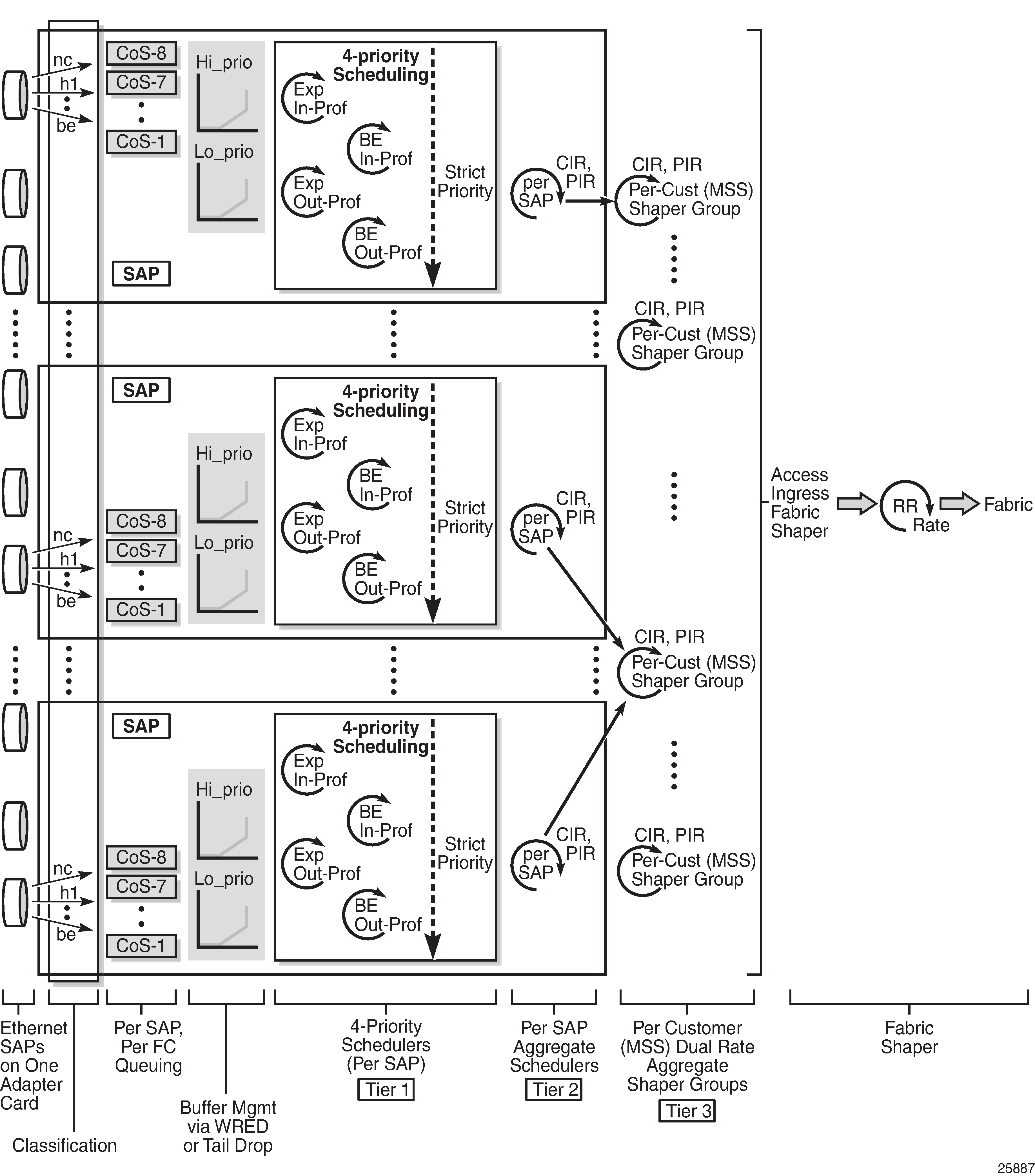

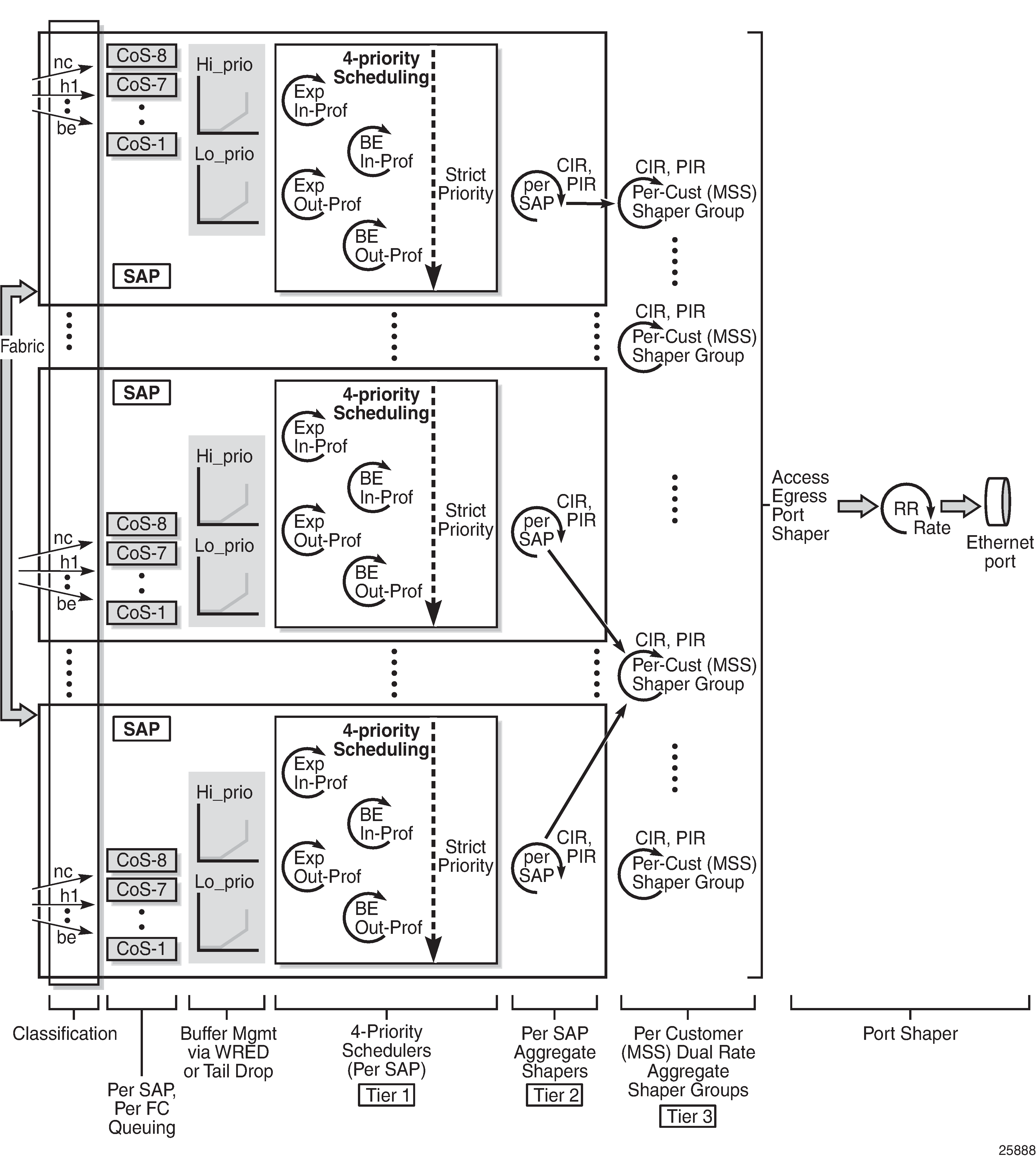

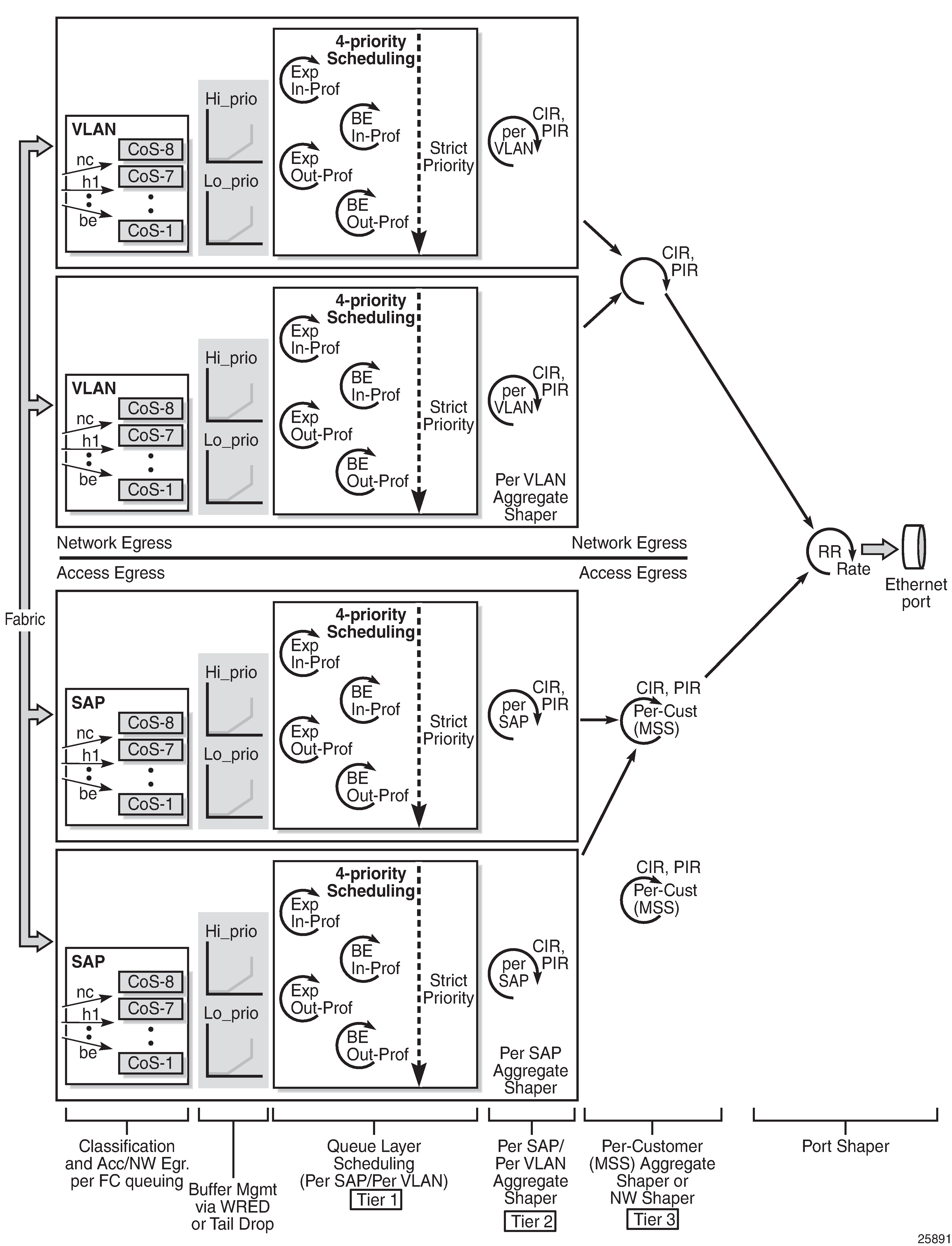

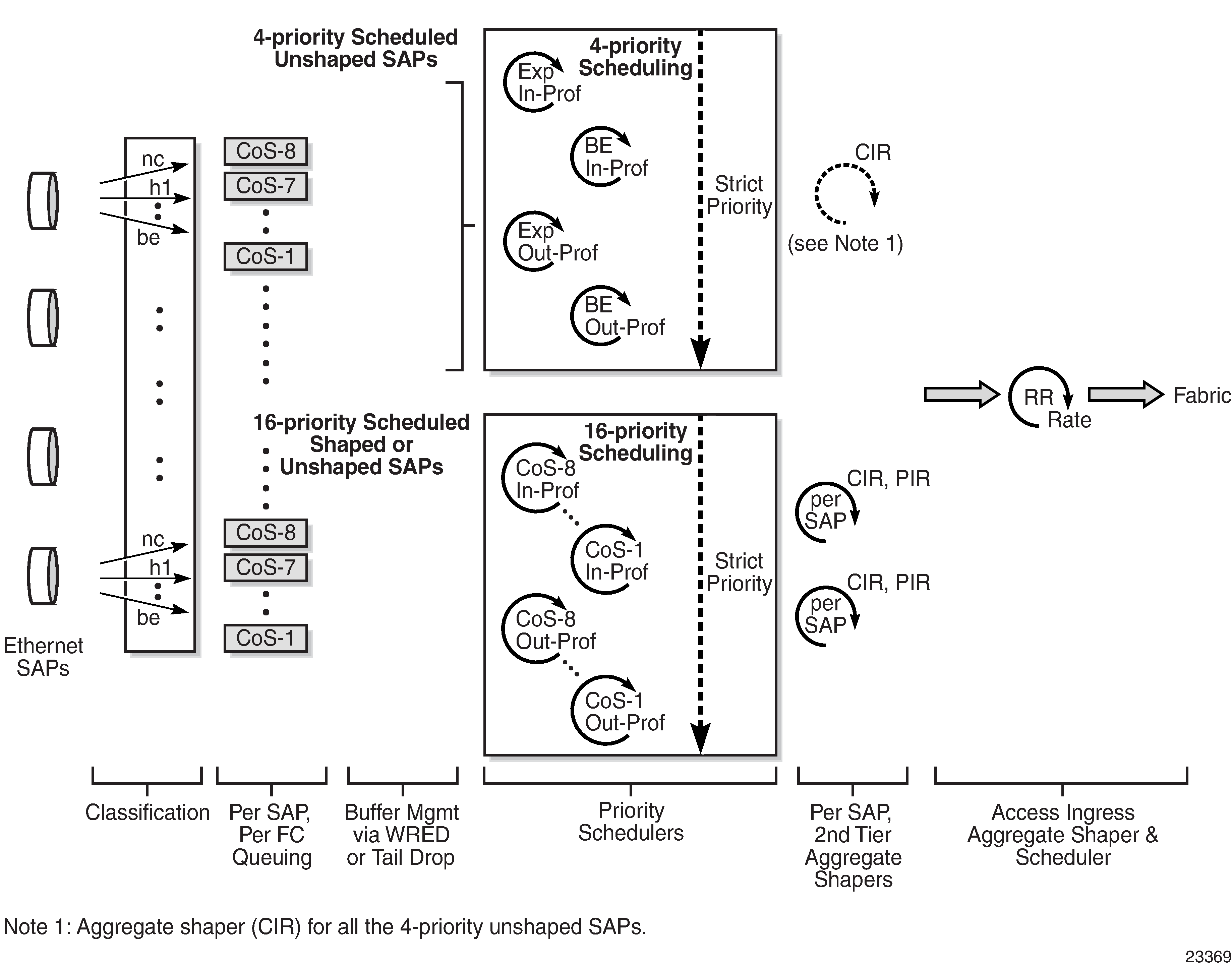

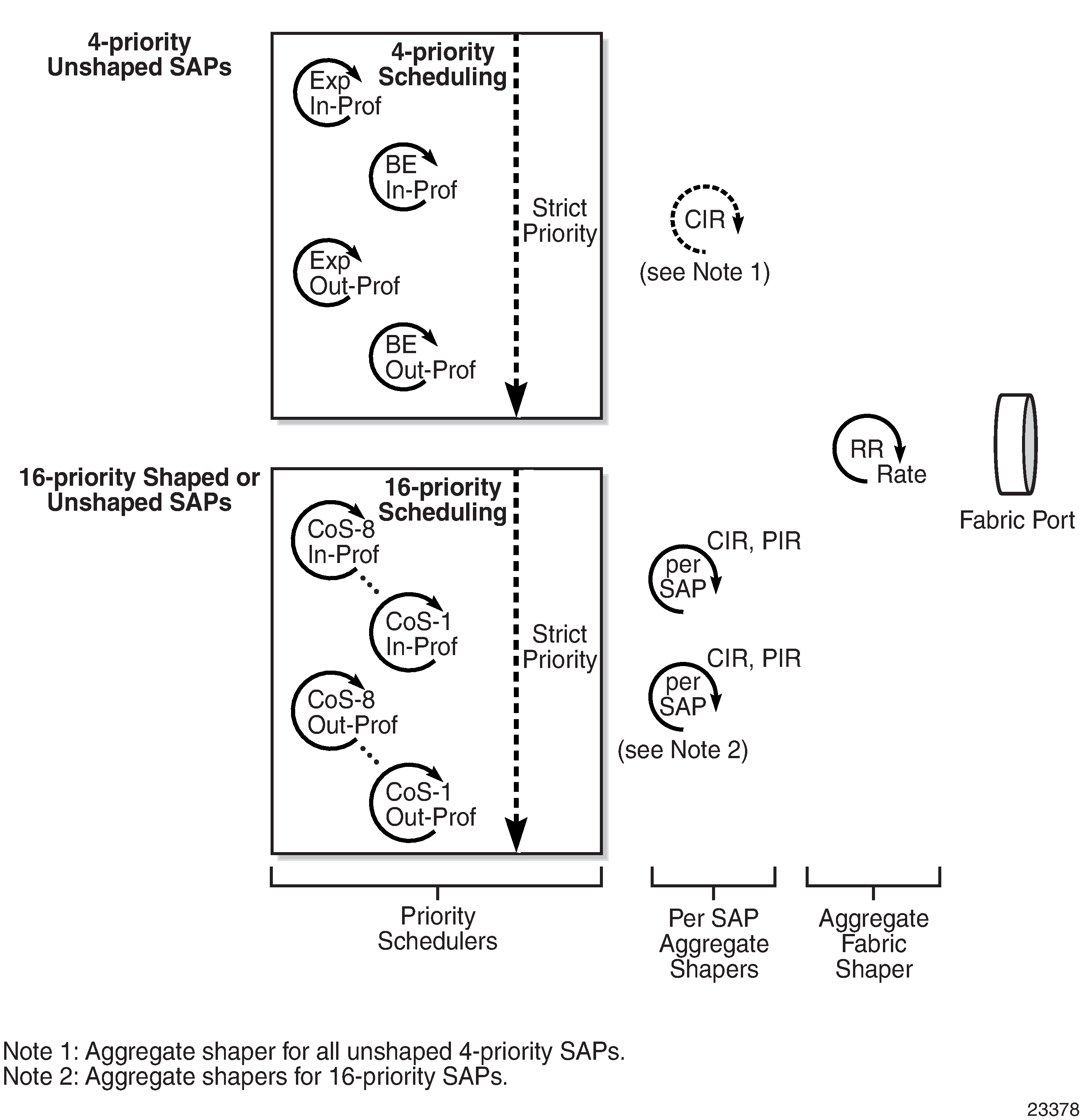

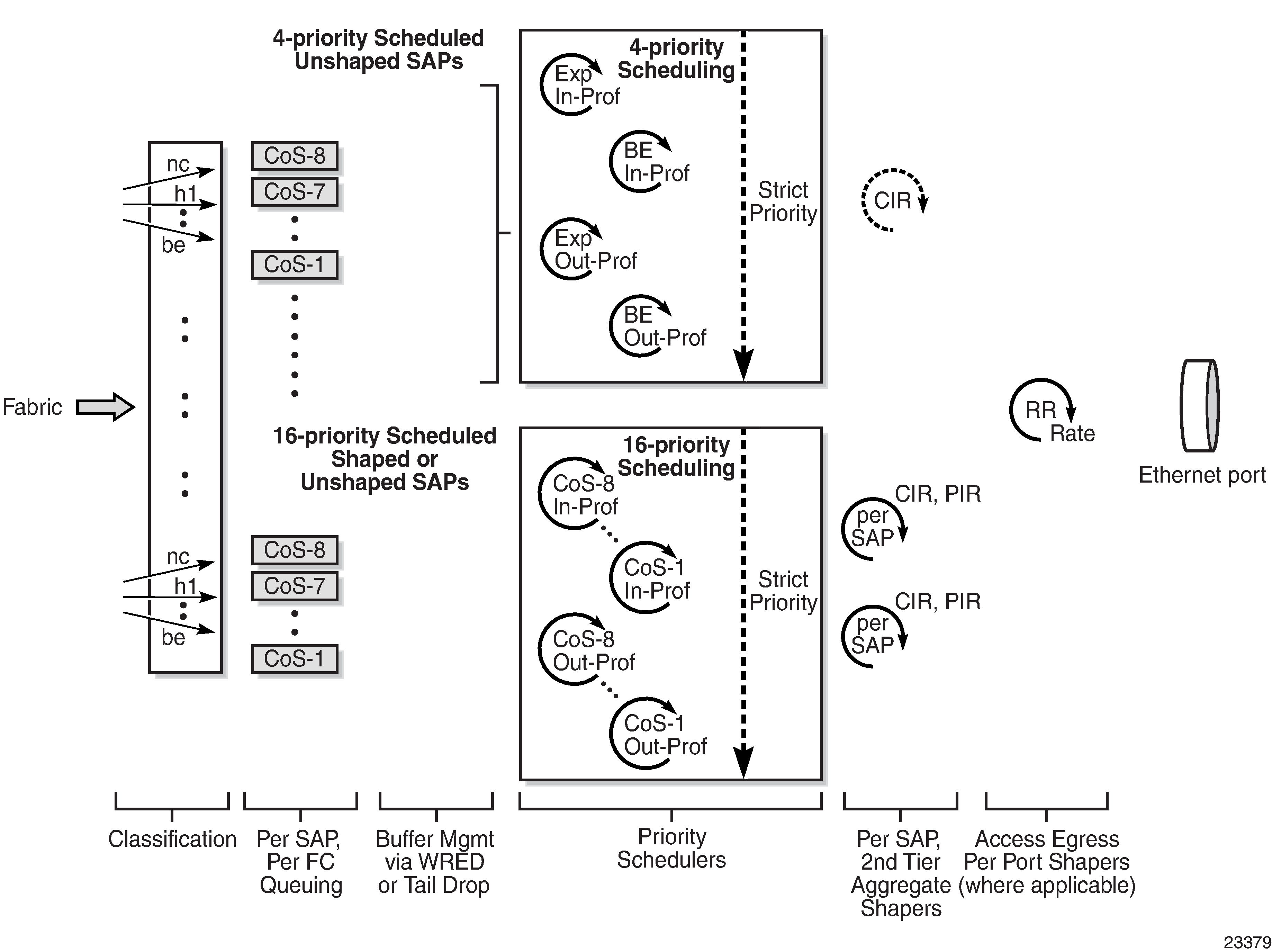

Access ingress scheduling for 4-priority and 16-priority SAPs (with per-SAP aggregate shapers) and Access egress scheduling for 4-priority and 16-priority SAPs (with per-SAP aggregate shapers) (per port) illustrate per-SAP aggregate shapers for access ingress and access egress, respectively. They indicate how shaped and unshaped SAPs are treated.

H-QoS is not supported on the 4-port SAR-H Fast Ethernet module.

Shaped and unshaped SAPs

Shaped SAPs have user-configured rate limits (PIR and CIR)—called the aggregate rate limit—and must use 16-priority scheduling mode. Unshaped SAPs use default rate limits (PIR is maximum and CIR is 0 kb/s) and can use 4-priority or 16-priority scheduling mode.

Shaped 16-priority SAPs are configured with a PIR and a CIR using the agg-rate-limit command in the config>service>service-type service-id>sap context, where service-type is epipe, ipipe, ies, vprn, or vpls (including routed VPLS). The PIR is set using the agg-rate variable and the CIR is set using the cir-rate variable.

Unshaped 4-priority SAPs are considered unshaped by definition of the default PIR and CIR values (PIR is maximum and CIR is 0 kb/s). Therefore, they do not require any configuration other than to be set to 4-priority scheduling mode.

Unshaped 16-priority SAPs are created when 16-priority scheduling mode is selected and the default rate limits are left in place (PIR is maximum and CIR is 0 kb/s), which are the same default settings as for a 4-priority SAP. The main reason for preferring unshaped SAPs using 16-priority scheduling over unshaped SAPs using 4-priority scheduling is to have coherent scheduler behavior (one scheduling model) across all SAPs.

In order for unshaped 4-priority SAPs to compete fairly for bandwidth with 16-priority shaped and unshaped SAPs, a single, aggregate CIR for all the 4-priority SAPs can be configured. This aggregate CIR is applied to all the 4-priority SAPs as a group, not to individual SAPs. In addition, the aggregate CIR is configured differently for access ingress and access egress traffic. On the 7705 SAR-8 Shelf V2 and 7705 SAR-18, access ingress is configured in the config>qos>fabric-profile context. On the 7705 SAR-M, 7705 SAR-H, 7705 SAR-Hc, 7705 SAR-A, 7705 SAR-Ax, and 7705 SAR-Wx, access ingress is configured in the config>system>qos>access-ingress-aggregate-rate context. For all platforms, access egress configuration uses the config>port>ethernet context.

For more information about access ingress scheduling and traffic arbitration from the 16-priority and 4-priority schedulers toward the fabric, see Access ingress per-SAP aggregate shapers (access ingress H-QoS).

Per-SAP aggregate shaper support

The per-SAP aggregate shapers are supported in both access ingress and access egress directions and can be enabled on the following Ethernet access ports:

6-port Ethernet 10Gbps Adapter card

8-port Gigabit Ethernet Adapter card

10-port 1GigE/1-port 10GigE X-Adapter card (10-port GigE mode)

Packet Microwave Adapter card

7705 SAR-A

7705 SAR-Ax

7705 SAR-M

7705 SAR-H (all Ethernet access ports except those on the 4-port SAR-H Fast Ethernet module)

7705 SAR-Hc

7705 SAR-Wx

7705 SAR-X

H-QoS example

A typical example in which H-QoS is used is where a transport provider uses a 7705 SAR as a PE device and sells 100 Mb/s of fixed bandwidth for point-to-point Internet access, and offers premium treatment to 10% of the traffic. A customer can mark up to 10% of their critical traffic such that it is classified into high-priority queues and serviced prior to low-priority traffic.

Without H-QoS, there is no way to enforce a limit to ensure that the customer does not exceed the leased 100 Mb/s bandwidth, as illustrated in the following two scenarios:

If a queue hosting high-priority traffic is serviced at 10 Mb/s and the low-priority queue is serviced at 90 Mb/s, then at a moment when the customer transmits less than 10 Mb/s of high-priority traffic, the customer bandwidth requirement is not met (the transport provider transports less traffic than the contracted rate).

If the scheduling rate for the high-priority queue is set to 10 Mb/s and the rate for low-priority traffic is set to 100 Mb/s, then when the customer transmits both high- and low-priority traffic, the aggregate amount of bandwidth consumed by customer traffic exceeds the contracted rate of 100 Mb/s and the transport provider transports more traffic than the contracted rate.

The second-tier shaper—that is, the per-SAP aggregate shaper—is used to limit the traffic at a configured rate on a per-SAP basis. The per-queue rates and behavior are not affected when the aggregate shaper is enabled. That is, as long as the aggregate rate is not reached then there are no changes to the behavior. If the aggregate rate limit is reached, then the per-SAP aggregate shaper throttles the traffic at the configured aggregate rate while preserving the 16-priority scheduling priorities that are used on shaped SAPs.
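The two scenarios and the effect of the aggregate shaper can be checked with a small model of a SAP with one high-priority and one low-priority queue. The function name `serve_sap` and its parameters are hypothetical, and the model treats rates as steady-state values in Mb/s.

```python
def serve_sap(offered_high, offered_low, pir_high, pir_low, agg_rate=None):
    """Return (high, low) served rates in Mb/s.

    Queues are served in strict priority order, each limited by its own PIR.
    If agg_rate is given, the per-SAP aggregate shaper caps the total.
    """
    budget = float("inf") if agg_rate is None else agg_rate
    high = min(offered_high, pir_high, budget)
    low = min(offered_low, pir_low, budget - high)
    return high, low
```

With queue rates of 10 and 90 and no aggregate shaper, a customer offering 5 Mb/s of high-priority and 100 Mb/s of low-priority traffic is served only 95 Mb/s. With queue rates of 10 and 100 and no aggregate shaper, the same customer offering 10 and 100 is served 110 Mb/s. Adding an aggregate rate of 100 Mb/s to the second configuration yields exactly 100 Mb/s in both cases while high-priority traffic is still served first.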

Per-VLAN network egress shapers

This section provides information about per-VLAN network egress shapers for Gen-2 adapter cards and platforms. For information about Gen-3 adapter cards and platforms, see QoS for Gen-3 adapter cards and platforms.

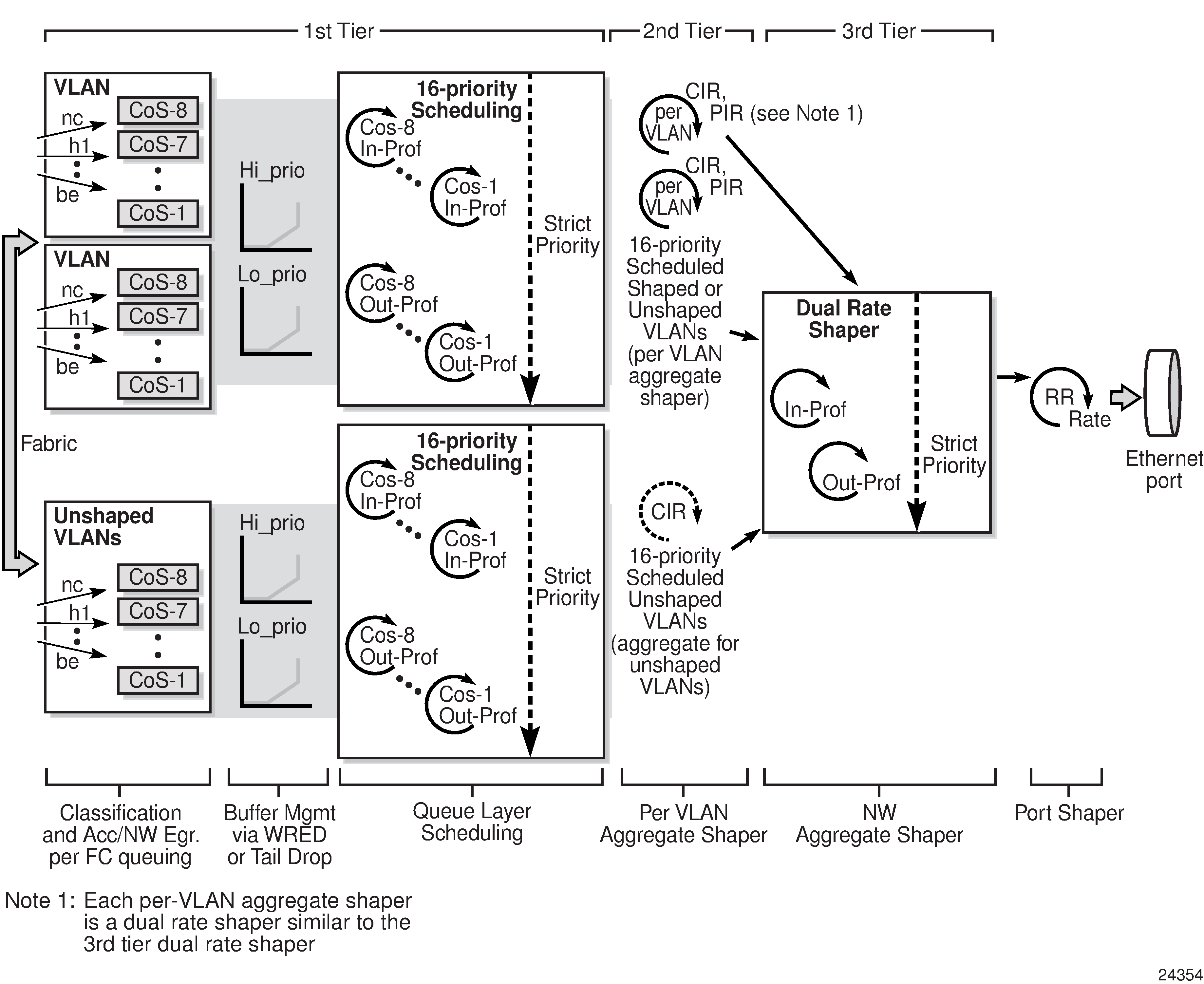

The 7705 SAR supports a set of eight network egress queues on a per-port or on a per-VLAN basis for network Ethernet ports. Eight unique per-VLAN CoS queues are created for each VLAN when a per-VLAN shaper is enabled. When using per-VLAN shaper mode, in addition to the per-VLAN eight CoS queues, there is a single set of eight queues for hosting traffic from all unshaped VLANs, if any. VLAN shapers are enabled on a per-interface basis (that is, per VLAN) when a network queue policy is assigned to the interface. See Per-VLAN shaper support for a list of cards and nodes that support per-VLAN shapers.

On a network port with dot1q encapsulation, shaped and unshaped VLANs can coexist. In such a scenario, each shaped VLAN has its own set of eight CoS queues and is shaped with its own configured dual-rate shaper. The remaining VLANs (that is, the unshaped VLANs) are serviced using the unshaped-if-cir rate, which is configured using the config>port>ethernet>network>egress>unshaped-if-cir command. Assigning a rate to the unshaped VLANs is required for arbitration between the shaped VLANs and the bulk (aggregate) of unshaped VLANs, where each shaped VLAN has its own shaping rate while the aggregate of the unshaped VLANs has a single rate assigned to it.

Per-VLAN shapers are supported on dot1q-encapsulated ports. They are not supported on null- or qinq-encapsulated ports.

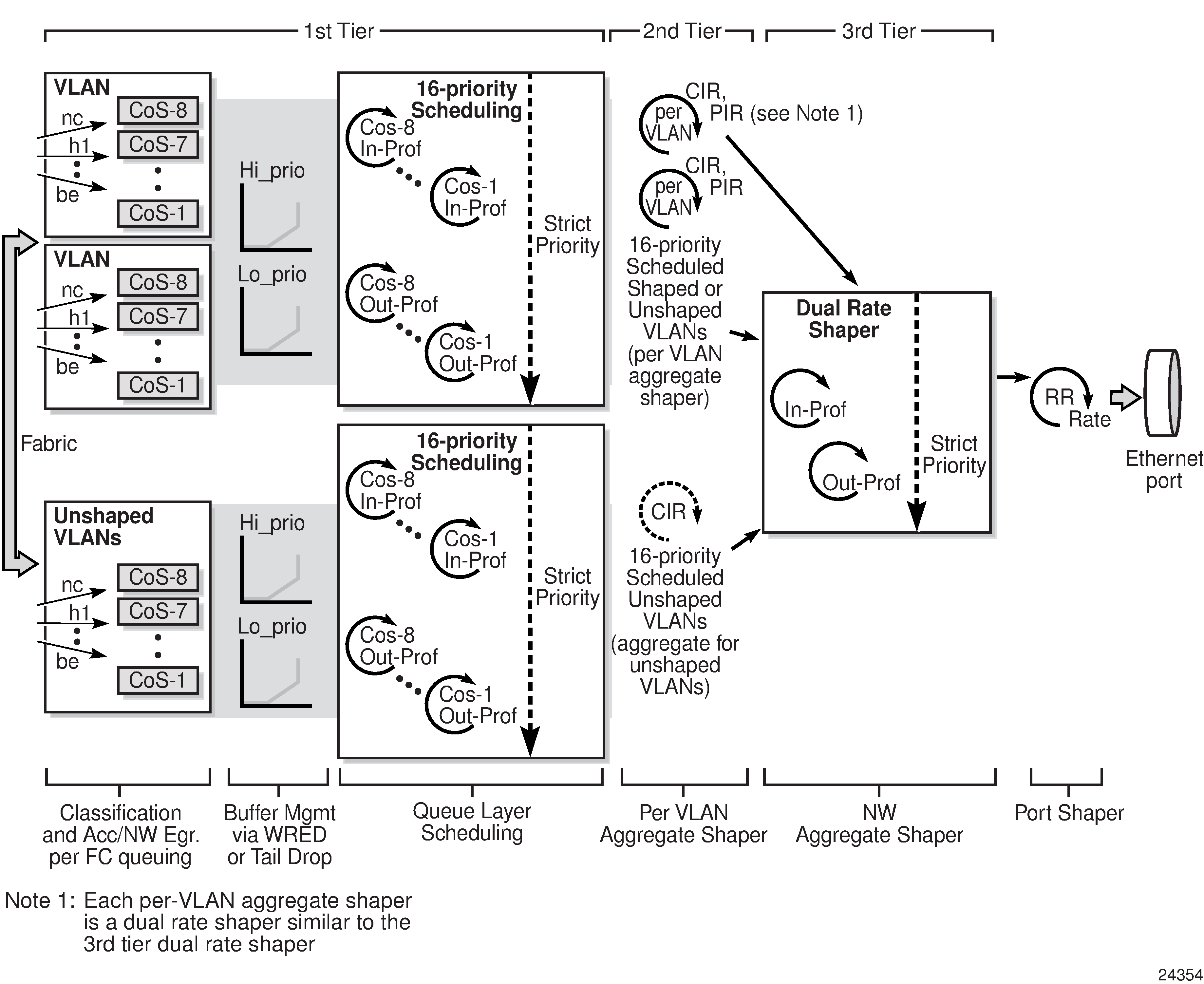

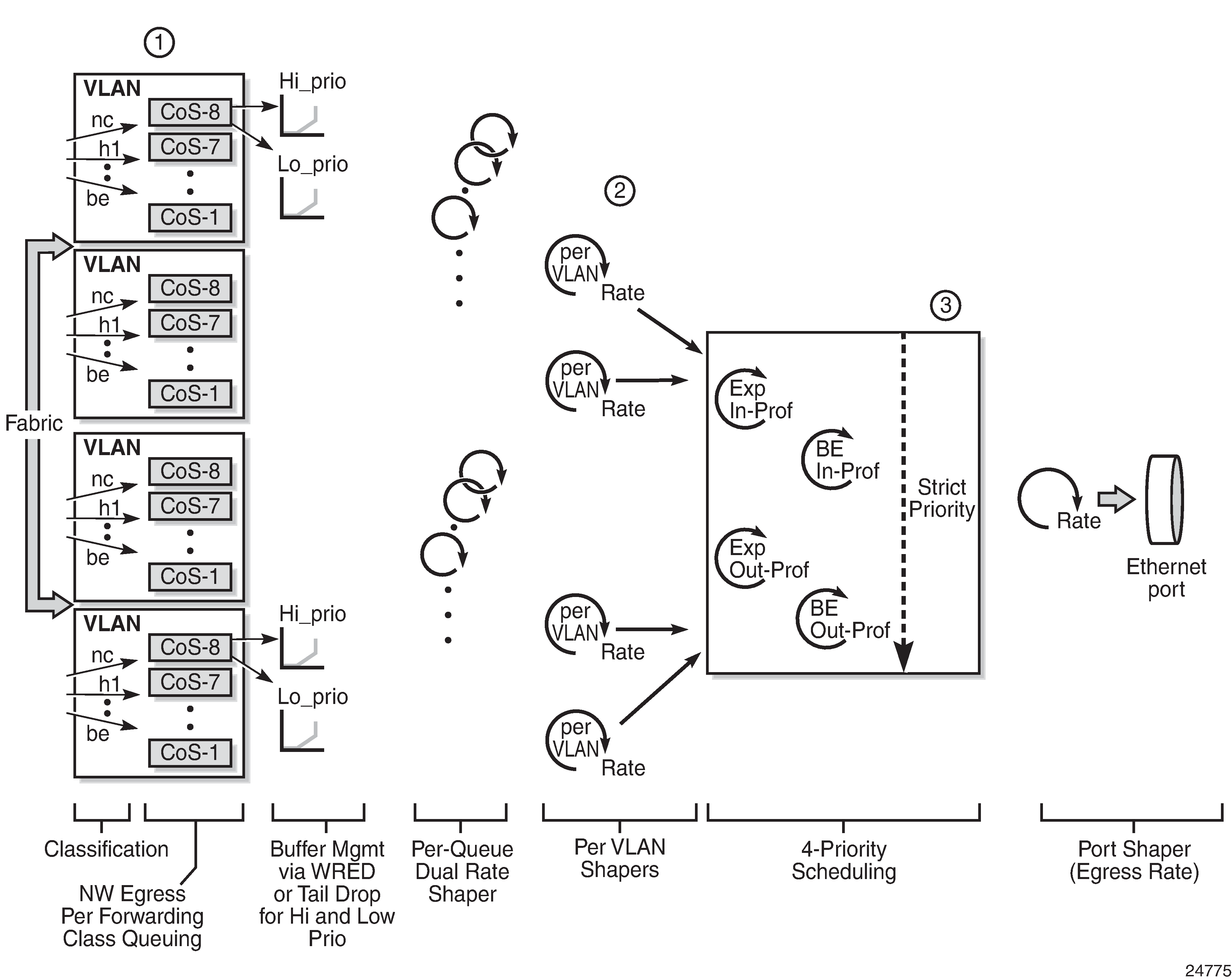

The following figure illustrates the queuing and scheduling blocks for network egress VLAN traffic.

Shaped and unshaped VLANs

Shaped VLANs have user-configured rate limits (PIR and CIR)—called the aggregate rate limit—and must use 16-priority scheduling mode. Shaped VLANs operate on a per-interface basis and are enabled after a network queue policy is assigned to the interface. If a VLAN does not have a network queue policy assigned to the interface, it is considered an unshaped VLAN.

To configure a shaped VLAN with aggregate rate limits, use the agg-rate-limit command in the config>router>if>egress context. If the VLAN shaper is not enabled, the agg-rate-limit settings do not apply. The default aggregate rate limit (PIR) is set to the port egress rate.

Unshaped VLANs use default rate limits (PIR is the maximum possible port rate and CIR is 0 kb/s) and use 16-priority scheduling mode. All unshaped VLANs are classified, queued, buffered, and scheduled into an aggregate flow that gets prepared for third-tier arbitration by a single VLAN aggregate shaper.

In order for the aggregated unshaped VLANs to compete fairly for bandwidth with the shaped VLANs, a single, aggregate CIR for all the unshaped VLANs can be configured using the unshaped-if-cir command. The aggregate CIR is applied to all the unshaped VLANs as a group, not to individual VLANs, and is configured in the config>port>ethernet>network>egress context.

Per-VLAN shaper support

The following cards and nodes support network egress per-VLAN shapers:

6-port Ethernet 10Gbps Adapter card

8-port Gigabit Ethernet Adapter card

10-port 1GigE/1-port 10GigE X-Adapter card (1-port 10GigE mode and 10-port 1GigE mode)

Packet Microwave Adapter card (includes 1+1 redundancy)

v-port on the 2-port 10GigE (Ethernet) Adapter card/module

7705 SAR-A

7705 SAR-Ax

7705 SAR-H

7705 SAR-Hc

7705 SAR-M

7705 SAR-Wx

7705 SAR-X

- Fast Ethernet ports (including ports 9 to 12 on the 7705 SAR-A)

- Gigabit Ethernet ports in Fast Ethernet mode

- non-datapath Ethernet ports (for example, the Management port)

VLAN shaper applications

This section describes the following two scenarios: VLAN shapers for dual uplinks and VLAN shapers for aggregation site.

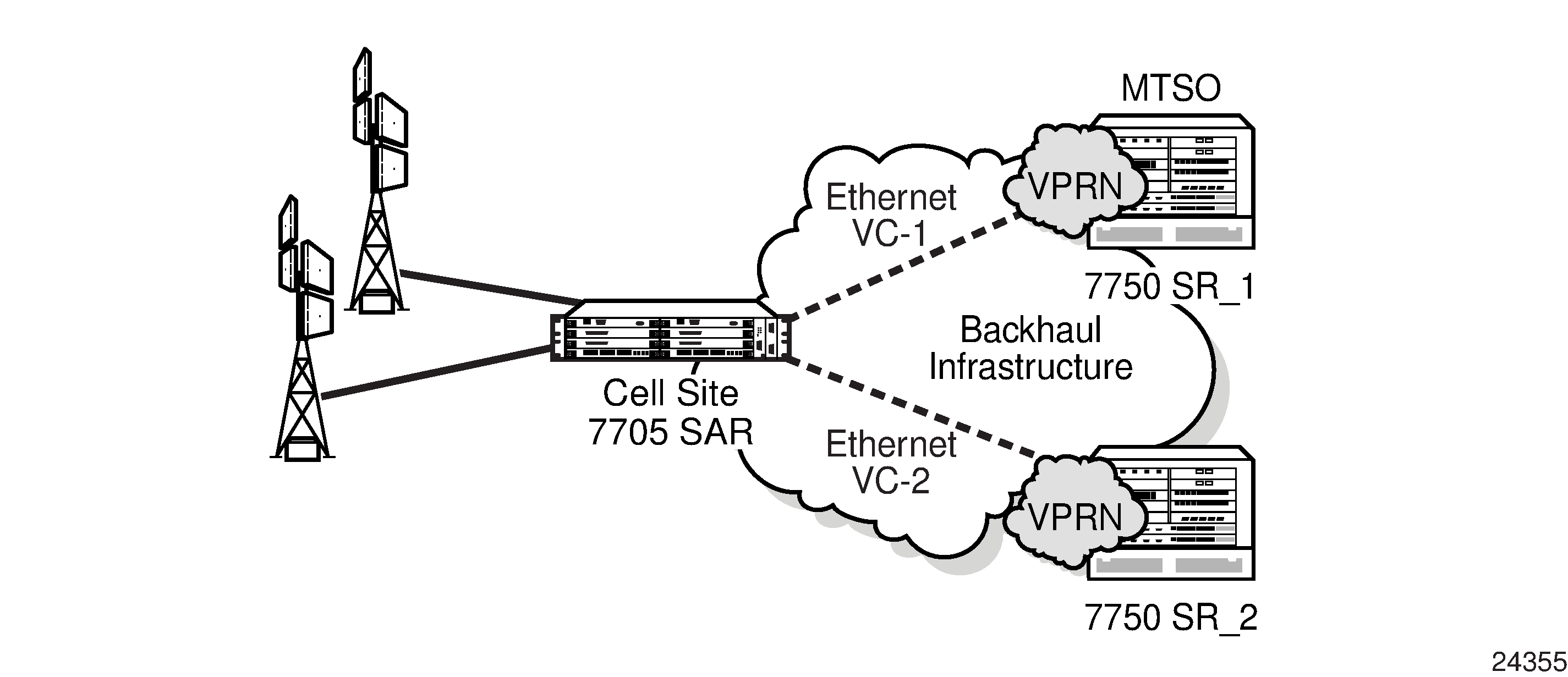

VLAN shapers for dual uplinks

One of the main uses of per-VLAN network egress shapers is to enable load balancing across dual uplinks out of a spoke site. VLAN shapers for dual uplinks represents a typical hub-and-spoke mobile backhaul topology. To ensure high availability through the use of redundancy, a mobile operator invests in dual 7750 SR nodes at the MTSO. Dual 7750 SR nodes at the MTSO offer equipment protection, as well as protection against backhaul link failures.

In this example, the cell site 7705 SAR is dual-homed to 7750 SR_1 and SR_2 at the MTSO, using two disjoint Ethernet virtual connections (EVCs) leased from a transport provider. Typically, the EVCs have the same capacity and operate in a forwarding/standby manner. One of the EVCs, toward one of the 7750 SR nodes, transports all the user/mobile traffic to and from the cell site at any given time. The other EVC transports only minor volumes of control plane traffic between network elements (the 7705 SAR and the 7750 SR). Leasing two EVCs with the same capacity and using only one of them actively wastes bandwidth and is expensive (the mobile operator pays for two EVCs with the same capacity).

Mobile operators with increasing volumes of mobile traffic look for ways to use both of the EVCs simultaneously, in an active/active manner. In this case, using per-VLAN shapers would ensure that each EVC is loaded up to the leased capacity. Without per-VLAN shapers, the 7705 SAR supports a single per-port shaper, which does not meet the active/active requirement:

If the egress rate is set to twice the EVC capacity, either one of the EVCs can end up with more traffic than its capacity.

If the egress rate is set to the EVC capacity, half of the available bandwidth can never be consumed, which is similar to the 7705 SAR having no per-VLAN egress shapers.
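The two per-port cases, and the per-VLAN alternative, can be illustrated with simple bounds. This sketch assumes a single per-port shaper cannot distinguish between the EVCs, so in the worst case the whole port rate lands on one EVC; the function names and the returned dictionary keys are hypothetical.

```python
def per_port_worst_case(evc_capacity: float, port_rate: float, evc_count: int = 2) -> dict:
    """Bounds for a single per-port shaper shared by all EVCs."""
    total_leased = evc_count * evc_capacity
    return {
        # All of the port rate may end up on one EVC.
        "can_exceed_evc_capacity": port_rate > evc_capacity,
        # Fraction of the total leased capacity that can ever be used.
        "usable_fraction": min(port_rate, total_leased) / total_leased,
    }


def per_vlan_shaped(offered: list[float], evc_capacity: float) -> list[float]:
    """With per-VLAN shapers, each EVC is limited to its own leased capacity."""
    return [min(rate, evc_capacity) for rate in offered]
```

For two 100 Mb/s EVCs, a port rate of 200 Mb/s allows one EVC to be overloaded, and a port rate of 100 Mb/s leaves half of the leased capacity unusable, whereas per-VLAN shaping caps each EVC at 100 Mb/s independently.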

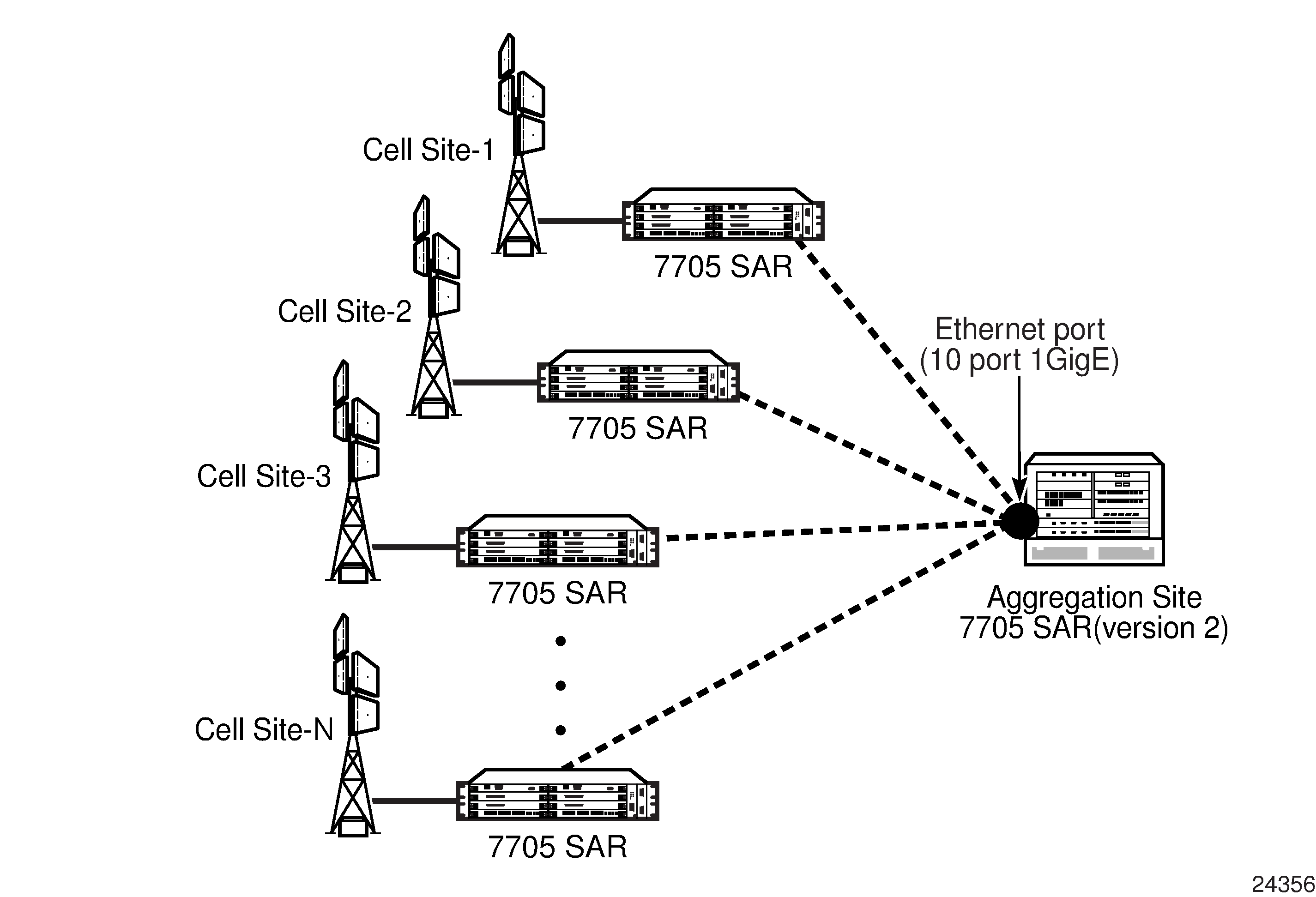

VLAN shapers for aggregation site

Another typical use of per-VLAN shapers at network egress is shown in VLAN shapers in aggregation site scenario. The figure shows a hub-and-spoke mobile backhaul network where EVCs leased from a transport provider are groomed to a single port, typically a 10-Gigabit Ethernet or a 1-Gigabit Ethernet port, at the hand-off point at the hub site. Traffic from different cell sites is handed off to the aggregation node over a single port, where each cell site is uniquely identified by the VLAN assigned to it.

In the network egress direction of the aggregation node, per-VLAN shaping is required to ensure traffic to different cell sites is shaped at the EVC rate. The EVC for each cell site would typically have a different rate. Therefore, every VLAN feeding into a particular EVC needs to be shaped at its own rate. For example, compare a relatively small cell site (Cell Site-1) at 20 Mb/s rate with a relatively large cell site (Cell Site-2) at 200 Mb/s rate. Without the granularity of per-VLAN shaping, shaping only at the per-port level cannot ensure that an individual EVC does not exceed its capacity.

Per-customer aggregate shapers (multiservice site) on Gen-2 hardware

This section provides information about per-customer aggregate shapers for Gen-2 adapter cards and platforms. For information about Gen-3 adapter cards and platforms, see QoS for Gen-3 adapter cards and platforms.

A per-customer aggregate shaper is an aggregate shaper into which multiple SAP aggregate shapers can feed. The SAPs can be shaped at a desired rate called the Multiservice Site (MSS) aggregate rate. At ingress, SAPs that are bound to a per-customer aggregate shaper can span a whole Ethernet MDA, meaning that SAPs mapped to the same MSS can reside on any port on a given Ethernet MDA.

At egress, SAPs that are bound to a per-customer aggregate shaper can only span a port. Toward the fabric at ingress and toward the port at egress, multiple per-customer aggregate shapers are shaped at their respective configured rates to ensure fair sharing of available bandwidth among different per-customer aggregate shapers. Deep ingress queuing capability ensures that traffic bursts are absorbed rather than dropped. Multi-tier shapers are based on an end-to-end backpressure mechanism that uses the following order (egress is given as an example):

per-port egress rate (if configured), backpressures to

per-customer aggregate shapers, backpressures to

per-SAP aggregate shapers, backpressures to

per-CoS queues (in the scheduling priority order)
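The backpressure order listed above can be pictured as nested rate budgets, where each tier limits what the tier below it may send. The following Python sketch is a simplified model under stated assumptions: the rates, the names, and the rule that sibling shapers are served in listed order are hypothetical, and the sketch does not reproduce the fair-sharing behavior of the actual hardware.

```python
def serve_queues(budget, queues):
    """Serve CoS queues in scheduling priority order until the budget runs out.

    queues: list of (name, demand), already sorted from highest to lowest priority.
    """
    served = {}
    for name, demand in queues:
        grant = min(demand, budget)
        served[name] = grant
        budget -= grant
    return served

def serve_port(port_rate, customers):
    """Apply the port rate, then per-customer rates, then per-SAP rates."""
    remaining_port = port_rate
    result = {}
    for cust_name, cust in customers.items():
        # The per-customer (MSS) shaper is limited by what the port still allows.
        cust_budget = min(cust["rate"], remaining_port)
        cust_used = 0
        for sap_name, sap in cust["saps"].items():
            # The per-SAP shaper is limited by what the customer shaper still allows.
            sap_budget = min(sap["rate"], cust_budget - cust_used)
            served = serve_queues(sap_budget, sap["queues"])
            result[(cust_name, sap_name)] = served
            cust_used += sum(served.values())
        remaining_port -= cust_used
    return result

# Hypothetical configuration, all values in Mb/s.
customers = {
    "cust_a": {
        "rate": 60,
        "saps": {
            "sap_1": {"rate": 50, "queues": [("expedited", 30), ("best_effort", 40)]},
            "sap_2": {"rate": 50, "queues": [("expedited", 20)]},
        },
    },
}

result = serve_port(100, customers)
print(result)
```

Here the per-customer rate of 60 Mb/s, not the per-SAP rates, is what holds back the best-effort queue of sap_1 and the expedited queue of sap_2.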

To configure per-customer aggregate shaping, a shaper policy must be created and shaper groups must be created within that shaper policy. For access ingress per-customer aggregate shaping, a shaper policy must be assigned to an Ethernet MDA and SAPs on that Ethernet MDA must be bound to a shaper group within the shaper policy bound to that Ethernet MDA. For access egress per-customer aggregate shaping, a shaper policy must be assigned to a port and SAPs on that port must be bound to a shaper group within the shaper policy bound to that port. The unshaped SAP shaper group within the policy provides the shaper rate for all the unshaped SAPs (4-priority scheduled SAPs). For each shaped SAP, however, an ingress or egress shaper group can be specified. For more information about shaper policies, see Applying a shaper QoS policy and shaper groups.

The access ingress shaper policy is configured at the MDA level for fixed platforms. The default value for an access ingress shaper policy for each MDA and module is blank, as configured using the no shaper-policy command. On all 7705 SAR fixed platforms (with the exception of the 7705 SAR-X), when no MSS is configured, the existing access ingress aggregate rate is used as the shaper rate for the bulk of access ingress traffic. In order to use MSS, a shaper policy must be assigned to the access ingress interface of one MDA, and the shaper policy change is cascaded to all MDAs and modules in the chassis.

Before the access ingress shaper policy is assigned, the config system qos access-ingress-aggregate-rate 10000000 unshaped-sap-cir max command must be configured. Once a shaper policy is assigned to an access ingress MDA, the values configured using the access-ingress-aggregate-rate command cannot be changed.

On all 7705 SAR fixed platforms (with the exception of the 7705 SAR-X), when a shaper policy is assigned to an Ethernet MDA for access ingress aggregate shaping, it is automatically assigned to all the Ethernet MDAs in that chassis. The shaper group members contained in the shaper policy span all the Ethernet MDAs. SAPs on different Ethernet MDAs configured with the same ingress shaper group will share the shaper group rate.

Once the first MSS is configured, traffic from the designated SAPs is mapped to the MSS and shaped at the configured rate. The remainder of the traffic is then shaped according to the configured unshaped SAP rate. When a second MSS is added, SAPs that are mapped to the second MSS are shaped at the configured rate and traffic is arbitrated between the first MSS, the second MSS and unshaped SAP traffic.

In the access egress direction, the default shaper policy is assigned to each MSS-capable port. Ports that cannot support MSS are assigned a blank value, as configured using the no shaper-policy command. The default egress shaper group is assigned to each egress SAP that supports MSS. If the SAP does not support MSS, the egress SAP is assigned a blank value, as configured using the no shaper-group command.

MSS support

The following cards, modules, and platforms support MSS:

6-port Ethernet 10Gbps Adapter card

8-port Gigabit Ethernet Adapter card

10-port 1GigE/1-port 10GigE X-Adapter card

Packet Microwave Adapter card

6-port SAR-M Ethernet module

7705 SAR-A

7705 SAR-Ax

7705 SAR-H

7705 SAR-Hc

7705 SAR-M

7705 SAR-Wx

7705 SAR-X

- 4-port SAR-H Fast Ethernet module

- Fast Ethernet ports on the 7705 SAR-A

MSS and LAG interaction on the 7705 SAR-8 Shelf V2 and 7705 SAR-18

A SAP that uses a LAG can include two or more ports from the same adapter card or two different adapter cards.

In the access egress direction, each port can be assigned a shaper policy for access and can have shaper groups configured with different shaping rates. If a shaper group is not defined, the default shaper group is used. The port egress shaper policy, when configured on a LAG, must be configured on the primary LAG member. This shaper policy is propagated to each of the LAG port members, ensuring that each LAG port member has the same shaper policy.

The following egress MSS restrictions ensure that both active and standby LAG members have the same configuration:

When a SAP is created using a LAG, the default shaper group is automatically assigned to the SAP, regardless of whether the LAG has any port members and regardless of whether the egress scheduler mode is 4-priority or 16-priority.

Shaper groups cannot be changed from the default if they are assigned to SAPs that use LAGs with no port members.

The last LAG port member cannot be deleted from a LAG that is used by any SAP that is assigned a non-default shaper group.

The shaper policy or shaper group is not checked when the first port is added as a member of a LAG. When a second port is added as a member of a LAG, it can only be added if the shaper policy on the second port is the same as the shaper policy on the first member port of the LAG.

The shaper group of a LAG SAP can be changed to a non-default shaper group only if the new shaper group exists in the shaper policy used by the active LAG port member.

A shaper group cannot be deleted if it is assigned to unshaped SAPs (unshaped-sap-shaper-group command) or if it is used by at least one LAG SAP or non-LAG SAP.

The shaper policy assigned to a port cannot be changed unless all of the SAPs on that port are assigned to the default shaper group.
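Some of the egress restrictions above can be expressed as simple checks. The following Python sketch is a hypothetical model, not router code: the function names, the policy names, and the data shapes are assumptions made for illustration.

```python
def can_add_egress_lag_member(member_policies, new_port_policy):
    """Return True if a port may join the LAG.

    member_policies: shaper policy names of the current LAG port members, in order.
    The first port is not checked; later ports must match the first member's policy.
    """
    if not member_policies:
        return True
    return new_port_policy == member_policies[0]

def can_set_egress_shaper_group(group, active_member_policy_groups):
    """Return True if a LAG SAP may move to a non-default shaper group.

    active_member_policy_groups: set of shaper groups in the shaper policy used by
    the active LAG port member, or None when the LAG has no port members.
    """
    if active_member_policy_groups is None:
        return False
    return group in active_member_policy_groups

# Hypothetical policy and group names.
first_port_ok = can_add_egress_lag_member([], "policy_gold")
same_policy_ok = can_add_egress_lag_member(["policy_gold"], "policy_gold")
other_policy_ok = can_add_egress_lag_member(["policy_gold"], "policy_silver")
group_ok = can_set_egress_shaper_group("cust_a", {"default", "cust_a"})
no_members_ok = can_set_egress_shaper_group("cust_a", None)
```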

In the ingress direction, there can be two different shaper policies on two different adapter cards for the two port members in a LAG. When assigning a shaper group to an ingress LAG SAP, each shaper policy assigned to the LAG port MDAs must contain that shaper group or the shaper group cannot be assigned. In addition, after a LAG activity switch occurs, the CIR/PIR configuration from the subgroup of the policy of the adapter card of the newly active member will be used.

The following ingress MSS restrictions allow the configuration of shaper groups for LAG SAPs, but the router ignores shaper groups that do not meet the restrictions:

When a SAP is created using a LAG, the default shaper group is automatically assigned to the SAP, regardless of whether the LAG has any port members and regardless of whether the ingress scheduler mode is 4-priority or 16-priority.

Shaper groups cannot be changed from the default if they are assigned to SAPs that use LAGs with no port members.

The last LAG port member cannot be deleted from a LAG that is used by any SAP that is assigned a non-default shaper group.

The shaper policy or shaper group is not checked when the first port is added as a member of a LAG. When a second port is added as a member of a LAG, all SAPs using the LAG are checked to ensure that any non-default shaper groups already configured on the SAPs are part of the shaper policy assigned to the adapter card of the second port. If the check fails, the port member is rejected.

The shaper group of a LAG SAP can be changed to a non-default shaper group only if the new shaper group exists in the shaper policies used by all adapter cards of all LAG port members.

A shaper group cannot be deleted if it is assigned to unshaped SAPs (unshaped-sap-shaper-group command) or if it is used by at least one LAG SAP or non-LAG SAP.

The shaper policy assigned to an adapter card cannot be changed unless all of the SAPs on that adapter card are assigned to the default shaper group.
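The ingress checks differ from the egress checks in that they look at the shaper policies of the adapter cards rather than of the ports. The following Python sketch models two of the ingress restrictions above; the function names, card identifiers, and group names are hypothetical.

```python
def can_set_ingress_shaper_group(group, card_policy_groups):
    """Return True if a LAG SAP may move to a non-default ingress shaper group.

    card_policy_groups: dict mapping each adapter card that hosts a LAG port member
    to the set of shaper groups in that card's shaper policy. An empty dict means
    the LAG has no port members, in which case the default group must be kept.
    """
    if not card_policy_groups:
        return False
    return all(group in groups for groups in card_policy_groups.values())

def can_add_ingress_lag_member(has_members, groups_in_use, new_card_groups):
    """Return True if a port may join the LAG.

    groups_in_use: non-default shaper groups already configured on SAPs using the LAG.
    new_card_groups: shaper groups in the policy of the new port's adapter card.
    The first port is not checked.
    """
    if not has_members:
        return True
    return groups_in_use <= new_card_groups

# Hypothetical adapter cards and shaper groups.
cards = {"mda_1": {"default", "cust_a"}, "mda_2": {"default", "cust_a", "cust_b"}}

shared_group_ok = can_set_ingress_shaper_group("cust_a", cards)
missing_group_ok = can_set_ingress_shaper_group("cust_b", cards)
second_port_ok = can_add_ingress_lag_member(True, {"cust_a"}, {"default", "cust_a"})
second_port_rejected = can_add_ingress_lag_member(True, {"cust_b"}, {"default", "cust_a"})
```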

QoS for hybrid ports on Gen-2 hardware

This section provides information about QoS for hybrid ports on Gen-2 adapter cards and platforms. For information about Gen-3 adapter cards and platforms, see QoS for Gen-3 adapter cards and platforms.

In the ingress direction of a hybrid port, traffic management behavior is the same as it is for access and network ports. See Access ingress and Network ingress.

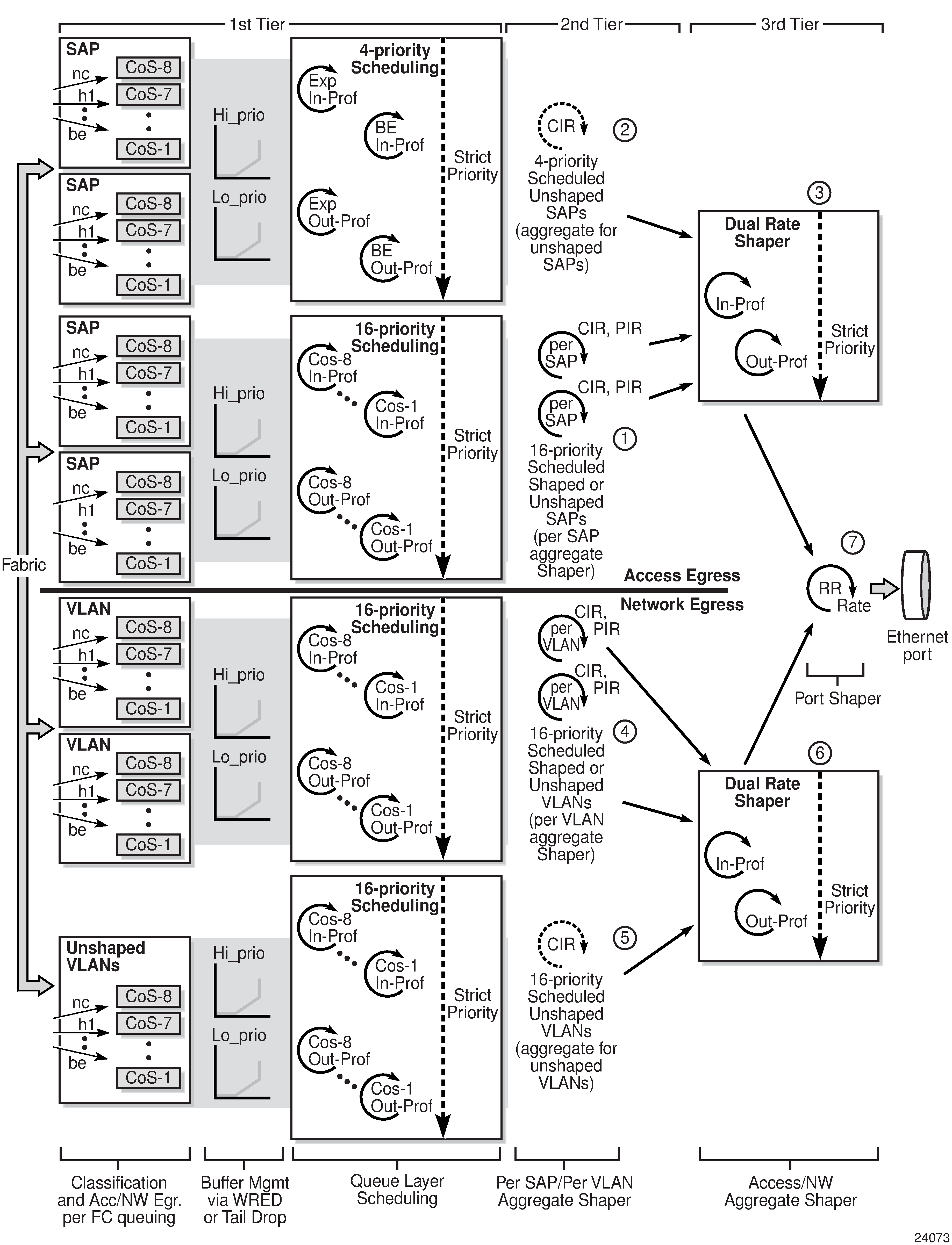

In the egress direction of a hybrid port, access and network aggregate shapers are used to arbitrate between the bulk (aggregate) of access and network traffic flows. As shown in Hybrid port egress shapers and schedulers on Gen-2 hardware, on the access side (above the solid line), both the access egress SAP aggregates (#1) and the unshaped SAP shaper (#2) feed into the access egress aggregate shaper (#3). On the network side (below the solid line), both the per-VLAN shapers (#4) and the unshaped interface shaper (#5) feed into the network egress aggregate shaper (#6). Then, the access and the network aggregate shapers are arbitrated in a dual-rate manner, in accordance with their respective configured committed and peak rates (#7). As a last step, the egress-rate for the port (when configured) applies backpressure to both the access and the network aggregate shapers, which apply backpressure all the way to the FC queues belonging to both access and network traffic.

As part of the hybrid port traffic management solution, access and network second-tier shapers are bound to access and network aggregate shapers, respectively. The hybrid port egress datapath can be visualized as access and network datapaths that coexist separately up until the access and network aggregate shapers at Tier 3 (#3 and #6).

In the figure, the top half is identical to access egress traffic management, where CoS queues (Tier 1) feed into either per-SAP shapers for shaped SAPs (#1) or a single second-tier shaper for all unshaped SAPs (#2). Up to the end of the second-tier, per-SAP aggregate shapers, the access egress datapath is maintained in the same manner as an Ethernet port in access mode. The same logic applies for network egress. The bottom half of the figure shows the datapath from the CoS queues to the per-VLAN shapers, which is identical to the datapath for any other Ethernet port in network mode.

The main difference between hybrid mode and access and network modes is shown when the access and the network traffic is arbitrated toward the port (Tier 3). At this point, a new set of dual-rate shapers (called shaper groups) are introduced: one shaper for the aggregate (bulk) of the access traffic (#3) and another shaper for the aggregate of the network traffic (#6), to ensure rate-based arbitration among access and network traffic.
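Dual-rate arbitration between the access and network aggregate shapers can be sketched as a two-pass allocation: committed rates are honored first, and any remaining port bandwidth is then handed out up to each shaper's peak rate. The Python sketch below is a simplified model; the rates, the demands, and the rule that leftover bandwidth is split equally are assumptions, because the text above does not state how the excess is shared.

```python
def dual_rate_arbitrate(port_rate, shapers):
    """Allocate port bandwidth between aggregate shapers using CIR, then PIR.

    shapers: dict name -> {"cir": ..., "pir": ..., "demand": ...} (same unit as port_rate).
    """
    grants = {}
    budget = port_rate

    # Pass 1: grant each shaper up to its committed rate.
    for name, s in shapers.items():
        grant = min(s["demand"], s["cir"], budget)
        grants[name] = grant
        budget -= grant

    # Pass 2: share what is left equally (assumption) up to each shaper's peak rate.
    while budget > 1e-9:
        hungry = [n for n, s in shapers.items()
                  if min(s["demand"], s["pir"]) - grants[n] > 1e-9]
        if not hungry:
            break
        share = budget / len(hungry)
        for n in hungry:
            ceiling = min(shapers[n]["demand"], shapers[n]["pir"])
            extra = min(share, ceiling - grants[n])
            grants[n] += extra
            budget -= extra
    return grants

# Hypothetical hybrid port, all values in Mb/s.
grants = dual_rate_arbitrate(100, {
    "access":  {"cir": 30, "pir": 80,  "demand": 90},
    "network": {"cir": 40, "pir": 100, "demand": 50},
})
print(grants)
```

With these example values, both aggregates first receive their committed rates (30 and 40), and the remaining 30 Mb/s is then divided until the network demand is met and the port rate is exhausted.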

Depending on the use and the application, the committed rate for any one mode of flow may need to be fine-tuned to minimize delay, jitter and loss. In addition, through the use of egress-rate limiting, a fourth level of shaping can be achieved.

When egress-rate is configured (under config>port>ethernet), the following events occur:

egress-rate applies backpressure to the access and network aggregate shapers

as a result, the aggregate shapers apply backpressure to the per-SAP and per-VLAN aggregate shapers

access aggregate shapers apply backpressure to the per-SAP aggregate shapers and the unshaped SAP aggregate shaper

network aggregate shapers apply backpressure to the per-VLAN aggregate shapers and the unshaped VLAN aggregate shaper

as a result, the per-SAP and per-VLAN aggregate shapers apply backpressure to their respective CoS queues

QoS for Gen-3 adapter cards and platforms

Third-generation (Gen-3) Ethernet adapter cards and Ethernet ports on Gen-3 platforms support 4-priority scheduling.

The main differences between Gen-3 hardware and Gen-2 hardware are that on Gen-3 hardware:

SAPs are shaped (that is, no unshaped SAPs)

SAPs and VLANs are shaped by 4-priority schedulers, not 16-priority schedulers

4-priority scheduling is done on a per-SAP basis

backpressure is applied according to relative priority across VLANs and interfaces. That is, scheduling is carried out in priority order, ignoring per-VLAN and per-interface boundaries. Conforming, expedited traffic across all queues is scheduled regardless of the VLAN boundaries. After all the conforming, expedited traffic across all queues has been serviced, the servicing of conforming, best effort traffic begins.
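The last point in the list above, scheduling in priority order while ignoring VLAN and interface boundaries, can be sketched as a sort on priority class alone. The Python sketch below is illustrative; the queue names and VLAN IDs are hypothetical, and the relative order of the two non-conforming classes is an assumption that the text above does not state.

```python
# Priority classes from highest to lowest. The first two follow the text above;
# the order of the two non-conforming classes is an assumption.
PRIORITY_ORDER = [
    ("conforming", "expedited"),
    ("conforming", "best_effort"),
    ("non_conforming", "expedited"),
    ("non_conforming", "best_effort"),
]

def service_order(queues):
    """Order queues by priority class only; the VLAN of a queue plays no role."""
    rank = {key: i for i, key in enumerate(PRIORITY_ORDER)}
    return sorted(queues, key=lambda q: rank[(q["profile"], q["type"])])

# Hypothetical queues spread over two VLANs.
queues = [
    {"vlan": 10, "name": "q_be_10", "profile": "conforming", "type": "best_effort"},
    {"vlan": 20, "name": "q_exp_20", "profile": "conforming", "type": "expedited"},
    {"vlan": 10, "name": "q_exp_10", "profile": "conforming", "type": "expedited"},
    {"vlan": 20, "name": "q_be_20_out", "profile": "non_conforming", "type": "best_effort"},
]

order = [q["name"] for q in service_order(queues)]
print(order)
```

The conforming expedited queues of both VLANs are served before any conforming best-effort queue, regardless of which VLAN each queue belongs to.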

See Scheduling modes for a summary of scheduler mode support. For information about adapter card generations, see the ‟Evolution of Ethernet Adapter Cards, Modules, and Platforms” section in the 7705 SAR Interface Configuration Guide.

The following table describes the access, network, and hybrid port scheduling behavior for Gen-3 hardware and compares it with the scheduling behavior of Gen-2 hardware.

| Port type | | Gen-3 hardware with 4-priority mode | Gen-2 hardware with 4-priority mode |
|---|---|---|---|
| Access | Within a SAP | EXP over BE | EXP over BE |
| | Default configuration | Simple round-robin (RR) scheduling among SAPs | EXP (across all queues, no SAP boundaries) over BE |
| | H-QoS and MSS aggregate shapers | RR among aggregates based on PIR and CIR (SAP at tier 2, MSS at tier 3) | N/A |
| Network | Default configuration (8 queues per port) | EXP over BE | EXP over BE |
| | Per-VLAN shaper | EXP over BE | RR among VLAN shapers based on PIR and CIR |
| Hybrid | Default configuration (8 queues per port) | EXP over BE | EXP (across all SAPs and network queues) over BE |
| | Per-VLAN shaper | RR among VLAN shapers based on PIR and CIR | RR among VLAN shapers based on PIR and CIR |

In summary, the following updates to Gen-3 scheduling are implemented:

- enabled CIR-based shaping for:
  - per-SAP aggregate ingress and egress shapers
  - per-customer aggregate ingress and egress shapers
  - per-VLAN shaper at network egress when port is in hybrid mode
  - access and network aggregate shapers for hybrid ports

- disabled backpressure to the FC queues dependent on the relative priority among all VLAN-bound IP interfaces at:
  - access ingress and access egress when port is in access mode
  - access ingress and access egress when port is in hybrid mode
  - network ingress
  - network egress when port is in hybrid mode

6-port SAR-M Ethernet module

The egress datapath shapers on a 6-port SAR-M Ethernet module operate on the same frame size as any other shaper. These egress datapath shapers are:

- per-queue shapers

- per-SAP aggregate shapers

- per-customer aggregate (MSS) shapers

The egress port shaper on a 6-port SAR-M Ethernet module does not account for the 4-byte FCS. Packet byte offset can be used to make adjustments to match the desired operational rate or eliminate the implications of FCS. See Packet byte offset for more information.
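The effect of the uncounted FCS can be estimated with simple arithmetic. The Python sketch below assumes, as one reading of the statement above, that the port shaper counts each frame as 4 bytes shorter than its length including the FCS; the frame sizes, the rate, and the function name are hypothetical, and the sketch does not model the packet byte offset feature itself.

```python
FCS_BYTES = 4  # Ethernet frame check sequence length

def rate_including_fcs(shaper_rate_bps, frame_bytes_with_fcs):
    """Estimate the rate on the link, FCS included, for a given shaper rate.

    Assumption: the shaper counts (frame_bytes_with_fcs - 4) bytes per frame.
    """
    counted_bytes = frame_bytes_with_fcs - FCS_BYTES
    return shaper_rate_bps * frame_bytes_with_fcs / counted_bytes

# Hypothetical shaper rate of 60 Mb/s with small and large frames.
small_frames = rate_including_fcs(60_000_000, 64)
large_frames = rate_including_fcs(60_000_000, 1518)

print(small_frames)   # small frames: the FCS is a larger share of each frame
print(large_frames)   # large frames: the difference is well under 1 percent
```

Under this assumption, the gap between the configured rate and the rate seen on the link grows as frames get smaller, which is why an adjustment such as packet byte offset matters most for traffic dominated by small packets.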

4-priority scheduling behavior on Gen-3 hardware

- 4-priority scheduling at access ingress (Gen-3 hardware) (access ingress)

- 4-priority scheduling at access egress (Gen-3 hardware) (access egress)

- 4-priority scheduling at network ingress (Gen-3 hardware): per-destination mode (network ingress, destination mode)

- 4-priority scheduling at network ingress (Gen-3 hardware): aggregate mode (network ingress, aggregate mode)

For network egress traffic through a network port on Gen-3 hardware, the behavior of 4-priority scheduling is as follows: traffic priority is determined at the queue-level scheduler, which is based on the queue PIR and CIR and the queue type. The queue-level priority is carried through the various shaping stages and is used by the 4-priority Gen-3 VLAN scheduler at network egress. See 4-priority scheduling at network egress (Gen-3 hardware) on a network port and its accompanying description.

For hybrid ports, both access and network egress traffic use 4-priority scheduling that is similar to 4-priority scheduling on Gen-2 hardware. See 4-priority scheduling for hybrid port egress (Gen-3 hardware) and its accompanying description.

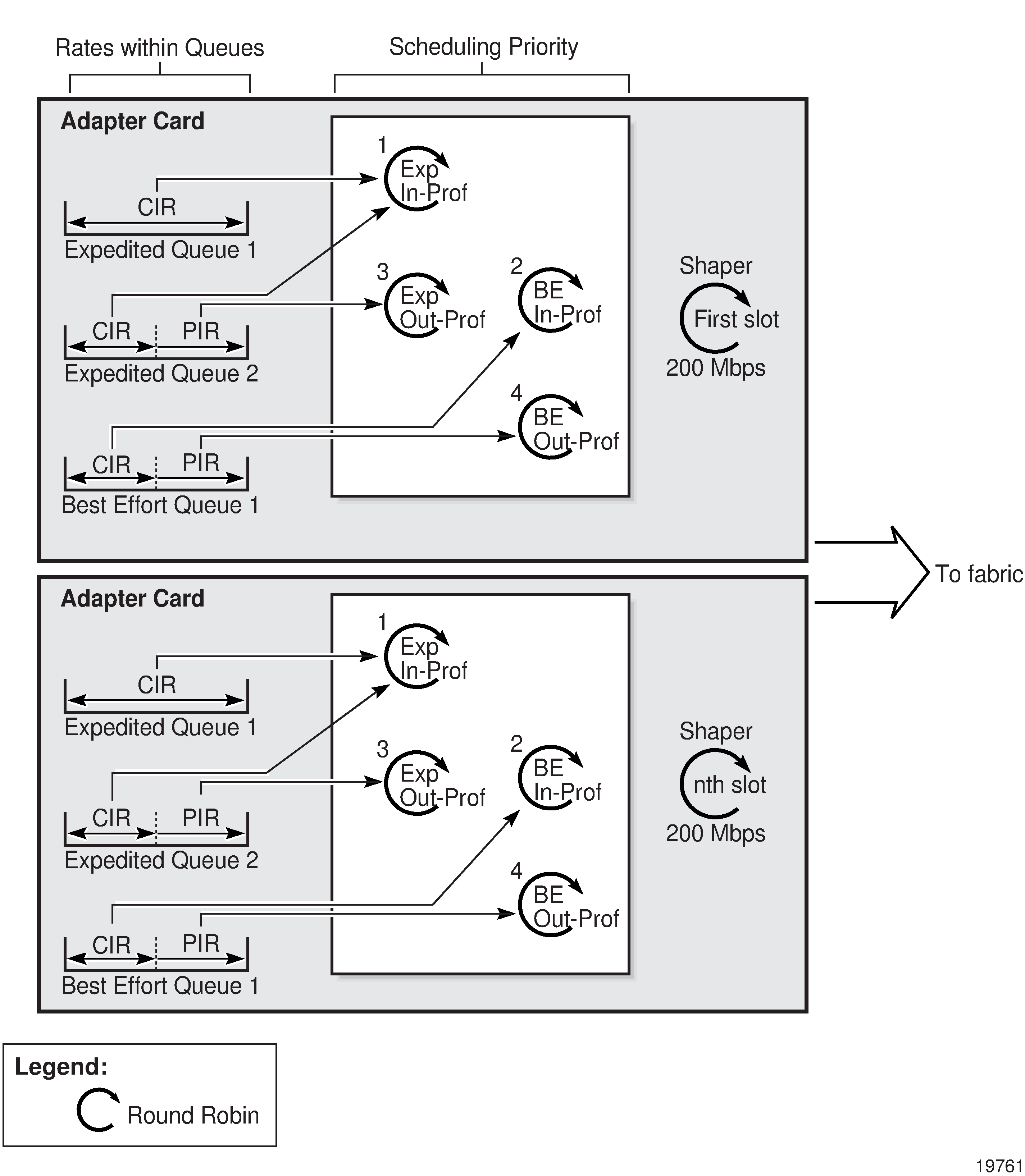

In the following figure, the shaper groups all belong within one shaper policy and only one shaper policy is assigned to an ingress adapter card. Each SAP can be associated with one shaper group. Multiple SAPs can be associated with the same shaper group. All the access ingress traffic flows to the access ingress fabric shaper, in-profile (conforming) traffic first, then out-of-profile (non-conforming) traffic. Network ingress traffic functions similarly.

The 4-priority schedulers on Gen-2 and Gen-3 hardware are very similar, except that 4-priority scheduling on Gen-3 hardware is done on a per-SAP basis.

The following figure shows 4-priority scheduling for access egress on Gen-3 hardware. QoS behavior for access egress is similar to QoS behavior for access ingress.


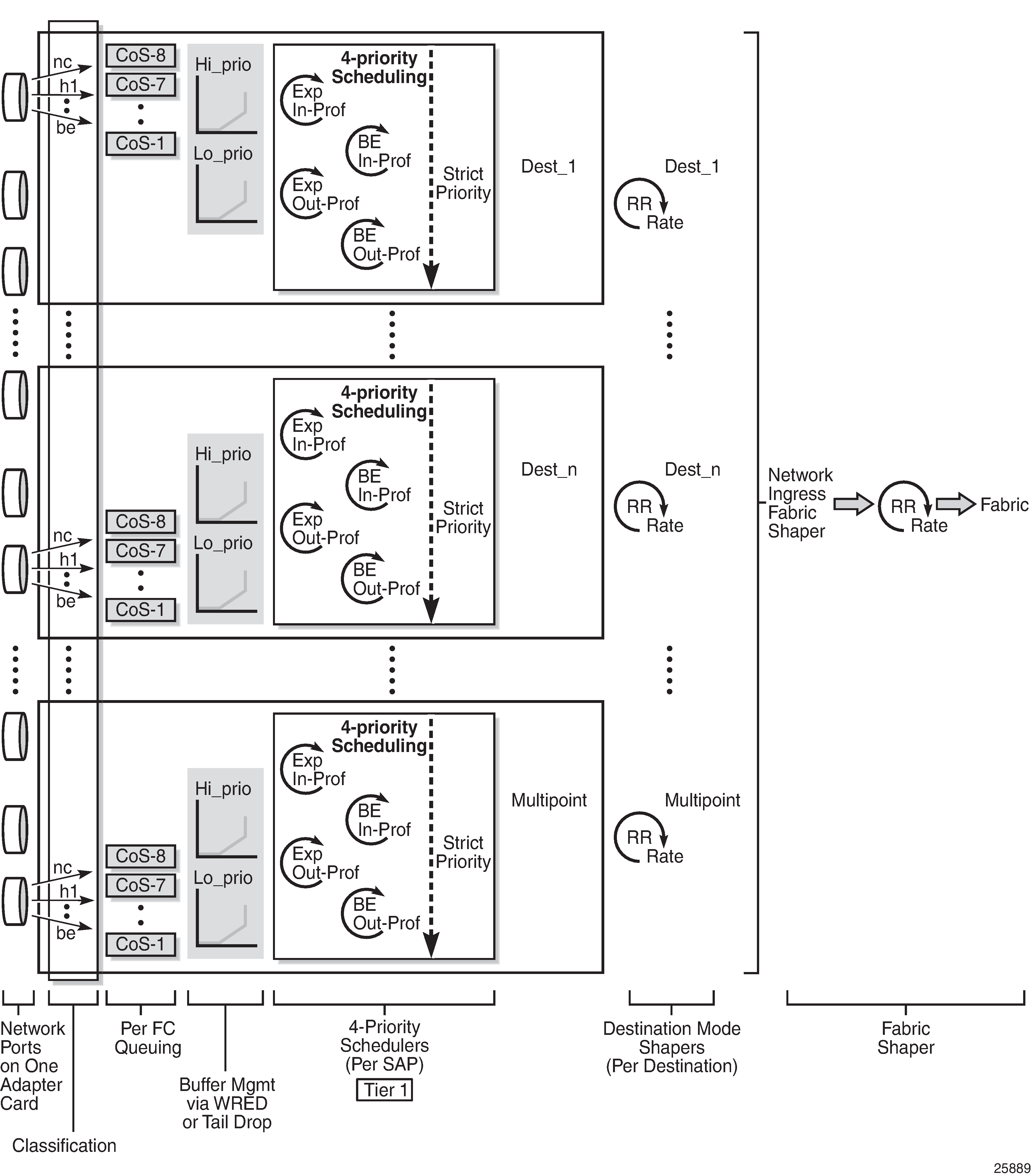

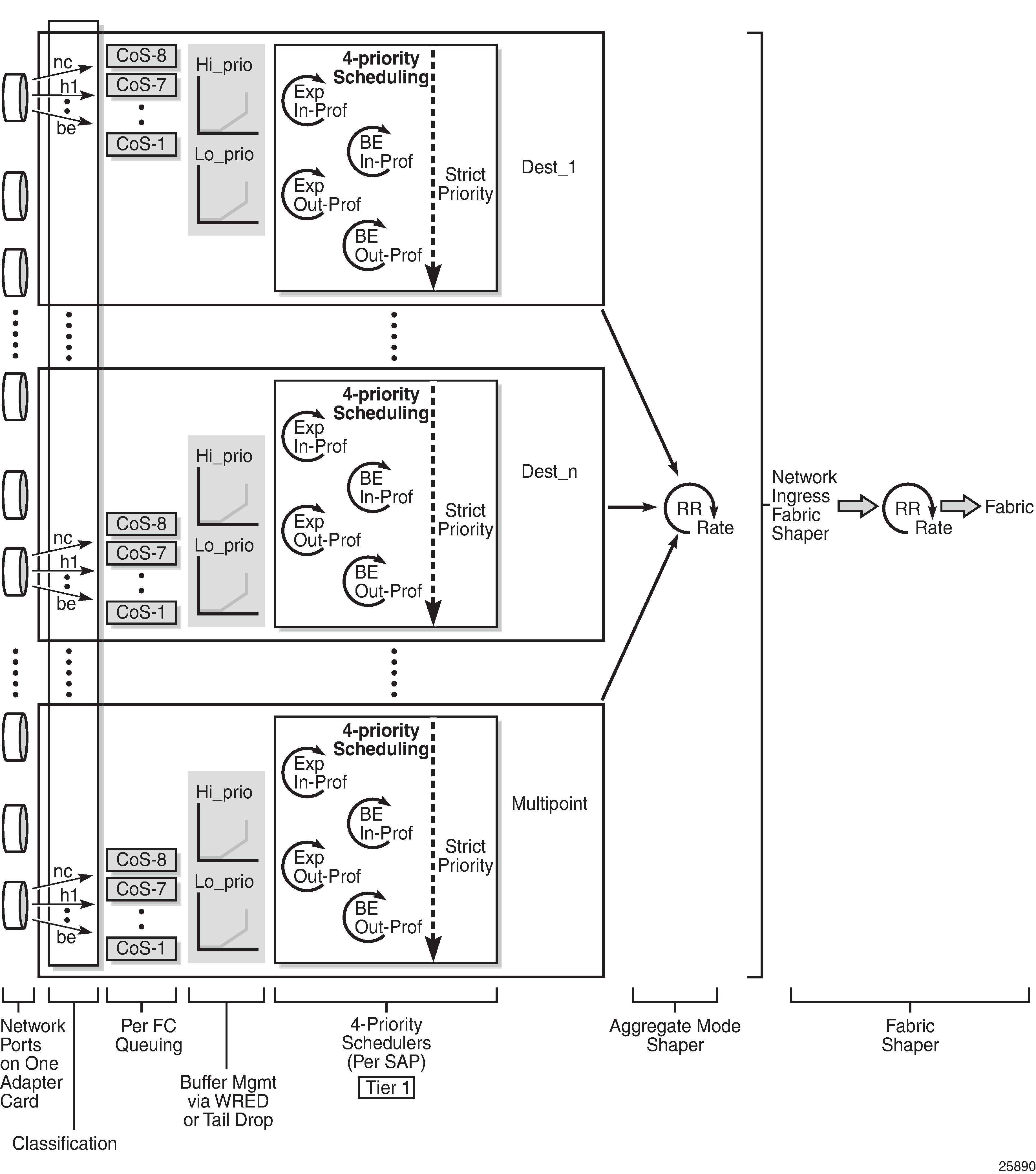

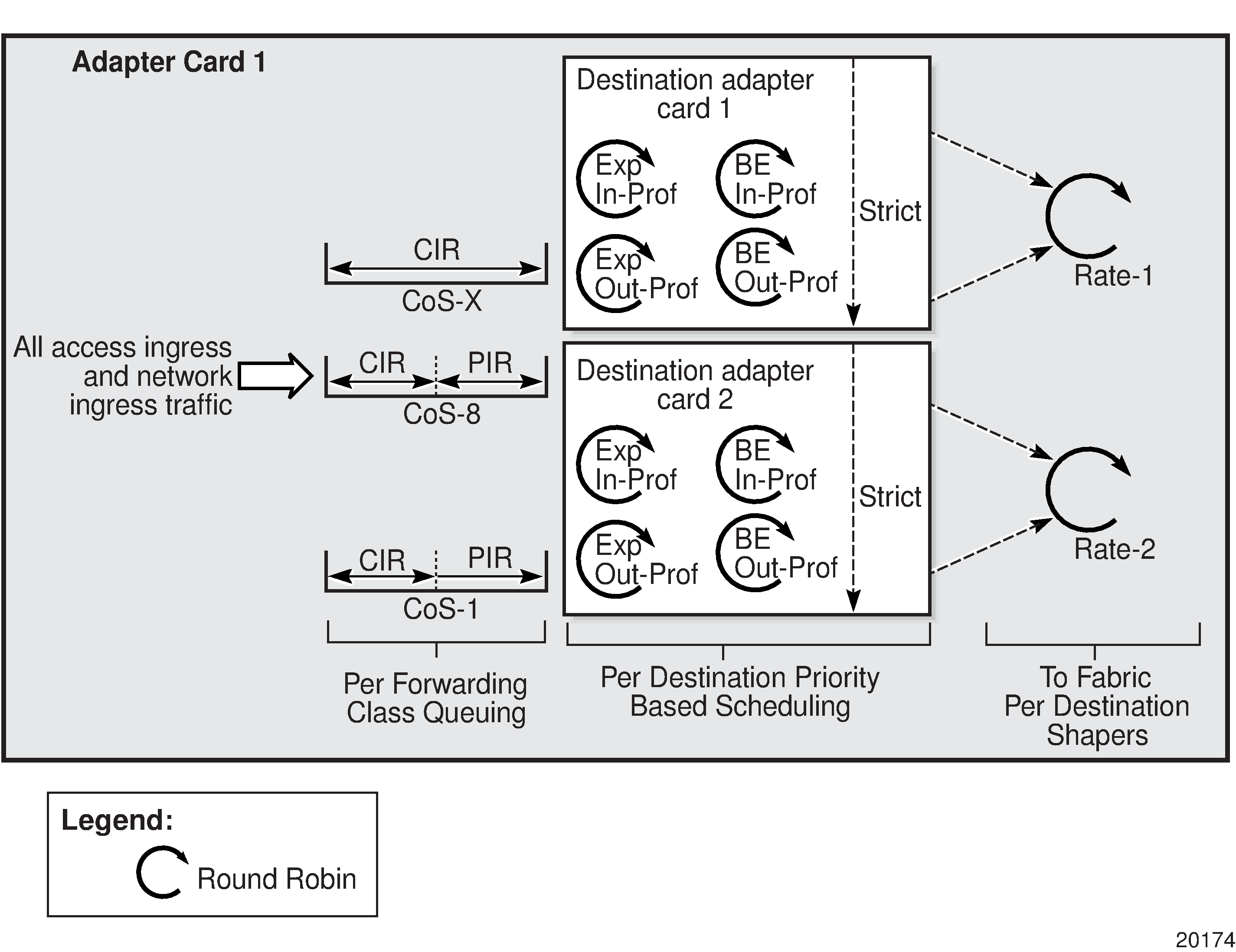

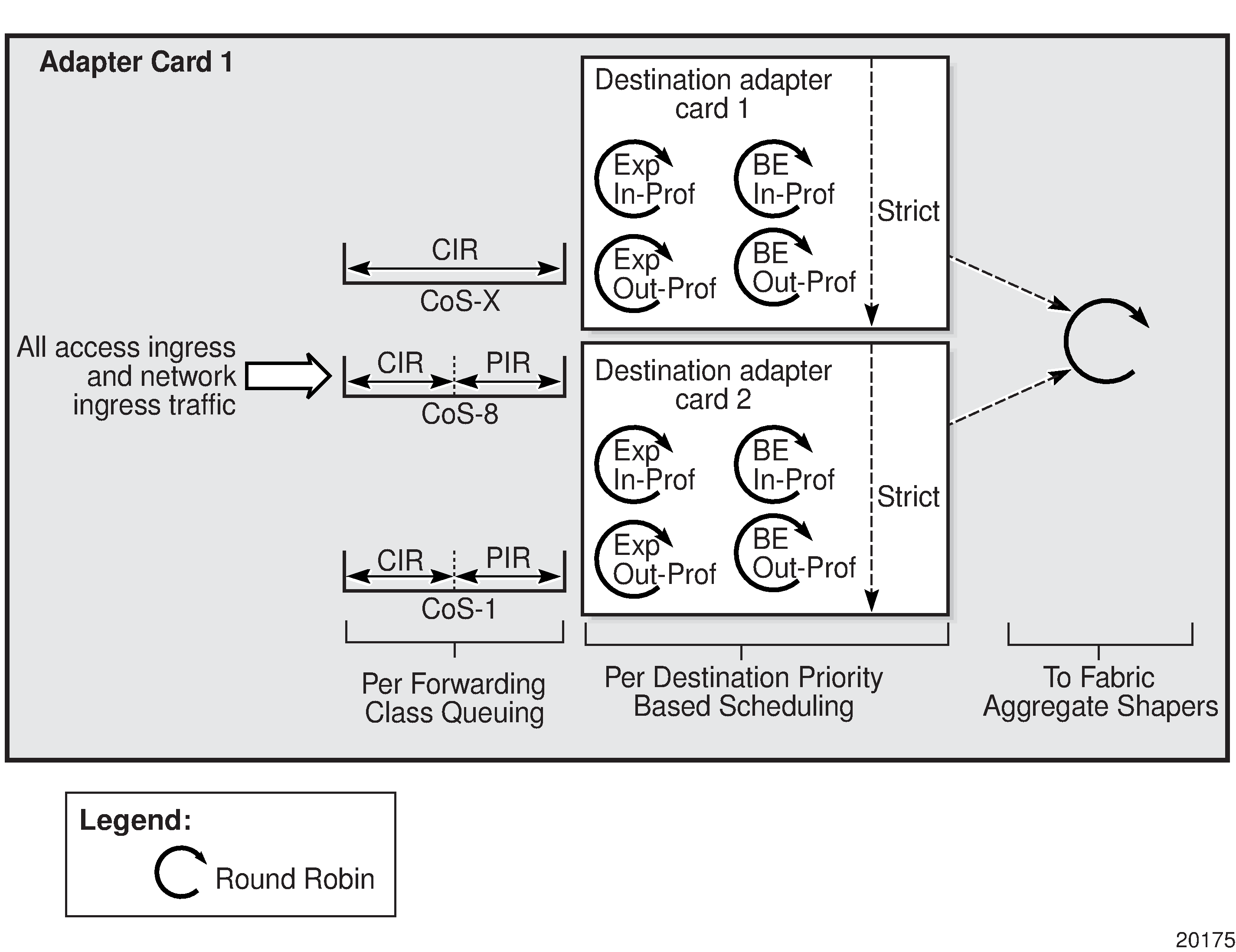

4-priority scheduling at network ingress (Gen-3 hardware): per-destination mode and 4-priority scheduling at network ingress (Gen-3 hardware): aggregate mode show network ingress scheduling for per-destination and aggregate modes, which are configured under the fabric-profile command. Traffic arriving on a network port is examined for its destination MDA and directed to the QoS block that sends traffic to the appropriate MDA. There is one set of queues for each block, and an additional set for multipoint traffic.

In the following figure, there is one per-destination shaper for each destination MDA.

In the following figure, there is a single shaper to handle all the traffic.

The following figure shows 4-priority scheduling for Gen-3 hardware at network egress. Queue-level CIR and PIR values and the queue type are determined at queuing and provide the scheduling priority for a specific flow across all shapers toward the egress port (#1 in the figure). At the per-VLAN aggregate level (#2), only a single rate—the total aggregate rate (PIR)—can be configured; CIR configuration is not supported at the per-VLAN aggregate-shaper level for network egress traffic. All VLANs are aggregated and scheduled by a 4-priority aggregate scheduler (#3). The flow is then fed to the port shaper and processed at the egress rate. In case of congestion, the port shaper provides backpressure, resulting in the buffering of traffic by individual FC queues until the congested state ends.

The following figure shows 4-priority scheduling for Gen-3 hardware where ports are in hybrid mode. The QoS behavior for both access and network egress traffic is similar except that the access egress path includes tier 3, per-customer aggregate shapers. Access and network shapers prepare and present traffic to the port shaper, which arbitrates between access and network traffic.

The 4-priority schedulers on the Gen-2 and Gen-3 hardware are very similar, except that 4-priority scheduling on Gen-3 hardware is done on a per-SAP or a per-VLAN basis (for access egress and network egress, respectively).

Gen-3 hardware and LAG

When a Gen-3-based port and a Gen-2-based port are attached to a LAG SAP (also referred to as mix-and-match LAG), configuring scheduler mode for the LAG SAP is required because it is used by Gen-2 ports, but it is ignored by the Gen-3 port.

For more information, see the ‟LAG Support on Third-Generation Ethernet Adapter Cards, Ports, and Platforms” section in the 7705 SAR Interface Configuration Guide.

QoS on a ring adapter card or module

This section contains overview information as well as information about the following topics:

Network QoS and network queue policies on a ring adapter card or module

Considerations for using ring adapter card or module QoS policies

The following figure shows a simplified diagram of the ports on a 2-port 10GigE (Ethernet) Adapter card (also known as a ring adapter card). The ports can also be conceptualized the same way for a 2-port 10GigE (Ethernet) module. A ring adapter card or module has physical Ethernet ports used for Ethernet bridging in a ring network (labeled Port 1 and Port 2 in the figure). These ports are referred to as the ring ports because they connect to the Ethernet ring. The ring ports operate on the Layer 2 bridging domain side of the ring adapter card or module, as does the add/drop port, which is an internal port on the card or module.

On the Layer 3 IP domain side of a ring adapter card or module, there is a virtual port (v-port) and a fabric port. The v-port is also an internal port. Its function is to help control traffic on the IP domain side of a ring adapter card or module.

To manage ring and add/drop traffic mapping to queues in the Layer 2 bridging domain, a ring type network QoS policy can be configured for the ring at the adapter card level (under the config>card>mda context). To manage ring and add/drop traffic queuing and scheduling in the Layer 2 bridging domain, network queue QoS policies can be configured for the ring ports and the add-drop port.

To manage add/drop traffic classification and remarking in the Layer 3 IP domain, IP-interface type network QoS policies can be configured for router interfaces on the v-port. To manage add/drop traffic queuing and scheduling in the Layer 3 IP domain, network queue QoS policies can be configured for the v-port and at network ingress at the adapter card level (under the config>card>mda context).

Network and network queue QoS policy types

All ports on a ring adapter card or module are possible congestion points and therefore can have network queue QoS policies applied to them.

In the bridging domain, a single ring type network QoS policy can be applied at the adapter card level and operates on the ring ports and the add/drop port. In the IP domain, IP interface type network QoS policies can be applied to router interfaces.

Network QoS policies are created using the config>qos>network command, which includes the network-policy-type keyword to specify the type of policy:

ring (for bridging domain policies)

ip-interface (for network ingress and network egress IP domain policies)

default (for setting the policy to policy 1, the system default policy)

When the policy has been created, its default action and classification mapping can be configured.

Network QoS and network queue policies on a ring adapter card or module

Network QoS policies are applied to the ring ports and the add/drop port using the qos-policy command found under the config>card>mda context. These ports are not explicitly specified in the command.

Network queue QoS policies are applied to the ring ports and the v-port using the queue-policy command found under the config>port context. Similarly, a network queue policy is applied to the add/drop port using the add-drop-port-queue-policy command, found under the config>card>mda context. The add/drop port is not explicitly specified in this command.

The CLI commands for applying QoS policies are listed in this guide. The CLI command descriptions are in the 7705 SAR Interface Configuration Guide.

Considerations for using ring adapter card or module QoS policies

The following notes apply to configuring and applying QoS policies to a ring adapter card or module, as well as other adapter cards:

The ring ports and the add/drop port cannot use a network queue policy that is being used by the v-port or the network ingress port or any other port on other cards, and vice versa. This does not apply to the default network queue policy.

If a network-queue policy is assigned to a ring port or the add/drop port, all queues that are configured in the network-queue policy are created regardless of any FC mapping to the queues. All FC queue mapping in the network-queue policy is meaningless and is ignored.

If the QoS policy has a dot1p value mapped to a queue that is not configured in the network-queue policy, the traffic of this dot1p value is sent to queue 1.

If a dot1p-to-queue mapping is defined in a network QoS policy, and if the queue is not configured on any ring port or the add/drop port, all traffic received from a port will be sent out from queue 1 on the other two ports. For example, if traffic is received on port 1, it will be sent out on port 2 and/or the add/drop port.

Upon provisioning an MDA slot for a ring adapter card or module (config>card>mda>mda-type), an additional eight network ingress queues are allocated to account for the network queues needed for the add/drop port. When the ring adapter card or module is deprovisioned, the eight queues are deallocated.

The number of ingress network queues is shown by using the tools>dump>system-resource command.
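The queue 1 fallback described in the notes above amounts to a lookup with a default. The Python sketch below is a hypothetical model: the mapping values and the function name are illustrative, and sending traffic with an unmapped dot1p value to queue 1 is an assumption that the notes do not state.

```python
DEFAULT_QUEUE = 1

def select_queue(dot1p, dot1p_to_queue, configured_queues):
    """Pick the egress queue for a frame on a ring port or the add/drop port.

    dot1p_to_queue: mapping taken from the network QoS policy.
    configured_queues: queues that exist in the network-queue policy.
    A mapped queue that is not configured falls back to queue 1.
    """
    # Assumption: a dot1p value with no mapping also uses queue 1.
    queue = dot1p_to_queue.get(dot1p, DEFAULT_QUEUE)
    return queue if queue in configured_queues else DEFAULT_QUEUE

# Hypothetical policy contents.
dot1p_to_queue = {0: 1, 5: 3, 7: 8}
configured_queues = {1, 2, 3}

mapped = select_queue(5, dot1p_to_queue, configured_queues)
not_configured = select_queue(7, dot1p_to_queue, configured_queues)
print(mapped, not_configured)
```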

QoS for IPSec traffic

For specific information about QoS for IPSec traffic, see the ‟QoS” section in the ‟IPSec” chapter in the 7705 SAR Services Guide.

QoS for network group encryption traffic

The 7705 SAR provides priority and scheduling for traffic into the encryption and decryption engines on nodes that support network group encryption (NGE). This applies to traffic at network ingress or network egress.

For specific information, see the ‟QoS for NGE Traffic” section in the ‟Network Group Encryption” chapter in the 7705 SAR Services Guide.

Access ingress

This section contains the following topics for traffic flow in the access ingress direction:

Access ingress traffic classification

Traffic classification identifies a traffic flow and maps the packets belonging to that flow to a preset forwarding class, so that the flow can receive the required special treatment. Up to eight forwarding classes are supported for traffic classification. See Default forwarding classes for a list of these forwarding classes.

For TDM channel groups, all of the traffic is mapped to a single forwarding class. Similarly, for ATM VCs, each VC is linked to one forwarding class. On Ethernet ports and VLANs, up to eight forwarding classes can be configured based on 802.1p (dot1p) bits or DSCP bits classification. On PPP/MLPPP, FR (for Ipipes), or cHDLC SAPs, up to eight forwarding classes can be configured based on DSCP bits classification. FR (for Fpipes) and HDLC SAPs are mapped to one forwarding class.

If an Ethernet port is set to null encapsulation, the dot1p value has no meaning and cannot be used for classification purposes.

If a port or SAP is set to qinq encapsulation, use the match-qinq-dot1p top | bottom command to indicate which qtag contains the dot1p bits that are used for classification purposes. The match-qinq-dot1p command is found under the config>service context. See the 7705 SAR Services Guide, ‟VLL Services Command Reference”, for details.

After the classification takes place, forwarding classes are mapped to queues as described in the sections that follow.

Traffic classification types

The various traffic classification methods used on the 7705 SAR are described in the following table. A list of classification rules follows the table.

| Traffic classification based on... | Description |
|---|---|
| a channel group (n ✕ DS0) | Applies to 16-port T1/E1 ASAP Adapter card and 32-port T1/E1 ASAP Adapter card ports, 2-port OC3/STM1 Channelized Adapter card ports, 12-port Serial Data Interface card ports, 4-port T1/E1 and RS-232 Combination module ports, and 6-port E&M Adapter card ports in structured or unstructured circuit emulation mode. In this mode, a number of DS0s are transported within the payload of the same Circuit Emulation over Packet Switched Networks (CESoPSN) packet, Circuit Emulation over Ethernet (CESoETH) packet, or Structure-Agnostic TDM over Packet (SAToP) packet. Therefore, the timeslots transporting the same type of traffic are classified all at once. |
| an ATM VCI | On ATM-configured ports, any virtual connection regardless of service category is mapped to the configured forwarding class. One-to-one mapping is the only supported option. VP- or VC-based classifications are both supported. A VC with a specified VPI and VCI is mapped to the configured forwarding class. A VP connection with a specified VPI is mapped to the configured forwarding class. |
| an ATM service category | Similar ATM service categories can be mapped against the same forwarding class. Traffic from a given VC with a specified service category is mapped to the configured forwarding class. VC selection is based on the ATM VC identifier. |
| an Ethernet port | All the traffic from an access ingress Ethernet port is mapped to the selected forwarding class. More granular classification can be performed based on dot1p or DSCP bits of the incoming packets. Classification rules applied to traffic flows on Ethernet ports function in the same way as access/filter lists. There can be multiple tiers of classification rules associated with an Ethernet port. In this case, classification is performed based on priority of classifier. The order of the priorities is described in Hierarchy of classification rules. |
| an Ethernet VLAN (dot1q or qinq) | Traffic from an access Ethernet VLAN (dot1q or qinq) interface can be mapped to a forwarding class. Each VLAN can be mapped to one forwarding class. |
| IEEE 802.1p bits (dot1p) | The dot1p bits in the Ethernet/VLAN ingress packet headers are used to map the traffic to up to eight forwarding classes. |
| PPP/MLPPP, FR (for Ipipes), and cHDLC SAPs | Traffic from an access ingress SAP is mapped to the selected forwarding class. More granular classification can be performed based on DSCP bits of the incoming packets. |
| FR (for Fpipes) and HDLC SAPs | Traffic from an access ingress SAP is mapped to the selected (default) forwarding class. |
| DSCP bits | When the Ethernet payload is IP, ingress traffic can be mapped to a maximum of eight forwarding classes based on DSCP bit values. DSCP-based classification supports untagged, single-tagged, double-tagged, and triple-tagged Ethernet frames. If an ingress frame has more than three VLAN tags, then dot1q or qinq dot1p-based classification must be used. |
| Multi-field classifiers | Traffic is classified based on any IP criteria currently supported by the 7705 SAR filter policies; for example, source and destination IP address, source and destination port, whether or not the packet is fragmented, ICMP code, and TCP state. For information about multi-field classification, see the 7705 SAR Router Configuration Guide, ‟Multi-field Classification (MFC)” and ‟IP, MAC, and VLAN Filter Entry Commands”. |

Hierarchy of classification rules

The following table shows classification options for various access entities (SAP identifiers) and service types. For example, traffic from a TDM port using a TDM (Cpipe) PW maps to one FC (all traffic has the same CoS). Traffic from an Ethernet port using an Epipe PW can be classified to as many as eight FCs based on DSCP classification rules, while traffic from a SAP with dot1q or qinq encapsulation can be classified to up to eight FCs based on dot1p or DSCP rules.

For Ethernet traffic, dot1p-based classification for dot1q or QinQ SAPs takes precedence over DSCP-based classification. For null-encapsulated Ethernet ports, only DSCP-based classification applies. In either case, when defining classification rules, a more specific match rule is always preferred to a general match rule.

For more information about hierarchy rules, see Forwarding class and enqueuing priority classification hierarchy based on rule type in the Service ingress QoS policies section.

| Access type (SAP) | Service type | | | | | | | |
|---|---|---|---|---|---|---|---|---|
| | TDM PW | ATM PW | FR PW | HDLC PW | Ethernet PW | IP PW | VPLS | VPRN |
| TDM port | 1 FC | | | | | | | |
| Channel group | 1 FC | | | | | | | |
| ATM virtual connection identifier | | 1 FC | | | | | 1 FC | |
| FR | | | 1 FC | | | DSCP, up to 8 FCs | | |
| HDLC | | | | 1 FC | | | | |
| PPP / MLPPP | | | | | | DSCP, up to 8 FCs | | DSCP, up to 8 FCs |
| cHDLC | | | | | | DSCP, up to 8 FCs | | |
| Ethernet port | | | | | DSCP, up to 8 FCs | DSCP, up to 8 FCs | DSCP, up to 8 FCs | DSCP, up to 8 FCs |
| Dot1q encapsulation | | | | | Dot1p or DSCP, up to 8 FCs | Dot1p or DSCP, up to 8 FCs | Dot1p or DSCP, up to 8 FCs | Dot1p or DSCP, up to 8 FCs |
| QinQ encapsulation | | | | | Dot1p or DSCP, up to 8 FCs | Dot1p or DSCP, up to 8 FCs | Dot1p or DSCP, up to 8 FCs | Dot1p or DSCP, up to 8 FCs |
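The precedence between dot1p-based and DSCP-based classification described in this section can be sketched as follows. This is an illustrative model only, not 7705 SAR code: the function name `classify`, its parameters, the map contents, and the `default_fc` fallback are all hypothetical.

```python
from typing import Dict, Optional


def classify(encap: str,
             dot1p: Optional[int],
             dscp: Optional[str],
             dot1p_map: Dict[int, str],
             dscp_map: Dict[str, str],
             default_fc: str = "be") -> str:
    """Return a forwarding class for one ingress Ethernet frame (sketch)."""
    # dot1p rules are evaluated only on tagged (dot1q or qinq) SAPs,
    # where they take precedence over DSCP rules.
    if encap in ("dot1q", "qinq") and dot1p in dot1p_map:
        return dot1p_map[dot1p]
    # DSCP rules apply when the payload is IP; on null-encapsulated
    # ports they are the only granular rules available.
    if dscp is not None and dscp in dscp_map:
        return dscp_map[dscp]
    # No specific rule matched: fall back to the general (default) FC.
    return default_fc
```

With a hypothetical `dot1p_map = {5: "ef"}` and `dscp_map = {"af11": "af"}`, a dot1q frame carrying dot1p 5 and DSCP af11 would map to `ef`, while the same frame on a null-encapsulated port would map to `af`.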

Discard probability of classified traffic

When traffic is mapped to a forwarding class, the discard probability for the traffic can be configured as high or low priority at ingress. Once the traffic is further classified as high or low priority, different congestion management schemes can be applied based on this priority. For example, WRED curves can then be run against the high-priority and low-priority traffic separately, as described in Slope policies (WRED and RED).

This ability to specify the discard probability is very significant because it controls the amount of traffic that is discarded under congestion or high usage. If you know the characteristics of your traffic, particularly the burst characteristics, the ability to change the discard probability can be used to great advantage. The objective is to customize the properties of the random discard functionality such that the minimal amount of data is discarded.

Access ingress queues

After the traffic is classified to different forwarding classes, the next step is to create the ingress queues and bind forwarding classes to these queues.

A forwarding class and a queue do not have to be associated one-to-one; more than one forwarding class can be mapped to the same queue. This capability allows an aggregate amount of resources to be allocated to traffic flows of a similar nature. For example, in the case of 3G UMTS services, HSDPA and OAM traffic are both considered BE in nature. However, HSDPA traffic can be mapped to a better forwarding class (such as L2) while OAM traffic remains mapped to a BE forwarding class. Both forwarding classes can still be mapped to a single queue to control the total amount of resources for the aggregate of the two flows.
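The many-to-one relationship between forwarding classes and queues can be pictured as a simple lookup table. The sketch below is illustrative only; the queue IDs, the `ef` entry, and the helper name `queues_in_use` are hypothetical and do not represent 7705 SAR configuration syntax.

```python
# Hypothetical mapping of forwarding classes to access ingress queue IDs.
fc_to_queue = {
    "l2": 1,  # HSDPA traffic, classified to the L2 forwarding class
    "be": 1,  # OAM traffic, left in the BE forwarding class
    "ef": 2,  # an example class that keeps its own queue
}


def queues_in_use(mapping):
    """Group forwarding classes by the queue they share."""
    shared = {}
    for fc, queue_id in mapping.items():
        shared.setdefault(queue_id, []).append(fc)
    return shared


print(queues_in_use(fc_to_queue))
```

In this sketch, queue 1 carries both `l2` and `be`, so any limits applied to that queue bound the aggregate of the two flows.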

A large but finite amount of memory is available for the queues. Within this memory space, many queues can be created, each defined by user-configurable parameters. This flexibility, and the complexity that comes with it, is necessary to create services that offer optimal quality of service, and is preferable to a restrictive, fixed-buffer implementation.

Memory allocation is optimized to guarantee the CBS for each queue. The queue space allocated beyond the CBS, up to the limit set by the MBS, depends on buffer space usage and on the existing guarantees to queues (that is, the CBS). The CBS defaults to 8 kB (for a 512-byte buffer size) or 18 kB (for a 2304-byte buffer size) for all access ingress queues on the 7705 SAR. Because only a small default queue depth (CBS) is allocated for each queue, all services at full scale are guaranteed to have buffers for queuing. The default value may need to be changed to meet the requirements of a specific traffic flow or flows.
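As a quick check on the default values, the following sketch converts a CBS expressed in kB into a number of whole buffers. It assumes that 1 kB means 1024 bytes, which this guide does not state; the function name `cbs_in_buffers` is hypothetical.

```python
def cbs_in_buffers(cbs_kb: int, buffer_size_bytes: int) -> int:
    """Number of whole buffers covered by a CBS given in kB (sketch)."""
    # Assumption: 1 kB = 1024 bytes (not stated in this guide).
    return (cbs_kb * 1024) // buffer_size_bytes


print(cbs_in_buffers(8, 512))    # default CBS with 512-byte buffers
print(cbs_in_buffers(18, 2304))  # default CBS with 2304-byte buffers
```

Under that assumption, the two defaults correspond to 16 buffers and 8 buffers respectively.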

Access ingress queuing and scheduling

Traffic management on the 7705 SAR uses a packet-based implementation of the dual leaky bucket model. Each queue has a guaranteed space bounded by the CBS and a maximum depth bounded by the MBS. New packets are queued as they arrive. Any packet that causes the MBS to be exceeded is discarded.
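The roles of the CBS and MBS at enqueue time can be sketched as a small admission check. This is a simplified model, not the 7705 SAR implementation: the function name `admit`, the byte-based accounting, and the `shared_free` parameter (buffer space currently available beyond the guaranteed CBS) are assumptions made for illustration.

```python
def admit(depth: int, size: int, cbs: int, mbs: int, shared_free: int) -> str:
    """Decide what happens to an arriving packet (sketch).

    depth       -- current queue depth in bytes
    size        -- size of the arriving packet in bytes
    cbs, mbs    -- committed and maximum queue depth in bytes
    shared_free -- assumed free buffer space outside the CBS guarantees
    """
    new_depth = depth + size
    if new_depth > mbs:
        return "discard"      # the packet would cause the MBS to be exceeded
    if new_depth <= cbs:
        return "guaranteed"   # fits entirely within the guaranteed space
    # Bytes of this packet that land above the CBS mark need shared space.
    above_cbs = new_depth - max(depth, cbs)
    return "shared" if above_cbs <= shared_free else "discard"
```

For example, with `cbs=8192` and `mbs=16384`, a 1000-byte packet arriving at a depth of 8000 bytes is accepted only if at least 808 bytes of shared space are free in this model.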

The packets in the queue are serviced by two different profiled (rate-based) schedulers, the In-Profile and Out-of-Profile schedulers, where CIR traffic is scheduled before PIR traffic. These two schedulers empty the queue continuously.

For 4-priority scheduling, rate-based schedulers (CIR and PIR) are combined with queue-type schedulers (EXP or BE). For 16-priority scheduling, the rate-based schedulers are combined with the strict priority schedulers (CoS-8 queue first to CoS-1 queue last).

Access ingress scheduling is supported on the adapter cards and ports listed in the following table. The supported scheduling modes are 4-priority scheduling and 16-priority scheduling. The table shows which scheduling mode each card and port supports at access ingress.


| Adapter card or port | 4-priority | 16-priority |
|---|---|---|
| 8-port Gigabit Ethernet Adapter card | ✓ | ✓ |
| Packet Microwave Adapter card | ✓ | ✓ |
| 6-port Ethernet 10Gbps Adapter card (1) | ✓ | |
| 10-port 1GigE/1-port 10GigE X-Adapter card (in 10-port 1GigE mode) | ✓ | ✓ |
| 4-port SAR-H Fast Ethernet module | ✓ | |
| 6-port SAR-M Ethernet module | ✓ | ✓ |
| Ethernet ports on the 7705 SAR-A | ✓ | ✓ |
| Ethernet ports on the 7705 SAR-Ax | ✓ | ✓ |
| Ethernet ports on the 7705 SAR-H | ✓ | ✓ |
| Ethernet ports on the 7705 SAR-Hc | ✓ | ✓ |
| Ethernet ports on the 7705 SAR-M | ✓ | ✓ |
| Ethernet ports on the 7705 SAR-Wx | ✓ | ✓ |
| Ethernet ports on the 7705 SAR-X (1) | ✓ | |
| 16-port T1/E1 ASAP Adapter card | ✓ | |
| 32-port T1/E1 ASAP Adapter card | ✓ | |
| 2-port OC3/STM1 Channelized Adapter card | ✓ | |
| 4-port OC3/STM1 Clear Channel Adapter card | ✓ | |
| 4-port OC3/STM1 / 1-port OC12/STM4 Adapter card | ✓ | |
| 4-port DS3/E3 Adapter card | ✓ | |
| T1/E1 ASAP ports on the 7705 SAR-A | ✓ | |
| T1/E1 ASAP ports on the 7705 SAR-M | ✓ | |
| TDM ports on the 7705 SAR-X | ✓ | |
| 12-port Serial Data Interface card | ✓ | |
| 6-port E&M Adapter card | ✓ | |
| 6-port FXS Adapter card | ✓ | |
| 8-port FXO Adapter card | ✓ | |
| 8-port Voice & Teleprotection card | ✓ | |
| 8-port C37.94 Teleprotection card | ✓ | |
| Integrated Services card | ✓ | |

(1) 4-priority scheduler for Gen-3 adapter card or platform.

Profiled (rate-based) scheduling

Each queue is serviced based on the user-configured CIR and PIR values. If the packets that are collected by a scheduler from a queue are flowing at a rate that is less than or equal to the CIR value, the packets are scheduled as in-profile. Packets with a flow rate that exceeds the CIR value but is less than the PIR value are scheduled as out-of-profile. The 4-priority scheduling figure depicts this behavior with the ‟In-Prof” and ‟Out-Prof” labels. This behavior is comparable to the dual leaky bucket implementation in ATM networks. With in-profile and out-of-profile scheduling, traffic that flows at rates up to the traffic contract (that is, CIR) from all the queues is serviced before traffic that flows at rates exceeding the traffic contract. This mode of operation ensures that service-level agreements (SLAs) are honored and that traffic that is committed to be transported is switched before traffic that exceeds the contract agreement.

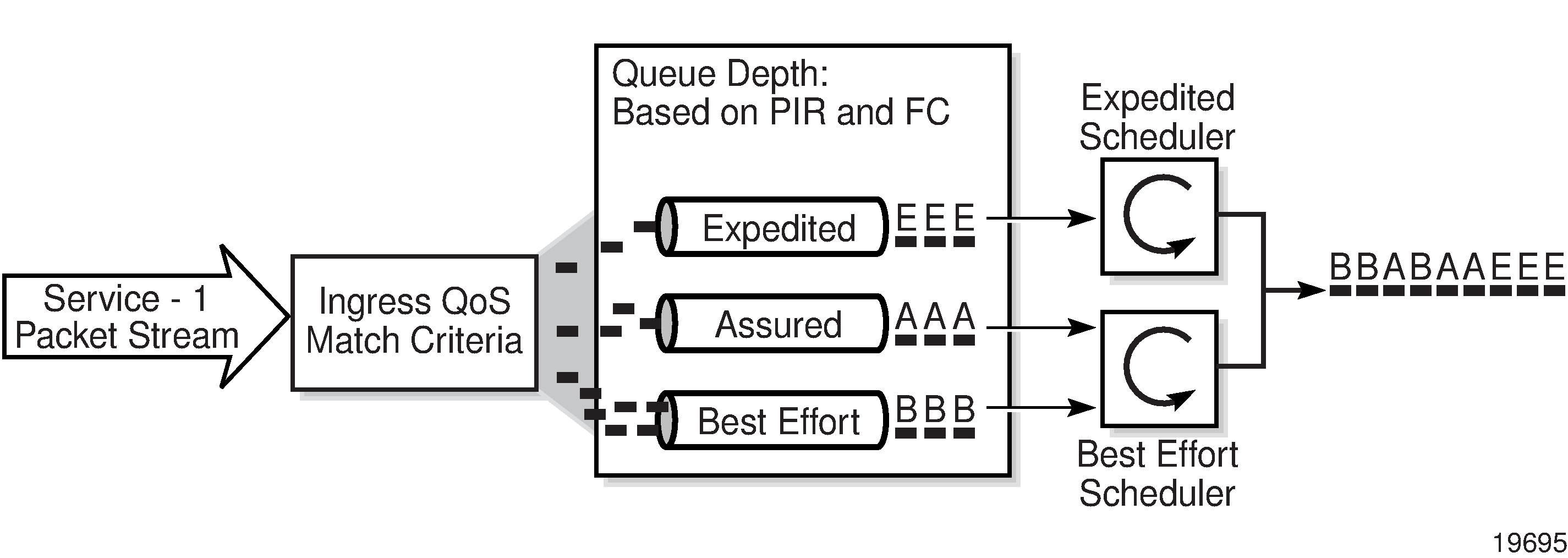
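The split between in-profile and out-of-profile traffic can be illustrated with simple arithmetic on rates. The sketch below is not 7705 SAR code: the function name `split_by_profile` and the `held` result are hypothetical, and treating traffic above the PIR as remaining in the queue (rather than being scheduled) is an assumption.

```python
def split_by_profile(offered: int, cir: int, pir: int):
    """Split an offered rate into (in_profile, out_of_profile, held).

    All values use the same unit, for example kb/s.
    """
    if cir > pir:
        raise ValueError("CIR must not exceed PIR")
    in_profile = min(offered, cir)                    # up to the CIR
    out_of_profile = min(offered, pir) - in_profile   # between CIR and PIR
    held = offered - in_profile - out_of_profile      # assumed to stay queued
    return in_profile, out_of_profile, held
```

For a queue with a hypothetical CIR of 2000 kb/s and PIR of 5000 kb/s, an offered rate of 3000 kb/s splits into 2000 kb/s in-profile and 1000 kb/s out-of-profile, with nothing held back.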

Queue-type scheduling

In addition to the profiled scheduling described above, queue-type scheduling is supported at access ingress. Queues are divided into two categories: those that are serviced by the Expedited scheduler and those that are serviced by the Best Effort scheduler.

The Expedited scheduler has precedence over the Best Effort scheduler. Therefore, at access ingress, CoS queues that are marked with an Expedited priority are serviced first. Then the Best Effort marked queues are serviced. In a default configuration, the Expedited scheduler services the following CoS queues before the Best Effort scheduler services the rest:

Expedited scheduler: NC, H1, EF, H2

Best Effort scheduler: L1, AF, L2, BE

If a packet with an Expedited forwarding class arrives while a Best Effort marked queue is being serviced, the Expedited scheduler takes over and services the Expedited marked CoS queue as soon as the packet from the Best Effort scheduler is serviced.

The schedulers at access ingress in the 7705 SAR service the group of all Expedited queues exhaustively ahead of the group of all Best Effort queues. This means that all Expedited queues have to be empty before any packet from a Best Effort queue is serviced.

The following basic rules apply to the queue-type scheduling of CoS queues:

1. Queues marked for Expedited scheduling are serviced in a round-robin fashion before any queues marked as Best Effort (in a default configuration, these would be queues CoS-8 through CoS-5).

2. These Expedited queues are serviced exhaustively within the round robin. For example, if in a default configuration there are two packets scheduled for service in both CoS-8 and CoS-5, one packet from CoS-8 is serviced, then one packet from CoS-5 is serviced, and then the scheduler returns to CoS-8, until all the packets are serviced.

3. After the Expedited scheduler has serviced all the packets in the queues marked for Expedited scheduling, the Best Effort scheduler starts serving the queues marked as Best Effort. The same principle described in step 2 is followed, until all the packets in the Best Effort queues are serviced.

4. If a packet arrives at any of the queues marked for Expedited scheduling while the scheduler is servicing a packet from a Best Effort queue, the Best Effort scheduler finishes servicing the current packet and then the Expedited scheduler immediately activates to service the packet in the Expedited queue. If there are no other packets to be serviced in any of the Expedited queues, the Best Effort scheduler resumes servicing the packets in the Best Effort queues. If the queues are configured according to the tables and defaults described in this guide, CoS-4 will be scheduled prior to CoS-1 among queues marked as Best Effort.

5. After one cycle is completed across all the queues marked as Best Effort, the next pass is started until all the packets in all the queues marked as Best Effort are serviced, or a packet arrives at a queue marked as Expedited and is serviced as described in step 2.
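The service order that results from these rules can be sketched for a static set of queued packets. This is a simplified illustration, not the 7705 SAR scheduler: the function name `service_order` and the packet labels are hypothetical, and the sketch does not model packets arriving while a Best Effort queue is being serviced.

```python
from collections import deque


def service_order(expedited, best_effort):
    """Return the order in which queued packets are serviced (sketch).

    Each argument maps a queue name to a list of packets; dictionary
    order is taken as the round-robin order within the group.
    """
    order = []
    # The Expedited group is drained completely before Best Effort starts.
    for group in (expedited, best_effort):
        queues = {name: deque(pkts) for name, pkts in group.items()}
        while any(queues.values()):
            # One round-robin pass: one packet from each non-empty queue.
            for name, queue in queues.items():
                if queue:
                    order.append((name, queue.popleft()))
    return order


order = service_order(
    {"CoS-8": ["a1", "a2"], "CoS-5": ["b1", "b2"]},
    {"CoS-4": ["c1"], "CoS-1": ["d1"]},
)
print(order)
```

With two packets each in CoS-8 and CoS-5, the sketch alternates between the two Expedited queues until both are empty, and only then services CoS-4 followed by CoS-1.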

4-priority scheduling

With 4-priority scheduling, profiled scheduling and queue-type scheduling are combined and the combination is applied to all of the access ingress queues. The profile and queue-type schedulers are combined and applied to the queues to provide maximum flexibility and scalability that meet the stringent QoS requirements of modern network applications. See Profiled (rate-based) scheduling and Queue-type scheduling for information about these types of scheduling.

Packets with a flow rate that is less than or equal to the CIR value of a queue are scheduled as in-profile. Packets with a flow rate that exceeds the CIR value but is less than the PIR value of a queue are scheduled as out-of-profile.

The scheduling cycle for 4-priority scheduling of CoS queues is shown in 4-priority scheduling. The following basic steps apply:

-

In-profile traffic from Expedited queues is serviced in round-robin fashion up to the CIR value. When a queue exceeds its configured CIR value, its state is changed to out-of-profile.

-