LDP Point-to-Point LSPs

This chapter provides information about Label Distribution Protocol (LDP) point-to-point Label Switched Paths (LSPs).

Applicability

This chapter is applicable to SR Linux 25.7.R1. There are no prerequisites or conditions on the hardware for this configuration.

Overview

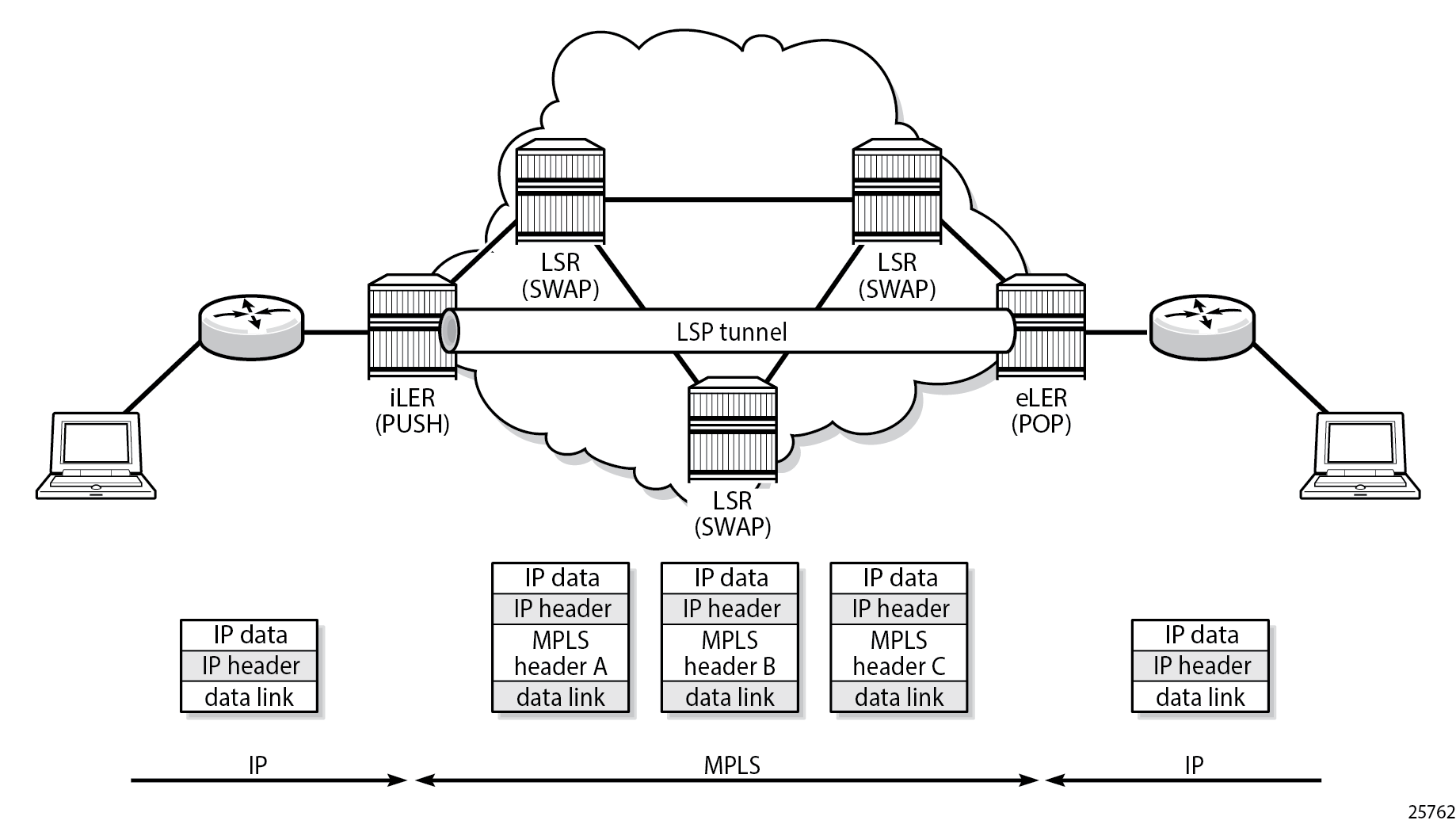

Because of the connectionless nature of the network layer protocol, IP packets travel through the network on a hop-by-hop basis, with routing decisions made at each node. As a result, hyperaggregation of data on specific links may occur, which can impair the provider's ability to guarantee service levels end to end across the network. To address these shortcomings, Multi-Protocol Label Switching (MPLS) was developed.

MPLS provides the capability to establish connection-oriented paths, called Label Switched Paths (LSPs), over a connectionless (IP) network. The LSP offers a mechanism to engineer network traffic independently from the underlying network routing protocol (mostly IP), to improve network resiliency and recovery options, and to permit delivery of services that are not readily supported by conventional IP routing techniques, such as Layer 2 Virtual Private Networks (VPNs). These benefits are essential for today's communication networks, which explains the wide deployment of MPLS technology.

RFC 3031 specifies the MPLS architecture whereas this chapter describes the configuration and troubleshooting of point-to-point LSPs on SR Linux.

Packet forwarding

In conventional IP forwarding, each router processes a packet by:

- analyzing the packet header

- referencing the local routing table to find the longest prefix match based on the destination address in the IP header

- sending out the packet on the corresponding interface

These steps can be viewed as the composition of two functions (see RFC 3031). The first function partitions the entire set of incoming packets into a set of Forwarding Equivalence Classes (FECs); all packets mapped to a particular FEC are forwarded along the same path to the same destination. The second function maps each FEC to a next-hop router. In conventional IP forwarding, each router along the path performs both functions.
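As a rough illustration of these two functions, the following Python sketch classifies a destination address by longest prefix match and then maps the resulting FEC to a next hop. The route entries and next-hop names are hypothetical and are not taken from the example topology; a real router performs this lookup in its forwarding plane, not in a Python dictionary.

```python
import ipaddress

# Hypothetical routing table: prefix -> next hop (illustrative values only).
ROUTES = {
    ipaddress.ip_network("0.0.0.0/0"): "next-hop-A",
    ipaddress.ip_network("192.0.2.0/24"): "next-hop-B",
    ipaddress.ip_network("192.0.2.6/32"): "next-hop-C",
}


def classify(destination: str) -> ipaddress.IPv4Network:
    """Function 1: map a packet to its FEC (the longest matching prefix)."""
    address = ipaddress.ip_address(destination)
    matches = [prefix for prefix in ROUTES if address in prefix]
    if not matches:
        raise LookupError(f"no route for {destination}")
    return max(matches, key=lambda prefix: prefix.prefixlen)


def next_hop(fec: ipaddress.IPv4Network) -> str:
    """Function 2: map the FEC to a next hop."""
    return ROUTES[fec]


for dst in ("192.0.2.6", "192.0.2.77", "198.51.100.1"):
    fec = classify(dst)
    print(dst, "->", fec, "->", next_hop(fec))
```

In conventional IP forwarding both functions run at every hop; in MPLS, only the ingress router runs the classification step.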

In MPLS, the assignment of a packet to a particular FEC is done only when the packet enters the network. The FEC is mapped to an LSP, which must be established before the packets can be forwarded.

An MPLS egress label, representing the FEC to which the packet is assigned, is attached to the packet (push operation) and the labeled packet is forwarded to the next hop router along that LSP path.

At subsequent hops, the packet IP header is not analyzed. Instead, the ingress label is used as an index into a table which specifies the next hop and a new egress label. The ingress label is replaced with the new egress label (swap operation), and the packet is forwarded to the specified next hop.

At the MPLS network egress, the ingress label is removed from the packet (pop operation). If this router is the destination of the packet (based on the remaining packet), the packet is handed to the receiving application, such as a MAC VRF. If this router is not the destination of the packet, the packet is sent into a new MPLS tunnel or forwarded by conventional IP forwarding toward the Layer 3 destination.
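The push, swap, and pop operations can be sketched as operations on a label stack. The `Packet` class, the helper names, and the label values 100 and 200 are hypothetical and serve only to illustrate the sequence described above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Packet:
    destination: str
    # Label stack; the top of the stack is the last list element.
    labels: List[int] = field(default_factory=list)


def push(packet: Packet, label: int) -> None:
    """Ingress: attach an egress label to the packet."""
    packet.labels.append(label)


def swap(packet: Packet, new_label: int) -> None:
    """Transit: replace the top (ingress) label with a new egress label."""
    if not packet.labels:
        raise ValueError("cannot swap on an unlabeled packet")
    packet.labels[-1] = new_label


def pop(packet: Packet) -> int:
    """Egress: remove the top label and return it."""
    if not packet.labels:
        raise ValueError("cannot pop an unlabeled packet")
    return packet.labels.pop()


packet = Packet(destination="192.0.2.6")
push(packet, 100)  # at the MPLS network ingress
swap(packet, 200)  # at a subsequent hop
pop(packet)        # at the MPLS network egress
print(packet)
```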

Terminology

- The MPLS router at the head-end of an LSP is called the ingress Label Edge Router (ILER)

- The MPLS router at the tail-end of an LSP is called the egress Label Edge Router (ELER)

The ILER receives unlabeled packets from outside the MPLS domain, applies MPLS egress labels to the packets, and forwards the labeled packets into the MPLS domain.

The ELER receives labeled packets from the MPLS domain, removes the ingress labels, and forwards unlabeled packets outside the MPLS domain. The ELER can signal an implicit-null label. This informs the previous hop to send MPLS packets without an outer egress label. This is known as Penultimate Hop Popping (PHP).
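The effect of an implicit-null advertisement on the previous hop can be sketched as follows. The value 3 is the reserved implicit-null label from RFC 3032; the function name and the other label values are hypothetical, and the sketch models only the label stack, not the rest of the forwarding decision.

```python
IMPLICIT_NULL = 3  # reserved label value defined in RFC 3032


def forward_label_stack(stack, advertised_label):
    """Return the label stack that is sent to the next hop.

    stack: list of labels on the received packet, top of stack last.
    advertised_label: label the downstream router advertised for the FEC.
    """
    if not stack:
        raise ValueError("expected a labeled packet")
    if advertised_label == IMPLICIT_NULL:
        # PHP: pop the outer label and send the packet without it.
        return stack[:-1]
    # Normal case: swap the outer label for the advertised label.
    return stack[:-1] + [advertised_label]


print(forward_label_stack([20005], IMPLICIT_NULL))  # outer label removed
print(forward_label_stack([20005], 20000))          # outer label swapped
```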

A Label Switching Router (LSR) is a device internal to an MPLS network, with all interfaces inside the MPLS domain. These devices switch labeled packets inside the MPLS domain. In the core of the network, LSRs ignore the packet IP header and simply forward the packet using the MPLS label swapping mechanism.

LSP establishment

The LSP must be established before any packets can be forwarded. To set up an LSP, labels need to be distributed for the path. Labels are usually distributed by a downstream router in the upstream direction (relative to the data flow). Label distribution can be static or via a protocol such as LDP. For static P2P LSPs, see Static MPLS LSPs.

LDP (RFC 5036) can be considered as an extension to the network Interior Gateway Protocol (IGP). As routers become aware of new destination networks, they advertise labels in the upstream direction that allow upstream routers to reach the destination.

LDP loop-free alternate (LFA) allows for computing backup paths and advertising the backup labels before a failure takes place. In this way, traffic can flow almost continuously, without waiting for routing protocol convergence; see MPLS LDP LFA using ISIS as IGP.

Example topology

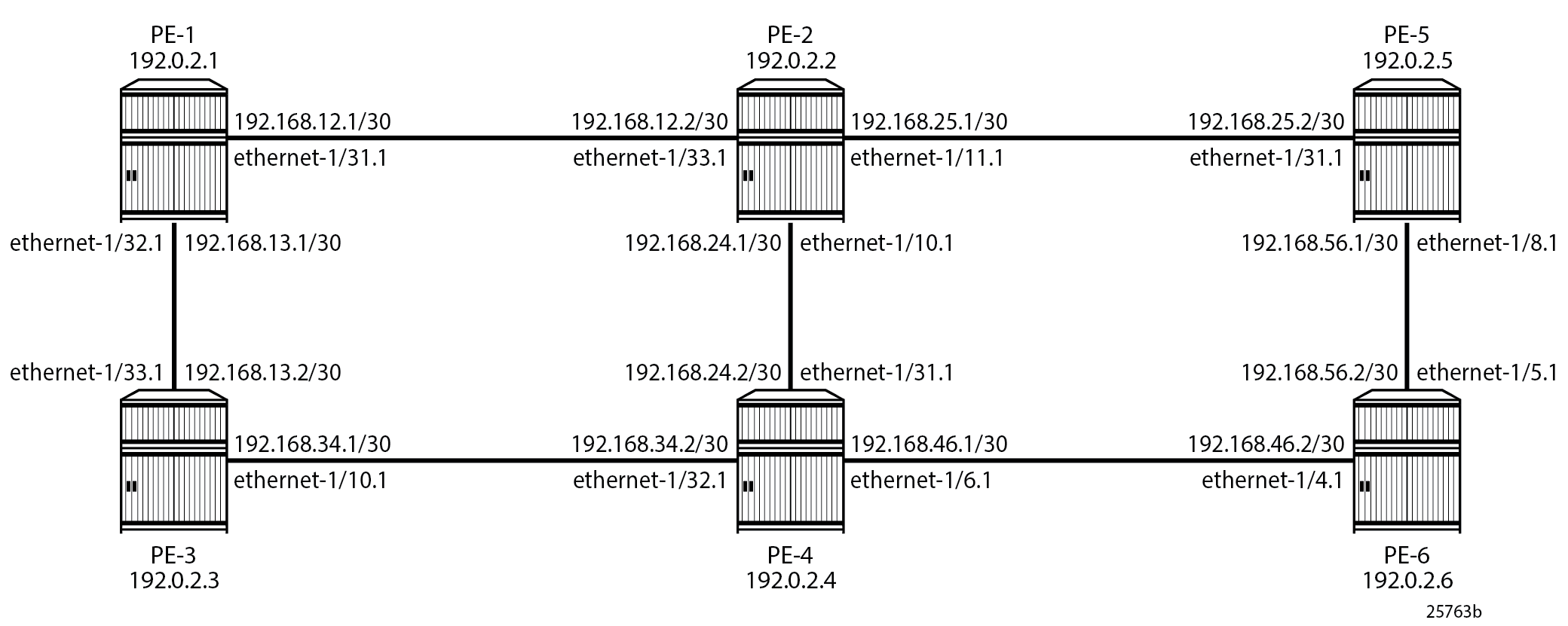

The "MPLS example topology" figure shows the example topology, which consists of six SR Linux nodes located in a single autonomous system.

Configuration

The configuration of dynamic MPLS LSPs requires a correctly working Interior Gateway Protocol (IGP). Intermediate System to Intermediate System (IS-IS) or Open Shortest Path First (OSPF) can be used as IGP.

LDP is a simple label distribution protocol with basic MPLS functionality (no traffic engineering). It supports LFA for fast rerouting; see MPLS LDP LFA using ISIS as IGP. LDP relies on the underlying routing information provided by an IGP to forward labeled packets. Each LDP-configured LSR originates a label for its system address and a label for each FEC for which it has a next hop that is external to the MPLS domain, without the need to manually configure the LSPs. When deviations from this default behavior are needed, import and export policies can be applied.

The configuration is as simple as enabling the LDP protocol instance and adding all network interfaces, for each node. The configuration for IPv4 on node PE-1 is as follows; similar configurations apply on the other nodes. Configuration for IPv6 is also supported, but not described in this chapter.

# On PE-1:

enter candidate

system mpls label-ranges {

dynamic dlb-ldp {

start-label 20000

end-label 29999

}

}

enter candidate

network-instance default protocols ldp {

admin-state enable

dynamic-label-block dlb-ldp

fec-resolution {

longest-prefix true # optional; default: exact match

}

discovery {

interfaces {

interface ethernet-1/31.1 {

ipv4 {

admin-state enable

}

}

interface ethernet-1/32.1 {

ipv4 {

admin-state enable

}

}

}

}

}

The LDP label range must be explicitly configured on all nodes as a dynamic label block in the system mpls label-ranges context. In this example, FEC resolution is based on the longest prefix match, because longest-prefix true is set in the network-instance default protocols ldp fec-resolution context. By default, SR Linux resolves /32 IPv4 and /128 IPv6 FECs using exactly matching IGP routes. When longest-prefix match is enabled, IPv4 and IPv6 prefix FECs can also be resolved by less-specific routes in the route table, as long as the prefix bits of the route match the corresponding bits of the FEC. The matching IP route with the longest prefix is the route that is used to resolve the FEC.
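The difference between exact-match and longest-prefix FEC resolution can be sketched in Python as follows. This is a simplified model of the behavior described above, not the SR Linux implementation; the function name and the route prefixes are hypothetical.

```python
import ipaddress


def resolve_fec(fec, routes, longest_prefix=False):
    """Return the route prefix that resolves the FEC, or None.

    fec: prefix FEC as a string, for example "192.0.2.6/32".
    routes: iterable of route prefixes (strings) from the route table.
    longest_prefix: False = exact match only, True = longest covering route.
    """
    fec_net = ipaddress.ip_network(fec)
    nets = [ipaddress.ip_network(route) for route in routes]
    if not longest_prefix:
        return str(fec_net) if fec_net in nets else None
    # Keep only routes of the same address family that cover the FEC.
    covering = [
        net for net in nets
        if net.version == fec_net.version and fec_net.subnet_of(net)
    ]
    if not covering:
        return None
    return str(max(covering, key=lambda net: net.prefixlen))


# Hypothetical route table without a /32 route for the FEC.
routes = ["192.0.2.0/24", "192.0.0.0/16"]
print(resolve_fec("192.0.2.6/32", routes))                       # unresolved
print(resolve_fec("192.0.2.6/32", routes, longest_prefix=True))  # resolved
```

With exact matching, the FEC stays unresolved until a /32 route appears; with longest-prefix matching, the most specific covering route resolves it.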

On each node, the local network interfaces that are part of the MPLS network must be configured in the network-instance default protocols ldp discovery interfaces context.

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# show / network-instance default protocols ldp summary

=========================================================================================================================================================

Net-Inst default LDP Summary

---------------------------------------------------------------------------------------------------------------------------------------------------------

Admin State : enable

FEC Match Longest Prefix : true

Dynamic Label Block : dlb-ldp

Dynamic Label Block Status : available

Graceful Restart Enabled : false

GR Max Reconnect Time : 120

GR Max Recovery Time : 120

Max Multipaths : 1

IPv4 Oper State : up

IPv4 Last oper state change : 2025-08-25T11:58:41.600Z

IPv4 LSR ID : 192.0.2.1 # different for each node

IPv6 Oper State : down # this setup does not have IPv6 configuration

IPv6 Oper Down Reason : no-system-ipv6-address

IPv6 Last oper state change : 2025-08-25T11:58:39.900Z

IPv6 LSR ID : ::

Session Keepalive Holdtime : 180

Session Keepalive Interval : 60

---------------------------------------------------------------------------------------------------------------------------------------------------------

=========================================================================================================================================================

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# show / network-instance default protocols ldp neighbor

=========================================================================================================================================================

Net-Inst default LDP neighbors

---------------------------------------------------------------------------------------------------------------------------------------------------------

+--------------------------------------------------------------------------------------------+

| Interface        Peer LDP ID  Nbr Address   Local Address  Proposed  Negotiated  Remaining |

|                                                            Holdtime  Holdtime    Holdtime  |

+============================================================================================+

| ethernet-1/31.1  192.0.2.2:0  192.168.12.2  192.168.12.1   15        15          12        |

| ethernet-1/32.1  192.0.2.3:0  192.168.13.2  192.168.13.1   15        15          11        |

+--------------------------------------------------------------------------------------------+

=========================================================================================================================================================

The LDP tunnels that are automatically set up to the remote destinations can be verified as follows:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# show / network-instance default tunnel-table all

---------------------------------------------------------------------------------------------------------------------------------------------------------

IPv4 tunnel table of network-instance "default"

---------------------------------------------------------------------------------------------------------------------------------------------------------

+----------------------+--------+----------------+----------+-----+----------+---------+--------------------------+--------------+--------------+

| IPv4 Prefix | Encaps | Tunnel Type | Tunnel | FIB | Metric | Prefere | Last Update | Next-hop | Next-hop |

| | Type | | ID | | | nce | | (Type) | |

+======================+========+================+==========+=====+==========+=========+==========================+==============+==============+

| 192.0.2.2/32 | mpls | ldp | 65537 | Y | 10 | 9 | 2025-08-25T11:59:08.272Z | 192.168.12.2 | ethernet- |

| | | | | | | | | (mpls) | 1/31.1 |

| 192.0.2.3/32 | mpls | ldp | 65538 | Y | 10 | 9 | 2025-08-25T11:59:27.962Z | 192.168.13.2 | ethernet- |

| | | | | | | | | (mpls) | 1/32.1 |

| 192.0.2.4/32 | mpls | ldp | 65539 | Y | 20 | 9 | 2025-08-25T11:59:55.797Z | 192.168.12.2 | ethernet- |

| | | | | | | | | (mpls) | 1/31.1 |

| 192.0.2.5/32 | mpls | ldp | 65540 | Y | 20 | 9 | 2025-08-25T12:00:14.802Z | 192.168.12.2 | ethernet- |

| | | | | | | | | (mpls) | 1/31.1 |

| 192.0.2.6/32 | mpls | ldp | 65541 | Y | 30 | 9 | 2025-08-25T12:00:32.542Z | 192.168.12.2 | ethernet- |

| | | | | | | | | (mpls) | 1/31.1 |

+----------------------+--------+----------------+----------+-----+----------+---------+--------------------------+--------------+--------------+

---------------------------------------------------------------------------------------------------------------------------------------------------------

5 LDP tunnels, 5 active, 0 inactive

---------------------------------------------------------------------------------------------------------------------------------------------------------

---------------------------------------------------------------------------------------------------------------------------------------------------------

IPv6 tunnel table of network-instance "default"

---------------------------------------------------------------------------------------------------------------------------------------------------------

<no_entries>

---------------------------------------------------------------------------------------------------------------------------------------------------------

---------------------------------------------------------------------------------------------------------------------------------------------------------

Five LDP tunnels start on each node, one to each remote node. A node does not have an LDP tunnel to itself. As a result of the SPF computation on PE-1, only the LDP tunnel from PE-1 to PE-3 (IPv4 prefix 192.0.2.3/32) starts at the ethernet-1/32.1 subinterface on PE-1 (to PE-3). The LDP tunnels from PE-1 to all other nodes start at the ethernet-1/31.1 subinterface on PE-1 (to PE-2). The SPF computation on the other nodes governs the LDP tunnels that start on them.
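The split of the tunnels over the two subinterfaces can be checked with a short script. The sketch below assumes that the tunnel-table rows have already been extracted into a dictionary (the values are copied from the PE-1 output above); the function name is hypothetical and no SR Linux API is involved.

```python
from collections import defaultdict

# (next-hop IP, egress subinterface) per tunnel prefix, copied from the
# tunnel-table output on PE-1.
TUNNELS = {
    "192.0.2.2/32": ("192.168.12.2", "ethernet-1/31.1"),
    "192.0.2.3/32": ("192.168.13.2", "ethernet-1/32.1"),
    "192.0.2.4/32": ("192.168.12.2", "ethernet-1/31.1"),
    "192.0.2.5/32": ("192.168.12.2", "ethernet-1/31.1"),
    "192.0.2.6/32": ("192.168.12.2", "ethernet-1/31.1"),
}


def group_by_subinterface(tunnels):
    """Group tunnel prefixes by the subinterface they start on."""
    grouped = defaultdict(list)
    for prefix in sorted(tunnels):
        _next_hop, subinterface = tunnels[prefix]
        grouped[subinterface].append(prefix)
    return dict(grouped)


for subinterface, prefixes in group_by_subinterface(TUNNELS).items():
    print(subinterface, prefixes)
```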

The LDP tunnel from PE-1 to PE-6 (tunnel ID 65541) uses egress label 20005 and passes through PE-2. This can be verified as follows:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default tunnel-table ipv4 tunnel * type * owner * id * |

as table | filter fields encapsulation-type metric preference next-hop-group

+-------------+-------------------+--------------------------+-------------+-----------+------------------+-----------+-----------+--------------------+

| Network- | Ipv4-prefix | Type | Owner | Id | Encapsulation- | Metric | Preferenc | Next-hop-group |

| instance | | | | | type | | e | |

+=============+===================+==========================+=============+===========+==================+===========+===========+====================+

| default | 192.0.2.2/32 | ldp | ldp_mgr | 65537 | mpls | 10 | 9 | 28227483 |

| default | 192.0.2.3/32 | ldp | ldp_mgr | 65538 | mpls | 10 | 9 | 28227484 |

| default | 192.0.2.4/32 | ldp | ldp_mgr | 65539 | mpls | 20 | 9 | 28227485 |

| default | 192.0.2.5/32 | ldp | ldp_mgr | 65540 | mpls | 20 | 9 | 28227486 |

| default | 192.0.2.6/32 | ldp | ldp_mgr | 65541 | mpls | 30 | 9 | 28227487 |

+-------------+-------------------+--------------------------+-------------+-----------+------------------+-----------+-----------+--------------------+

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default tunnel-table

network-instance default {

tunnel-table {

---snip---

ipv4 {

---snip---

tunnel 192.0.2.6/32 type ldp owner ldp_mgr id 65541 {

encapsulation-type mpls

next-hop-group 28227487

metric 30

preference 9

last-app-update "2025-08-25T12:00:32.542Z (3 minutes ago)"

resource-allocation-failed false

fib-programming {

last-successful-operation-type add

last-successful-operation-timestamp "2025-08-25T12:00:32.543Z (3 minutes ago)"

pending-operation-type none

last-failed-operation-type none

}

}

statistics {

active-tunnels 5

total-tunnels 5

}

tunnel-summary {

tunnel-type ldp {

active-tunnels 5

}

}

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default route-table next-hop-group *

network-instance default {

route-table {

---snip---

next-hop-group 28227487 {

backup-next-hop-group 0

backup-active false

fib-programming {

last-successful-operation-type add

last-successful-operation-timestamp "2025-08-25T12:00:32.543Z (7 minutes ago)"

pending-operation-type none

last-failed-operation-type none

}

next-hop 0 {

next-hop 28227474

resolved not-applicable

resource-allocation-failed false

}

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default route-table next-hop * |

as table | filter fields type ip-address subinterface mpls-encapsulation/pushed-mpls-label-stack

+--------------------------+----------------------+--------------------+--------------------------+--------------------------+--------------------------+

| Network-instance | Index | Type | Ip-address | Subinterface | Mpls-encapsulation |

| | | | | | pushed-mpls-label-stack |

+==========================+======================+====================+==========================+==========================+==========================+

| ---snip--- # non MPLS |

| default | 28227470 | mpls | 192.168.12.2 | ethernet-1/31.1 | 20000 |

| default | 28227471 | mpls | 192.168.13.2 | ethernet-1/32.1 | 20000 |

| default | 28227472 | mpls | 192.168.12.2 | ethernet-1/31.1 | 20003 |

| default | 28227473 | mpls | 192.168.12.2 | ethernet-1/31.1 | 20004 |

| default | 28227474 | mpls | 192.168.12.2 | ethernet-1/31.1 | 20005 |

+--------------------------+----------------------+--------------------+--------------------------+--------------------------+--------------------------+

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default route-table next-hop *

network-instance default {

route-table {

---snip---

next-hop 28227474 {

resource-allocation-failed false

type mpls

ip-address 192.168.12.2

subinterface ethernet-1/31.1

mpls-encapsulation {

entropy-label-transmit false

pushed-mpls-label-stack [

20005

]

}

}

On PE-1, the LDP tunnel to PE-6 (tunnel ID 65541) has next-hop-group 28227487, which uses next-hop 28227474 that specifies pushed-mpls-label-stack [20005] and ip-address 192.168.12.2 via subinterface ethernet-1/31.1.
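The lookup chain from tunnel to next-hop group to next hop can be modeled as three dictionary lookups. The identifiers and values below are copied from the PE-1 state output; the dictionary layout and the function name are hypothetical and only mirror the structure of that output.

```python
# State copied from the PE-1 output: tunnel -> next-hop-group -> next-hop.
TUNNELS = {
    65541: {"prefix": "192.0.2.6/32", "next_hop_group": 28227487},
}
NEXT_HOP_GROUPS = {
    28227487: [28227474],  # list of next-hop indexes in the group
}
NEXT_HOPS = {
    28227474: {
        "ip_address": "192.168.12.2",
        "subinterface": "ethernet-1/31.1",
        "pushed_mpls_label_stack": [20005],
    },
}


def resolve_tunnel(tunnel_id):
    """Return (ip, subinterface, label stack) for each next hop of a tunnel."""
    group_id = TUNNELS[tunnel_id]["next_hop_group"]
    resolved = []
    for next_hop_id in NEXT_HOP_GROUPS[group_id]:
        next_hop = NEXT_HOPS[next_hop_id]
        resolved.append(
            (
                next_hop["ip_address"],
                next_hop["subinterface"],
                next_hop["pushed_mpls_label_stack"],
            )
        )
    return resolved


print(resolve_tunnel(65541))
```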

The MPLS ingress label processing on each node through which the LDP tunnel passes can be verified as follows:

# On PE-2:

--{ running }--[ ]--

A:admin@PE-2# show / network-instance default route-table mpls

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

| Label | Operation | Type | Next Net-Inst | Next-hop IP (Type) | Next-hop | Next-hop MPLS |

| | | | | | Subinterface | labels |

+=========+===========+=============+=================+========================+======================+==================+

| 20000 | POP | ldp | default | | | |

| 20001 | SWAP | ldp | N/A | 192.168.12.1 (mpls) | ethernet-1/33.1 | 20000 |

| 20002 | SWAP | ldp | N/A | 192.168.12.1 (mpls) | ethernet-1/33.1 | 20002 |

| 20003 | SWAP | ldp | N/A | 192.168.24.2 (mpls) | ethernet-1/10.1 | 20000 |

| 20004 | SWAP | ldp | N/A | 192.168.25.2 (mpls) | ethernet-1/11.1 | 20000 |

| 20005 | SWAP | ldp | N/A | 192.168.24.2 (mpls) | ethernet-1/10.1 | 20005 |

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

--{ running }--[ ]--

A:admin@PE-2# info from state with-context / network-instance default route-table mpls label-entry * |

as table | filter fields operation entry-type next-hop-group

+------------------------------------------------------------------------+-------------+-----------+-----------------------------+----------------------+

| Network-instance | Label-value | Operation | Entry-type | Next-hop-group |

+========================================================================+=============+===========+=============================+======================+

| default | 20000 | pop | ldp | |

| default | 20001 | swap | ldp | 28209398 |

| default | 20002 | swap | ldp | 28209399 |

| default | 20003 | swap | ldp | 28209400 |

| default | 20004 | swap | ldp | 28209401 |

| default | 20005 | swap | ldp | 28209402 |

+------------------------------------------------------------------------+-------------+-----------+-----------------------------+----------------------+

When PE-2 receives a packet labeled with (ingress) label 20000, PE-2 pops the label and processes the packet locally. When PE-2 receives a packet labeled with (ingress) label 20001, PE-2 swaps the label to the next-hop MPLS (egress) label 20000 and forwards the packet to PE-1 (next-hop IP 192.168.12.1) via next-hop subinterface ethernet-1/33.1. When PE-2 receives a packet labeled with (ingress) label 20003, PE-2 swaps the label to the next-hop MPLS (egress) label 20000 and forwards the packet to PE-4 (next-hop IP 192.168.24.2) via next-hop subinterface ethernet-1/10.1.

PE-2 receives a packet from PE-1, which is labeled with (ingress) label 20005. PE-2 swaps the label to the next-hop MPLS (egress) label 20005 and forwards the packet to PE-4 (next-hop IP 192.168.24.2) via next-hop subinterface ethernet-1/10.1.

# On PE-4:

--{ running }--[ ]--

A:admin@PE-4# show / network-instance default route-table mpls

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

| Label | Operation | Type | Next Net-Inst | Next-hop IP (Type) | Next-hop | Next-hop MPLS |

| | | | | | Subinterface | labels |

+=========+===========+=============+=================+========================+======================+==================+

| 20000 | POP | ldp | default | | | |

| 20001 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20000 |

| 20002 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20001 |

| 20003 | SWAP | ldp | N/A | 192.168.34.1 (mpls) | ethernet-1/32.1 | 20000 |

| 20004 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20004 |

| 20005 | SWAP | ldp | N/A | 192.168.46.2 (mpls) | ethernet-1/6.1 | 20000 |

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

PE-4 receives the packet from PE-2, which is labeled with (ingress) label 20005. PE-4 swaps the label to the next-hop MPLS (egress) label 20000 and forwards the packet to PE-6 (next-hop IP 192.168.46.2) via next-hop subinterface ethernet-1/6.1.

# On PE-6:

--{ running }--[ ]--

A:admin@PE-6# show / network-instance default route-table mpls

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

| Label | Operation | Type | Next Net-Inst | Next-hop IP (Type) | Next-hop | Next-hop MPLS |

| | | | | | Subinterface | labels |

+=========+===========+=============+=================+========================+======================+==================+

| 20000 | POP | ldp | default | | | |

| 20001 | SWAP | ldp | N/A | 192.168.56.1 (mpls) | ethernet-1/5.1 | 20000 |

| 20002 | SWAP | ldp | N/A | 192.168.46.1 (mpls) | ethernet-1/4.1 | 20002 |

| 20003 | SWAP | ldp | N/A | 192.168.46.1 (mpls) | ethernet-1/4.1 | 20001 |

| 20004 | SWAP | ldp | N/A | 192.168.46.1 (mpls) | ethernet-1/4.1 | 20003 |

| 20005 | SWAP | ldp | N/A | 192.168.46.1 (mpls) | ethernet-1/4.1 | 20000 |

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

PE-6 receives the packet from PE-4, which is labeled with (ingress) label 20000. PE-6 pops the label and processes the packet locally.
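The hop-by-hop label processing for the tunnel from PE-1 to PE-6 can be replayed with a small trace function. The label entries are copied from the route-table mpls outputs on PE-2, PE-4, and PE-6; mapping the next-hop IP addresses to node names follows the descriptions above. The table layout and the function name are hypothetical.

```python
# Per-node MPLS entries relevant to the PE-1 -> PE-6 tunnel:
# ingress label -> (operation, egress label, next node).
LFIB = {
    "PE-2": {20005: ("swap", 20005, "PE-4")},
    "PE-4": {20005: ("swap", 20000, "PE-6")},
    "PE-6": {20000: ("pop", None, None)},
}


def trace(first_hop, label, max_hops=16):
    """Follow a labeled packet until a node pops the label."""
    hops = []
    node = first_hop
    for _ in range(max_hops):
        operation, egress_label, next_node = LFIB[node][label]
        hops.append((node, label, operation, egress_label))
        if operation == "pop":
            return hops
        node, label = next_node, egress_label
    raise RuntimeError("hop limit reached without a pop operation")


# PE-1 pushes label 20005 and sends the packet to PE-2.
for node, ingress, operation, egress in trace("PE-2", 20005):
    print(node, ingress, operation, egress)
```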

The (egress) next hops can be verified as follows:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# show / network-instance default route-table next-hop

---------------------------------------------------------------------------------------------------------------------------------------------------------

Next-hop route table of network instance default

---------------------------------------------------------------------------------------------------------------------------------------------------------

---snip--- # non MPLS

Index : 28227470

Next-hop : 192.168.12.2

Type : mpls

MPLS label stack: [20000]

Index : 28227471

Next-hop : 192.168.13.2

Type : mpls

MPLS label stack: [20000]

Index : 28227472

Next-hop : 192.168.12.2

Type : mpls

MPLS label stack: [20003]

Index : 28227473

Next-hop : 192.168.12.2

Type : mpls

MPLS label stack: [20004]

Index : 28227474

Next-hop : 192.168.12.2

Type : mpls

MPLS label stack: [20005]

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default route-table next-hop * |

as table | filter fields type ip-address subinterface mpls-encapsulation/pushed-mpls-label-stack

+--------------------------+----------------------+--------------------+--------------------------+--------------------------+--------------------------+

| Network-instance | Index | Type | Ip-address | Subinterface | Mpls-encapsulation |

| | | | | | pushed-mpls-label-stack |

+==========================+======================+====================+==========================+==========================+==========================+

| ---snip--- # non MPLS |

| default | 28227470 | mpls | 192.168.12.2 | ethernet-1/31.1 | 20000 |

| default | 28227471 | mpls | 192.168.13.2 | ethernet-1/32.1 | 20000 |

| default | 28227472 | mpls | 192.168.12.2 | ethernet-1/31.1 | 20003 |

| default | 28227473 | mpls | 192.168.12.2 | ethernet-1/31.1 | 20004 |

| default | 28227474 | mpls | 192.168.12.2 | ethernet-1/31.1 | 20005 |

+--------------------------+----------------------+--------------------+--------------------------+--------------------------+--------------------------+

In the preceding output, the (egress) next hops that relate to LDP tunnels are those with MPLS label stack: [<LDP label>]. PE-1 learns the (egress) labels that it must use to reach a destination from the FEC prefixes that its LDP peers (PE-2 and PE-3) advertise. This information can be obtained as follows:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-address

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

received-address {

peer 192.0.2.2 label-space-id 0 {

ip-address [

192.0.2.2

192.168.12.2

192.168.24.1

192.168.25.1

]

}

peer 192.0.2.3 label-space-id 0 {

ip-address [

192.0.2.3

192.168.13.2

192.168.34.1

]

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

received-prefix-fec {

prefix-fec 192.0.2.2/32 lsr-id 192.0.2.2 label-space-id 0 {

label 20000

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding true

next-hop 1 {

next-hop 192.168.12.2

next-hop-type primary

interface ethernet-1/31.1

}

}

prefix-fec 192.0.2.2/32 lsr-id 192.0.2.3 label-space-id 0 {

label 20002

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

}

prefix-fec 192.0.2.3/32 lsr-id 192.0.2.2 label-space-id 0 {

label 20002

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

}

prefix-fec 192.0.2.3/32 lsr-id 192.0.2.3 label-space-id 0 {

label 20000

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding true

next-hop 1 {

next-hop 192.168.13.2

next-hop-type primary

interface ethernet-1/32.1

}

}

prefix-fec 192.0.2.4/32 lsr-id 192.0.2.2 label-space-id 0 {

label 20003

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding true

next-hop 1 {

next-hop 192.168.12.2

next-hop-type primary

interface ethernet-1/31.1

}

}

prefix-fec 192.0.2.4/32 lsr-id 192.0.2.3 label-space-id 0 {

label 20003

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

}

prefix-fec 192.0.2.5/32 lsr-id 192.0.2.2 label-space-id 0 {

label 20004

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding true

next-hop 1 {

next-hop 192.168.12.2

next-hop-type primary

interface ethernet-1/31.1

}

}

prefix-fec 192.0.2.5/32 lsr-id 192.0.2.3 label-space-id 0 {

label 20004

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

}

prefix-fec 192.0.2.6/32 lsr-id 192.0.2.2 label-space-id 0 {

label 20005

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding true

next-hop 1 {

next-hop 192.168.12.2

next-hop-type primary

interface ethernet-1/31.1

}

}

prefix-fec 192.0.2.6/32 lsr-id 192.0.2.3 label-space-id 0 {

label 20005

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec prefix-fec * lsr-id * label-space-id 0 |

as table | filter fields *

+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+

| Network- | Fec | Lsr-id | Label-space- | Label | Entropy- | Ingress-lsr- | Used-in- | Not-used- |

| instance | | | id | | label- | fec | forwarding | reason |

| | | | | | transmit | | | |

+===============+===============+===============+===============+===============+===============+===============+===============+===============+

| default | 192.0.2.2/32 | 192.0.2.2 | 0 | 20000 | false | true | true | |

| default | 192.0.2.2/32 | 192.0.2.3 | 0 | 20002 | false | true | false | |

| default | 192.0.2.3/32 | 192.0.2.2 | 0 | 20002 | false | true | false | |

| default | 192.0.2.3/32 | 192.0.2.3 | 0 | 20000 | false | true | true | |

| default | 192.0.2.4/32 | 192.0.2.2 | 0 | 20003 | false | true | true | |

| default | 192.0.2.4/32 | 192.0.2.3 | 0 | 20003 | false | true | false | |

| default | 192.0.2.5/32 | 192.0.2.2 | 0 | 20004 | false | true | true | |

| default | 192.0.2.5/32 | 192.0.2.3 | 0 | 20004 | false | true | false | |

| default | 192.0.2.6/32 | 192.0.2.2 | 0 | 20005 | false | true | true | |

| default | 192.0.2.6/32 | 192.0.2.3 | 0 | 20005 | false | true | false | |

+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec prefix-fec * lsr-id * label-space-id 0 next-hop * |

as table | filter fields *

+-------------+------------------+-------------+------------------------+----------+-------------+-------------+-----------------------+-------------+

| Network- | Prefix-fec fec | Prefix-fec | Prefix-fec label- | Index | Next-hop | Next-hop- | Interface | Outer-label |

| instance | | lsr-id | space-id | | | type | | |

+=============+==================+=============+========================+==========+=============+=============+=======================+=============+

| default | 192.0.2.2/32 | 192.0.2.2 | 0 | 1 | 192.168.12. | primary | ethernet-1/31.1 | |

| | | | | | 2 | | | |

| default | 192.0.2.3/32 | 192.0.2.3 | 0 | 1 | 192.168.13. | primary | ethernet-1/32.1 | |

| | | | | | 2 | | | |

| default | 192.0.2.4/32 | 192.0.2.2 | 0 | 1 | 192.168.12. | primary | ethernet-1/31.1 | |

| | | | | | 2 | | | |

| default | 192.0.2.5/32 | 192.0.2.2 | 0 | 1 | 192.168.12. | primary | ethernet-1/31.1 | |

| | | | | | 2 | | | |

| default | 192.0.2.6/32 | 192.0.2.2 | 0 | 1 | 192.168.12. | primary | ethernet-1/31.1 | |

| | | | | | 2 | | | |

+-------------+------------------+-------------+------------------------+----------+-------------+-------------+-----------------------+-------------+

PE-1 receives IP addresses and FEC prefixes from its directly connected LDP peers, PE-2 and PE-3 (identified as peer or lsr-id in the output). PE-1 may receive the same FEC prefix, typically with different MPLS labels, from all or some of its LDP peers. For each FEC prefix, PE-1 uses only the binding marked with used-in-forwarding true: it labels the IP packet with the corresponding (egress) label and sends the labeled packet to the corresponding next hop 1.

Each node receives five FEC prefixes (from each of its LDP peers), which correspond with the system addresses of the remote nodes .
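The selection of the binding that is used in forwarding can be sketched in Python. This is a minimal illustration only, not SR Linux code: the `Binding` type, the `select_binding` function, and the `igp_next_hop` table are hypothetical names, and the sketch assumes that the binding in use is the one received from the peer that is the IGP next hop for the FEC prefix. The labels and LSR IDs are taken from the outputs in this chapter.

```python
from typing import List, NamedTuple, Optional


class Binding(NamedTuple):
    fec: str      # FEC prefix, for example "192.0.2.6/32"
    lsr_id: str   # LDP peer that advertised the binding
    label: int    # label advertised by that peer


def select_binding(bindings: List[Binding], fec: str,
                   next_hop_lsr: str) -> Optional[Binding]:
    """Return the binding for `fec` received from the next-hop LSR, if any."""
    for b in bindings:
        if b.fec == fec and b.lsr_id == next_hop_lsr:
            return b
    return None


# Bindings received on PE-1 (subset of the chapter's output).
received = [
    Binding("192.0.2.6/32", "192.0.2.2", 20005),
    Binding("192.0.2.6/32", "192.0.2.3", 20005),
    Binding("192.0.2.3/32", "192.0.2.2", 20002),
    Binding("192.0.2.3/32", "192.0.2.3", 20000),
]

# Assumption: the IGP next-hop LSR per FEC prefix, as seen on PE-1.
igp_next_hop = {"192.0.2.6/32": "192.0.2.2", "192.0.2.3/32": "192.0.2.3"}

for fec, nh in igp_next_hop.items():
    chosen = select_binding(received, fec, nh)
    print(fec, "-> peer", chosen.lsr_id, "label", chosen.label)
```

The bindings that are not selected stay in the table with used-in-forwarding false, which matches the received-prefix-fec outputs shown in this chapter.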

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp peers

network-instance default {

protocols {

ldp {

peers {

session-keepalive-holdtime 180

session-keepalive-interval 60

peer 192.0.2.2 label-space-id 0 {

---snip---

end-of-lib {

ipv4-prefix-fecs {

sent true

received true

}

---snip---

}

statistics {

---snip---

address-statistics {

ipv4 {

received-addresses 4 # own system IP + 3 peer interface IPs

advertised-addresses 3 # own system IP + 2 peer interface IPs

}

---snip---

}

fec-statistics {

ipv4-prefix {

received-fecs 5 # From each remote PE (5): 1 FEC (system)

advertised-fecs 5 # To each remote PE (5): 1 FEC (system)

}

---snip---

}

}

}

peer 192.0.2.3 label-space-id 0 {

---snip---

end-of-lib {

ipv4-prefix-fecs {

sent true

received true

}

---snip---

}

statistics {

---snip---

address-statistics {

ipv4 {

received-addresses 3 # own system IP + 2 peer interface IPs

advertised-addresses 3 # own system IP + 2 peer interface IPs

}

---snip---

}

fec-statistics {

ipv4-prefix {

received-fecs 5 # From each remote PE (5): 1 FEC (system)

advertised-fecs 5 # To each remote PE (5): 1 FEC (system)

}

---snip---

}

}

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp statistics ipv4

network-instance default {

protocols {

ldp {

statistics {

ipv4 {

total-discovery-interfaces 2

total-discovery-targets 0

total-interface-hello-adjacencies 2

total-targeted-hello-adjacencies 0

total-peers 2 # PE-2 and PE-3

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp statistics fec-statistics ipv4-prefix

network-instance default {

protocols {

ldp {

statistics {

fec-statistics {

ipv4-prefix {

received-fecs 10

advertised-fecs 10

}

Penultimate hop popping

To signal PHP with LDP, null-label implicit must be configured in the network-instance default protocols ldp context on the ELER, as follows:

# on PE-6:

enter candidate

network-instance default protocols ldp {

null-label implicit

Before the null-label implicit configuration on PE-6, PE-6 advertises label 20000 for FEC prefix 192.0.2.6/32 to all remote nodes that belong to the MPLS network. Only PE-4 and PE-5 use (egress) label 20000 for FEC prefix 192.0.2.6/32 toward PE-6.

# On PE-6:

--{ running }--[ ]--

A:admin@PE-6# info from state with-context / network-instance default protocols ldp ipv4 bindings advertised-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

advertised-prefix-fec {

---snip---

prefix-fec 192.0.2.6/32 lsr-id 192.0.2.4 label-space-id 0 {

egress-lsr-fec true

label 20000

label-type pop

}

prefix-fec 192.0.2.6/32 lsr-id 192.0.2.5 label-space-id 0 {

egress-lsr-fec true

label 20000

label-type pop

}

# On PE-4: # similar on PE-5 with other next-hop 1

--{ running }--[ ]--

A:admin@PE-4# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

received-prefix-fec {

---snip---

prefix-fec 192.0.2.6/32 lsr-id 192.0.2.6 label-space-id 0 {

label 20000

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding true

next-hop 1 {

next-hop 192.168.46.2

next-hop-type primary

interface ethernet-1/6.1

}

}

# On PE-4: # similar on PE-5 for other next-hop

--{ running }--[ ]--

A:admin@PE-4# show / network-instance default route-table next-hop

---------------------------------------------------------------------------------------------------------------------------------------------------------

Next-hop route table of network instance default

---------------------------------------------------------------------------------------------------------------------------------------------------------

---snip---

Index : 28330675

Next-hop : 192.168.46.2

Type : mpls

MPLS label stack: [20000]

# On PE-4: # similar on PE-5 with other next-hop

--{ running }--[ ]--

A:admin@PE-4# info from state with-context / network-instance default route-table next-hop *

network-instance default {

route-table {

---snip---

next-hop 28330675 {

resource-allocation-failed false

type mpls

ip-address 192.168.46.2

subinterface ethernet-1/6.1

mpls-encapsulation {

entropy-label-transmit false

pushed-mpls-label-stack [

20000

]

}

}

# On PE-4: # similar on PE-5 with other Next-hop IP and other Next-hop Subinterface

--{ running }--[ ]--

A:admin@PE-4# show / network-instance default route-table mpls

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

| Label | Operation | Type | Next Net-Inst | Next-hop IP (Type) | Next-hop | Next-hop MPLS |

| | | | | | Subinterface | labels |

+=========+===========+=============+=================+========================+======================+==================+

| 20000 | POP | ldp | default | | | |

| 20001 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20000 |

| 20002 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20001 |

| 20003 | SWAP | ldp | N/A | 192.168.34.1 (mpls) | ethernet-1/32.1 | 20000 |

| 20004 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20004 |

| 20005 | SWAP | ldp | N/A | 192.168.46.2 (mpls) | ethernet-1/6.1 | 20000 |

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

After null-label implicit is configured on PE-6, all related labels are withdrawn and immediately re-advertised with label value IMPLICIT_NULL. On PE-4 and PE-5, the new label shows up as a swap from the ingress label to the egress label IMPLICIT_NULL, although the IMPLICIT_NULL label is never pushed onto the frame.
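The effect of the implicit null label on the penultimate hop can be sketched in Python. This is a simplified model, not the SR Linux forwarding code: the `forward` function is a hypothetical name, and the sketch only handles the top label of the stack. The value 3 for implicit null is the reserved label value defined in RFC 3032.

```python
IMPLICIT_NULL = 3  # reserved label value (RFC 3032); signaled, never sent on the wire


def forward(label_stack, out_label):
    """Apply the LSR operation to the top label and return the new stack."""
    rest = label_stack[1:]          # remove the incoming top label
    if out_label == IMPLICIT_NULL:
        return rest                 # penultimate hop popping: push nothing
    return [out_label] + rest       # regular swap


# Before the change: PE-4 swaps ingress label 20005 to 20000 toward PE-6.
print(forward([20005], 20000))          # [20000]

# After the change: PE-6 advertises implicit null, so PE-4 pops the label.
print(forward([20005], IMPLICIT_NULL))  # []
```

The route-table output still lists the operation as SWAP with IMPLICIT_NULL as the next-hop label; in this model that corresponds to the branch that returns the stack without a new top label.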

# On PE-6:

--{ running }--[ ]--

A:admin@PE-6# info from state with-context / network-instance default protocols ldp ipv4 bindings advertised-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

advertised-prefix-fec {

---snip---

prefix-fec 192.0.2.6/32 lsr-id 192.0.2.4 label-space-id 0 {

egress-lsr-fec true

label IMPLICIT_NULL

label-type pop

}

prefix-fec 192.0.2.6/32 lsr-id 192.0.2.5 label-space-id 0 {

egress-lsr-fec true

label IMPLICIT_NULL

label-type pop

}

# On PE-4: # similar on PE-5 with other next-hop 1

--{ running }--[ ]--

A:admin@PE-4# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

received-prefix-fec {

---snip---

prefix-fec 192.0.2.6/32 lsr-id 192.0.2.6 label-space-id 0 {

label IMPLICIT_NULL

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding true

next-hop 1 {

next-hop 192.168.46.2

next-hop-type primary

interface ethernet-1/6.1

}

}

# On PE-4: # similar on PE-5 for other next-hop

--{ running }--[ ]--

A:admin@PE-4# show / network-instance default route-table next-hop

---------------------------------------------------------------------------------------------------------------------------------------------------------

Next-hop route table of network instance default

---------------------------------------------------------------------------------------------------------------------------------------------------------

---snip---

Index : 28330676

Next-hop : 192.168.46.2

Type : mpls

MPLS label stack: ['IMPLICIT_NULL']

# On PE-4: # similar on PE-5 for other next-hop

--{ running }--[ ]--

A:admin@PE-4# info from state with-context / network-instance default route-table next-hop *

network-instance default {

route-table {

---snip---

next-hop 28330676 {

resource-allocation-failed false

type mpls

ip-address 192.168.46.2

subinterface ethernet-1/6.1

mpls-encapsulation {

entropy-label-transmit false

pushed-mpls-label-stack [

IMPLICIT_NULL

]

}

}

# On PE-4: # similar on PE-5 with other Next-hop IP and other Next-hop Subinterface

--{ running }--[ ]--

A:admin@PE-4# show / network-instance default route-table mpls

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

| Label | Operation | Type | Next Net-Inst | Next-hop IP (Type) | Next-hop | Next-hop MPLS |

| | | | | | Subinterface | labels |

+=========+===========+=============+=================+========================+======================+==================+

| 20000 | POP | ldp | default | | | |

| 20001 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20000 |

| 20002 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20001 |

| 20003 | SWAP | ldp | N/A | 192.168.34.1 (mpls) | ethernet-1/32.1 | 20000 |

| 20004 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20004 |

| 20005 | SWAP | ldp | N/A | 192.168.46.2 (mpls) | ethernet-1/6.1 | IMPLICIT_NULL |

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

Import and export policies

In each node, the default label handling behavior is to originate label bindings for the system address and to propagate all received FECs. If this is not the desired behavior, import or export policies can be applied. An LDP import policy impacts inbound filtering; an LDP export policy impacts outbound filtering. An export policy may be configured to control the set of LDP label bindings advertised by the LER (sending to LDP peers). As such, export policies are used to include additional FECs rather than filtering FECs from those advertised. An import policy can be used to control for which FECs a router generates labels (accepting from LDP peers). This functionality is not unique to LDP; it can be used for IS-IS and OSPF as well as others.

A policy can be applied globally to the LDP instance or per LDP peer (LDP peer FEC prefix filtering), for both import and export. LDP peer FEC prefix filtering uses a policy context similar to that of the global LDP policies and works in addition to these global policies.

# On any LDP configured node:

enter candidate

network-instance default protocols ldp {

tree flat | grep prefix-

network-instance protocols ldp export-prefix-policy

network-instance protocols ldp import-prefix-policy

network-instance protocols ldp peers peer export-prefix-policy

network-instance protocols ldp peers peer import-prefix-policy

Export policy

By default, a node does not generate labels for its directly connected (local) interfaces. To change this behavior, an export policy is created and applied to the LDP instance or to the peers peer context of the LDP instance (applicable per peer). There is no configuration difference in defining an import and export policy.

A policy contains a list of statements. A statement typically contains matching criteria (however, it is not required in cases where everything matches) and a corresponding action. Statements without an action are considered incomplete and are rendered inactive. When processing the policy, the router executes the specified action on the first matching statement; it does not process any further matches. For this reason, statements must be sequenced correctly from most to least specific.
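The first-match evaluation described above can be sketched in Python. This is a minimal model under stated assumptions, not the SR Linux policy engine: the `evaluate` function and the dictionary layout are hypothetical, prefixes are matched exactly only, and the sketch does not model how LDP treats system addresses. The prefix 192.168.24.0/30 and the policy and statement names are borrowed from the export policy example in this chapter.

```python
import ipaddress


def evaluate(policy, prefix):
    """Return the policy result for `prefix` using first-match semantics."""
    net = ipaddress.ip_network(prefix)
    for stmt in policy["statements"]:
        if "action" not in stmt:
            continue  # statement without an action: incomplete, so inactive
        match = stmt.get("match")
        if match is None or net in match:
            return stmt["action"]  # first match wins; later statements are skipped
    return policy["default_action"]


export_fec_test = {
    "default_action": "reject",
    "statements": [
        {
            "name": "export-statement-test",
            # exact-match prefix set, as with mask-length-range exact
            "match": {ipaddress.ip_network("192.168.24.0/30")},
            "action": "accept",
        },
    ],
}

print(evaluate(export_fec_test, "192.168.24.0/30"))  # accept
print(evaluate(export_fec_test, "192.168.46.0/30"))  # reject
```

Because evaluation stops at the first matching statement, placing a broad statement before a specific one hides the specific one, which is why statements are ordered from most to least specific.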

As an example, the local interface 192.168.24.0/30 is exported. The configuration of the LDP export policy to include local interface 192.168.24.0/30 is as follows.

# on all nodes:

enter candidate

routing-policy {

prefix-set export-prefix-set-test {

prefix 192.168.24.0/30 mask-length-range exact { }

}

policy export-fec-test {

default-action {

policy-result reject # local prefixes are not advertised, system addresses are still advertised

}

statement export-statement-test {

match {

prefix {

prefix-set export-prefix-set-test

}

}

action {

policy-result accept

}

}

}

The LDP export policy is applied to the LDP instance on the nodes, with the export-prefix-policy command in the network-instance default protocols ldp context.

# on all nodes:

enter candidate

network-instance default {

protocols {

ldp {

export-prefix-policy export-fec-test

# On PE-2: # similar for PE-4 with other LDP peers

--{ running }--[ ]--

A:admin@PE-2# info from state with-context / network-instance default protocols ldp ipv4 bindings advertised-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

advertised-prefix-fec {

---snip--- # FEC prefixes for system addresses

prefix-fec 192.168.24.0/30 lsr-id 192.0.2.1 label-space-id 0 {

egress-lsr-fec true

label 20006

label-type pop

}

prefix-fec 192.168.24.0/30 lsr-id 192.0.2.4 label-space-id 0 {

egress-lsr-fec true

label 20006

label-type pop

}

prefix-fec 192.168.24.0/30 lsr-id 192.0.2.5 label-space-id 0 {

egress-lsr-fec true

label 20006

label-type pop

}

When the export policy is applied, the active LDP binding table contains additional entries: the local interfaces of PE-2 or PE-4. In the following output, the entries for the system prefixes are snipped; only the additional prefix is shown:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

received-prefix-fec {

---snip--- # FEC prefixes for system addresses

prefix-fec 192.168.24.0/30 lsr-id 192.0.2.2 label-space-id 0 {

label 20006

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

not-used-reason rejected-on-rx

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec prefix-fec * lsr-id * label-space-id 0 | as table | filter fields *

+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+

| Network- | Fec | Lsr-id | Label-space- | Label | Entropy- | Ingress-lsr- | Used-in- | Not-used- |

| instance | | | id | | label- | fec | forwarding | reason |

| | | | | | transmit | | | |

+===============+===============+===============+===============+===============+===============+===============+===============+===============+

| default | 192.0.2.2/32 | 192.0.2.2 | 0 | 20000 | false | true | true | |

| default | 192.0.2.2/32 | 192.0.2.3 | 0 | 20002 | false | true | false | |

| default | 192.0.2.3/32 | 192.0.2.2 | 0 | 20002 | false | true | false | |

| default | 192.0.2.3/32 | 192.0.2.3 | 0 | 20000 | false | true | true | |

| default | 192.0.2.4/32 | 192.0.2.2 | 0 | 20003 | false | true | true | |

| default | 192.0.2.4/32 | 192.0.2.3 | 0 | 20003 | false | true | false | |

| default | 192.0.2.5/32 | 192.0.2.2 | 0 | 20004 | false | true | true | |

| default | 192.0.2.5/32 | 192.0.2.3 | 0 | 20004 | false | true | false | |

| default | 192.0.2.6/32 | 192.0.2.2 | 0 | 20005 | false | true | true | |

| default | 192.0.2.6/32 | 192.0.2.3 | 0 | 20005 | false | true | false | |

| default | 192.168.24.0/ | 192.0.2.2 | 0 | 20006 | false | true | false | rejected-on- |

| | 30 | | | | | | | rx |

+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+

PE-2 and PE-4 add an MPLS label for the local prefix.

# On PE-4: # similar on PE-2

--{ running }--[ ]--

A:admin@PE-4# show / network-instance default route-table mpls

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

| Label | Operation | Type | Next Net-Inst | Next-hop IP (Type) | Next-hop | Next-hop MPLS |

| | | | | | Subinterface | labels |

+=========+===========+=============+=================+========================+======================+==================+

| 20000 | POP | ldp | default | | | |

| 20001 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20000 |

| 20002 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20001 |

| 20003 | SWAP | ldp | N/A | 192.168.34.1 (mpls) | ethernet-1/32.1 | 20000 |

| 20004 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20004 |

| 20005 | SWAP | ldp | N/A | 192.168.46.2 (mpls) | ethernet-1/6.1 | IMPLICIT_NULL |

| 20006 | POP | ldp | default | | | |

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

Import policy

By default, a node propagates all received FECs. To change this behavior, an import policy is created and applied to the LDP instance or to the peers peer context of the LDP instance (applicable per peer). There is no configuration difference in defining an import and export policy.

As an example, the system interface 192.0.2.3/32 is not propagated. The configuration of the LDP import policy to exclude system or local interfaces is as follows:

# On all nodes:

enter candidate

routing-policy {

prefix-set import-prefix-set-test {

prefix 192.0.2.3/32 mask-length-range exact { }

}

policy import-fec-test {

default-action {

policy-result accept # received local prefixes and system addresses are propagated

}

statement import-statement-test {

match {

prefix {

prefix-set import-prefix-set-test

}

}

action {

policy-result reject

}

}

}

The LDP import policy is applied to the LDP instance on the nodes, with the import-prefix-policy command in the network-instance default protocols ldp context.

# on all nodes:

enter candidate

network-instance default {

protocols {

ldp {

import-prefix-policy import-fec-test

Only PE-3 originates a label binding for this system interface, so only PE-3 advertises it. A node advertises only to its directly connected LDP peers (and to the LDP peers with which it has a targeted LDP session). PE-3 therefore advertises system interface 192.0.2.3/32 only to PE-1 and PE-4. Because no node propagates the received system interface 192.0.2.3/32 to its own LDP peers, only PE-1 and PE-4 receive it.

# On PE-3:

--{ running }--[ ]--

A:admin@PE-3# info from state with-context / network-instance default protocols ldp ipv4 bindings advertised-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

advertised-prefix-fec {

---snip---

prefix-fec 192.0.2.3/32 lsr-id 192.0.2.1 label-space-id 0 {

egress-lsr-fec true

label 20000

label-type pop

}

prefix-fec 192.0.2.3/32 lsr-id 192.0.2.4 label-space-id 0 {

egress-lsr-fec true

label 20000

label-type pop

}

When the import policy is applied, the active LDP binding table contains fewer entries; the system interface of PE-3 is no longer included. Before the import policy is applied, the LDP binding table for PE-1 contains the following entries:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

received-prefix-fec {

---snip--- # FEC prefixes for other system addresses

prefix-fec 192.0.2.3/32 lsr-id 192.0.2.2 label-space-id 0 {

label 20002

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

}

prefix-fec 192.0.2.3/32 lsr-id 192.0.2.3 label-space-id 0 {

label 20000

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding true

next-hop 1 {

next-hop 192.168.13.2

next-hop-type primary

interface ethernet-1/32.1

}

}

prefix-fec 192.168.24.0/30 lsr-id 192.0.2.2 label-space-id 0 {

label 20006

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

not-used-reason rejected-on-rx

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec prefix-fec * lsr-id * label-space-id 0 | as table | filter fields *

+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+

| Network- | Fec | Lsr-id | Label-space- | Label | Entropy- | Ingress-lsr- | Used-in- | Not-used- |

| instance | | | id | | label- | fec | forwarding | reason |

| | | | | | transmit | | | |

+===============+===============+===============+===============+===============+===============+===============+===============+===============+

| default | 192.0.2.2/32 | 192.0.2.2 | 0 | 20000 | false | true | true | |

| default | 192.0.2.2/32 | 192.0.2.3 | 0 | 20002 | false | true | false | |

| default | 192.0.2.3/32 | 192.0.2.2 | 0 | 20002 | false | true | false | |

| default | 192.0.2.3/32 | 192.0.2.3 | 0 | 20000 | false | true | true | |

| default | 192.0.2.4/32 | 192.0.2.2 | 0 | 20003 | false | true | true | |

| default | 192.0.2.4/32 | 192.0.2.3 | 0 | 20003 | false | true | false | |

| default | 192.0.2.5/32 | 192.0.2.2 | 0 | 20004 | false | true | true | |

| default | 192.0.2.5/32 | 192.0.2.3 | 0 | 20004 | false | true | false | |

| default | 192.0.2.6/32 | 192.0.2.2 | 0 | 20005 | false | true | true | |

| default | 192.0.2.6/32 | 192.0.2.3 | 0 | 20005 | false | true | false | |

| default | 192.168.24.0/ | 192.0.2.2 | 0 | 20006 | false | true | false | rejected-on- |

| | 30 | | | | | | | rx |

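+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+

After the import policy is applied, the LDP binding table for PE-1 contains the following entries:

# On PE-1: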

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec

network-instance default {

protocols {

ldp {

ipv4 {

bindings {

received-prefix-fec {

---snip--- # FEC prefixes for other system addresses

prefix-fec 192.0.2.3/32 lsr-id 192.0.2.3 label-space-id 0 {

label 20000

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

not-used-reason rejected-on-rx

}

prefix-fec 192.168.24.0/30 lsr-id 192.0.2.2 label-space-id 0 {

label 20006

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

next-hop 1 {

next-hop 192.168.12.2

next-hop-type primary

interface ethernet-1/31.1

}

}

prefix-fec 192.168.24.0/30 lsr-id 192.0.2.3 label-space-id 0 {

label 20006

entropy-label-transmit false

ingress-lsr-fec true

used-in-forwarding false

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp ipv4 bindings received-prefix-fec prefix-fec * lsr-id * label-space-id 0 | as table | filter fields *

+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+

| Network- | Fec | Lsr-id | Label-space- | Label | Entropy- | Ingress-lsr- | Used-in- | Not-used- |

| instance | | | id | | label- | fec | forwarding | reason |

| | | | | | transmit | | | |

+===============+===============+===============+===============+===============+===============+===============+===============+===============+

| default | 192.0.2.2/32 | 192.0.2.2 | 0 | 20000 | false | true | true | |

| default | 192.0.2.2/32 | 192.0.2.3 | 0 | 20002 | false | true | false | |

| default | 192.0.2.3/32 | 192.0.2.3 | 0 | 20000 | false | true | false | rejected-on- |

| | | | | | | | | rx |

| default | 192.0.2.4/32 | 192.0.2.2 | 0 | 20003 | false | true | true | |

| default | 192.0.2.4/32 | 192.0.2.3 | 0 | 20003 | false | true | false | |

| default | 192.0.2.5/32 | 192.0.2.2 | 0 | 20004 | false | true | true | |

| default | 192.0.2.5/32 | 192.0.2.3 | 0 | 20004 | false | true | false | |

| default | 192.0.2.6/32 | 192.0.2.2 | 0 | 20005 | false | true | true | |

| default | 192.0.2.6/32 | 192.0.2.3 | 0 | 20005 | false | true | false | |

| default | 192.168.24.0/ | 192.0.2.2 | 0 | 20006 | false | true | false | |

| | 30 | | | | | | | |

| default | 192.168.24.0/ | 192.0.2.3 | 0 | 20006 | false | true | false | |

| | 30 | | | | | | | |

+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+---------------+

PE-4 and PE-1 lose the MPLS label (20003) for the 192.0.2.3/32 system address.

# On PE-4: # similar on PE-1

--{ running }--[ ]--

A:admin@PE-4# show / network-instance default route-table mpls

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

| Label | Operation | Type | Next Net-Inst | Next-hop IP (Type) | Next-hop | Next-hop MPLS |

| | | | | | Subinterface | labels |

+=========+===========+=============+=================+========================+======================+==================+

| 20000 | POP | ldp | default | | | |

| 20001 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20000 |

| 20002 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20001 |

| 20004 | SWAP | ldp | N/A | 192.168.24.1 (mpls) | ethernet-1/31.1 | 20004 |

| 20005 | SWAP | ldp | N/A | 192.168.46.2 (mpls) | ethernet-1/6.1 | IMPLICIT_NULL |

| 20006 | POP | ldp | default | | | |

+---------+-----------+-------------+-----------------+------------------------+----------------------+------------------+

OAM

The following operations, administration, and maintenance operations can be launched on an LDP LSP:

- oam lsp-ping

- oam lsp-trace

As an example, an LSP ping is sent from PE-1 to PE-6:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# tools oam lsp-ping ldp fec 192.0.2.6/32

/oam/lsp-ping/ldp/fec[prefix=192.0.2.6/32]:

Initiated LSP Ping to prefix 192.0.2.6/32 with session id 49152

The result is not directly visible from the response, but can be verified as follows:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / oam lsp-ping ldp fec 192.0.2.6/32

oam {

lsp-ping {

ldp {

fec 192.0.2.6/32 {

session-id 49152 {

test-active false

statistics {

round-trip-time {

minimum 4520

maximum 4520

average 4520

standard-deviation 0

}

}

path-destination {

ip-address 127.0.0.1

}

sequence 1 {

probe-size 48

request-sent true

out-interface ethernet-1/31.1

reply {

received true

reply-sender 192.0.2.6

udp-data-length 40

mpls-ttl 255

round-trip-time 4520

return-code replying-router-is-egress-for-fec-at-stack-depth-n

return-subcode 1

}

}

The ELER (PE-6) returns a reply. The reply includes the sender of the reply, the MPLS TTL (255), the round trip time (in microseconds), and a return-code.

LSP ping results remain present in the state. They can be cleared with:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# tools oam lsp-ping ldp clear

/oam/lsp-ping:

LDP Ping data has been cleared

As an example, an LSP trace is sent from PE-1 to PE-6:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# tools oam lsp-trace ldp fec 192.0.2.6/32

/oam/lsp-trace/ldp/fec[prefix=192.0.2.6/32]:

Initiated LSP Trace to prefix 192.0.2.6/32 with session id 49153

The result can be verified as follows:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / oam lsp-trace ldp fec 192.0.2.6/32

oam {

lsp-trace {

ldp {

fec 192.0.2.6/32 {

session-id 49153 {

test-active false

path-destination {

ip-address 127.0.0.1

}

hop 1 {

probe 1 {

probe-size 76

probes-sent 1

reply {

received true

reply-sender 192.0.2.2

udp-data-length 60

mpls-ttl 1

round-trip-time 4348

return-code label-switched-at-stack-depth-n

return-subcode 1

}

downstream-detailed-mapping 1 {

mtu 1500

address-type ipv4-numbered

downstream-router-address 192.168.24.2

downstream-interface-address 192.168.24.2

mpls-label 1 {

label 20005

protocol ldp

}

}

}

}

hop 2 {

probe 1 {

probe-size 76

probes-sent 1

reply {

received true

reply-sender 192.0.2.4

udp-data-length 60

mpls-ttl 2

round-trip-time 4829

return-code label-switched-at-stack-depth-n

return-subcode 1

}

downstream-detailed-mapping 1 {

mtu 1500

address-type ipv4-numbered

downstream-router-address 192.168.46.2

downstream-interface-address 192.168.46.2

mpls-label 1 {

label 20000

protocol ldp

}

}

}

}

hop 3 {

probe 1 {

probe-size 76

probes-sent 1

reply {

received true

reply-sender 192.0.2.6

udp-data-length 32

mpls-ttl 3

round-trip-time 5102

return-code replying-router-is-egress-for-fec-at-stack-depth-n

return-subcode 1

}

}

}

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / oam lsp-trace ldp fec * session-id * hop * probe * | as table

+--------------+--------------+--------------+--------------+--------------+--------------+--------------+--------------+--------------+--------------+

| Fec | Session-id | Hop | Probe-index | Probes-sent | Last-probe- | Reply reply- | Reply round- | Reply | Reply |

| | | | | | send- | sender | trip-time | return-code | return- |

| | | | | | failure- | | | | subcode |

| | | | | | reason | | | | |

+==============+==============+==============+==============+==============+==============+==============+==============+==============+==============+

| 192.0.2.6/32 | 49153 | 1 | 1 | 1 | | 192.0.2.2 | 4348 | label- | 1 |

| | | | | | | | | switched-at- | |

| | | | | | | | | stack- | |

| | | | | | | | | depth-n | |

| 192.0.2.6/32 | 49153 | 2 | 1 | 1 | | 192.0.2.4 | 4829 | label- | 1 |

| | | | | | | | | switched-at- | |

| | | | | | | | | stack- | |

| | | | | | | | | depth-n | |

| 192.0.2.6/32 | 49153 | 3 | 1 | 1 | | 192.0.2.6 | 5102 | replying- | 1 |

| | | | | | | | | router-is- | |

| | | | | | | | | egress-for- | |

| | | | | | | | | fec-at- | |

| | | | | | | | | stack- | |

| | | | | | | | | depth-n | |

+--------------+--------------+--------------+--------------+--------------+--------------+--------------+--------------+--------------+--------------+

The LSP trace has three hops: PE-6 is reached from PE-1 via PE-2 and PE-4.

The ELER (PE-6) and the LSRs (PE-2 and PE-4) each return a reply. The reply includes the sender of the reply, the MPLS TTL (a sequence number that increases by 1 on each hop along the LSP trace), the round trip time (in microseconds), and a return-code. The return-code of the ELER differs from that of the LSRs.

The LSRs return additional downstream (toward the destination) detailed mapping information. This information includes the maximum transmission unit (MTU), the type of IP address, the downstream router address and router interface address, and the label and protocol that are used to reach the next hop.
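The interpretation of the LSP trace replies can be sketched in Python. This is an illustrative helper only: the `summarize` function and the dictionary keys are hypothetical, and the hop data is copied by hand from the trace output in this chapter rather than read from a node.

```python
EGRESS = "replying-router-is-egress-for-fec-at-stack-depth-n"
SWITCHED = "label-switched-at-stack-depth-n"

# Replies per hop, taken from the LSP trace output for FEC 192.0.2.6/32.
hops = [
    {"hop": 1, "sender": "192.0.2.2", "rtt_us": 4348, "code": SWITCHED},
    {"hop": 2, "sender": "192.0.2.4", "rtt_us": 4829, "code": SWITCHED},
    {"hop": 3, "sender": "192.0.2.6", "rtt_us": 5102, "code": EGRESS},
]


def summarize(hops):
    """Return the list of reply senders and whether the egress LER was reached."""
    path = []
    for h in sorted(hops, key=lambda h: h["hop"]):
        path.append(h["sender"])
        if h["code"] == EGRESS:
            return path, True   # the egress LER replied: the LSP is complete
    return path, False          # no egress reply: the trace stopped early


path, reached = summarize(hops)
print(" -> ".join(path), "| egress reached:", reached)
for h in hops:
    # round-trip-time is reported in microseconds; convert to milliseconds
    print(f'hop {h["hop"]}: {h["rtt_us"] / 1000:.3f} ms')
```

A trace whose last reply carries the label-switched return code instead of the egress return code would indicate that the probes did not reach the ELER.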

LSP trace results remain present in the state. They can be cleared with:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# tools oam lsp-trace ldp clear

/oam/lsp-trace:

LDP Trace data has been cleared

LDP statistics

LDP related statistics on each node can be verified as follows:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# show / network-instance default protocols ldp statistics

=========================================================================================================================================================

Net-Inst default LDP statistics

---------------------------------------------------------------------------------------------------------------------------------------------------------

IPv4 Total Discovery Interfaces : 2 # PE-2 and PE-3

IPv6 Total Discovery Interfaces : 0

IPv4 Total Discovery Targets : 0

IPv6 Total Discovery Targets : 0

IPv4 Total Interface Hello Adjacencies : 2

IPv6 Total Interface Hello Adjacencies : 0

IPv4 Total Targeted Hello Adjacencies : 0

IPv6 Total Targeted Hello Adjacencies : 0

IPv4 Total Peers : 2

IPv6 Total Peers : 0

IPv4 Received Prefix FECs : 11 # From PE-2: 4 FECs (system) + 1 FEC (non-system); From PE-3: 5 FECs (system) + 1 FEC (non-system)

IPv4 Advertised Prefix FECs : 11 # To PE-2: 4 FECs (system) + 1 FEC (non-system); To PE-3: 5 FECs (system) + 1 FEC (non-system)

IPv6 Received Prefix FECs : 0

IPv6 Advertised Prefix FECs : 0

Bad Ldp Identifier : 0

Bad Protocol Version : 0

Bad Pdu Length : 0

Bad Message Length : 0

Unknown Tlv : 0

Bad Tlv Length : 0

Malformed Tlv Value : 0

Session Rejected No Hello : 0

Session Rejected Parameters Adv Mode : 0

Session Rejected Parameters Max Pdu Length: 0

Session Rejected Parameters Label Range : 0

---------------------------------------------------------------------------------------------------------------------------------------------------------

=========================================================================================================================================================

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp statistics

network-instance default {

protocols {

ldp {

statistics {

ipv4 {

total-discovery-interfaces 2 # PE-2 and PE-3

total-discovery-targets 0

total-interface-hello-adjacencies 2

total-targeted-hello-adjacencies 0

total-peers 2

}

ipv6 {

total-discovery-interfaces 0

total-discovery-targets 0

total-interface-hello-adjacencies 0

total-targeted-hello-adjacencies 0

total-peers 0

}

fec-statistics {

ipv4-prefix {

received-fecs 11 # From PE-2: 4 FECs (system) + 1 FEC (non-system); From PE-3: 5 FECs (system) + 1 FEC (non-system)

advertised-fecs 11 # To PE-2: 4 FECs (system) + 1 FEC (non-system); To PE-3: 5 FECs (system) + 1 FEC (non-system)

}

ipv6-prefix {

received-fecs 0

advertised-fecs 0

}

}

protocol-errors {

bad-ldp-identifier 0

bad-protocol-version 0

bad-pdu-length 0

bad-message-length 0

unknown-tlv 0

bad-tlv-length 0

malformed-tlv-value 0

session-rejected-no-hello 0

session-rejected-parameters-adv-mode 0

session-rejected-parameters-max-pdu-length 0

session-rejected-parameters-label-range 0

}

The output can be limited to a subset of the statistics with the following commands:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp statistics ipv4

A:admin@PE-1# info from state with-context / network-instance default protocols ldp statistics ipv6

A:admin@PE-1# info from state with-context / network-instance default protocols ldp statistics fec-statistics

A:admin@PE-1# info from state with-context / network-instance default protocols ldp statistics fec-statistics ipv4-prefix

A:admin@PE-1# info from state with-context / network-instance default protocols ldp statistics fec-statistics ipv6-prefix

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp peers

network-instance default {

protocols {

ldp {

peers {

session-keepalive-holdtime 180

session-keepalive-interval 60

peer 192.0.2.2 label-space-id 0 {

fec-limit 0

adjacency-type link

session-state operational

last-oper-state-change "2025-08-25T11:59:07.856Z (20 minutes ago)"

---snip---

session-holdtime {

peer-proposed 180

negotiated 180

remaining 142

}

statistics {

received-messages {

total-messages 35

address 1

address-withdraw 0

initialization 1

keepalive 19

label-abort-request 0

label-mapping 7 # after applying export policy

label-release 2 # after applying export and import policy

label-request 0

label-withdraw 2 # after applying export and import policy

notification 3

capability 0

}

sent-messages {

total-messages 35

address 1

address-withdraw 0

initialization 1

keepalive 19

label-abort-request 0

label-mapping 7 # after applying export and import policy

label-release 2 # after applying export and import policy

label-request 0

label-withdraw 2 # after applying export and import policy

notification 3

capability 0

}

address-statistics {

ipv4 {

received-addresses 4

advertised-addresses 3

}

ipv6 {

received-addresses 0

advertised-addresses 0

}

}

fec-statistics {

ipv4-prefix {

received-fecs 5

advertised-fecs 5

}

ipv6-prefix {

received-fecs 0

advertised-fecs 0

}

}

}

}

peer 192.0.2.3 label-space-id 0 {

---snip---

}

On each node, the LDP peer statistics can be limited to a single peer with the following command:

# On PE-1:

--{ running }--[ ]--

A:admin@PE-1# info from state with-context / network-instance default protocols ldp peers peer <LSR ID> label-space-id 0

Debug

Debug logging can be configured for IS-IS and LDP as follows:

# On all nodes:

enter candidate

system logging {

file isis-log-messages {

directory /var/log/srlinux/file # default

format RSYSLOG_FileFormat # default

rotate 4 # default

size 10M # default

subsystem isis {

priority {

match-above debug

match-exact [ debug ]

}

}

}

file ldp-log-messages {

directory /var/log/srlinux/file # default

format RSYSLOG_FileFormat # default

rotate 4 # default

size 10M # default

subsystem ldp {

priority {

match-above debug

match-exact [ debug ]

}

}

}

The log file can be verified as follows:

# On all nodes:

--{ running }--[ ]--

A:admin@PE-x# show / system logging file isis-log-messages

#########################################################################################################################################################

# /var/log/srlinux/file/*isis-log-messages* # presented with option: -c2

#########################################################################################################################################################

2025-08-25T13:47:29.949 sr_isis_mgr: isis|47200|W: In network-instance default, the level-1 IS-IS adjacency with system 0100.0000.0002, using interface ethernet-1/31.1, moved to state INIT.

2025-08-25T13:47:29.953 sr_isis_mgr: isis|47200|W: In network-instance default, the level-1 IS-IS adjacency with system 0100.0000.0002, using interface ethernet-1/31.1, moved to state UP.

2025-08-25T13:47:49.394 sr_isis_mgr: isis|47200|W: In network-instance default, the level-1 IS-IS adjacency with system 0100.0000.0003, using interface ethernet-1/32.1, moved to state INIT.

2025-08-25T13:47:49.399 sr_isis_mgr: isis|47200|W: In network-instance default, the level-1 IS-IS adjacency with system 0100.0000.0003, using interface ethernet-1/32.1, moved to state UP.

#########################################################################################################################################################

--{ running }--[ ]--

A:admin@PE-x# show / system logging file ldp-log-messages

#########################################################################################################################################################

# /var/log/srlinux/file/*ldp-log-messages* # presented with option: -c2

#########################################################################################################################################################

2025-08-25T13:58:41.626 sr_ldp_mgr: ldp|61030|I: In network-instance default, LDP is now up and functional on the following IPv4 interface ethernet-1/31.1.

2025-08-25T13:58:41.626 sr_ldp_mgr: ldp|61030|I: In network-instance default, LDP is now up and functional on the following IPv4 interface ethernet-1/32.1.

2025-08-25T13:58:41.626 sr_ldp_mgr: ldp|61030|I: In network-instance default, LDP-IPv4 is now up and functional.

2025-08-25T13:59:07.856 sr_ldp_mgr: ldp|61030|I: In network-instance default, an LDP session is now up and operational with peer 192.0.2.2:0.

2025-08-25T13:59:27.572 sr_ldp_mgr: ldp|61030|I: In network-instance default, an LDP session is now up and operational with peer 192.0.2.3:0.

#########################################################################################################################################################

--{ running }--[ ]--

A:admin@PE-1# bash

cd /var/log/srlinux/file

ls -lr

/var/log/srlinux/file

total 272

-rw-rw-rw-+ 1 syslog adm 266888 Aug 25 13:58 messages

-rw-rw-r--+ 1 syslog adm 839 Aug 25 13:59 ldp-log-messages

-rw-rw-r--+ 1 syslog adm 860 Aug 25 13:47 isis-log-messages

cat isis-log-messages

2025-08-25T13:47:29.949527+02:00 PE-1 sr_isis_mgr: isis|47200|47231|00000|W: In network-instance default, the level-1 IS-IS adjacency with system 0100.0000.0002, using interface ethernet-1/31.1, moved to state INIT.

2025-08-25T13:47:29.953380+02:00 PE-1 sr_isis_mgr: isis|47200|47231|00001|W: In network-instance default, the level-1 IS-IS adjacency with system 0100.0000.0002, using interface ethernet-1/31.1, moved to state UP.

2025-08-25T13:47:49.394323+02:00 PE-1 sr_isis_mgr: isis|47200|47231|00002|W: In network-instance default, the level-1 IS-IS adjacency with system 0100.0000.0003, using interface ethernet-1/32.1, moved to state INIT.

2025-08-25T13:47:49.399233+02:00 PE-1 sr_isis_mgr: isis|47200|47231|00003|W: In network-instance default, the level-1 IS-IS adjacency with system 0100.0000.0003, using interface ethernet-1/32.1, moved to state UP.
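When log files grow, it can be easier to post-process them than to read them line by line. The following Python sketch parses lines in the file format shown above and reports the last state seen for each IS-IS adjacency. It is a minimal sketch under assumptions: the function names are hypothetical, the regular expressions are inferred from the four sample lines only, and the numeric fields between the subsystem name and the severity letter are kept as an opaque string because their meaning is not stated in this chapter.

```python
import re
from typing import Dict, Iterable, Optional, Tuple

# Layout inferred from the sample lines:
# <timestamp> <host> <application>: <subsystem>|<ids...>|<severity>: <message>
LINE_RE = re.compile(
    r"^(?P<timestamp>\S+)\s+(?P<host>\S+)\s+(?P<app>[^:\s]+):\s+"
    r"(?P<subsystem>[^|]+)\|(?P<ids>[^:]*)\|(?P<severity>[A-Z]):\s+"
    r"(?P<message>.*)$"
)

# Matches the adjacency state-change messages shown in the sample output.
ADJ_RE = re.compile(
    r"adjacency with system (?P<system>[0-9A-Fa-f.]+), "
    r"using interface (?P<interface>[^,]+), "
    r"moved to state (?P<state>\w+)"
)


def parse_line(line: str) -> Optional[Dict[str, str]]:
    """Return the fields of one log line, or None if the line does not match."""
    match = LINE_RE.match(line.strip())
    return match.groupdict() if match else None


def adjacency_states(lines: Iterable[str]) -> Dict[Tuple[str, str], str]:
    """Map (system ID, interface) to the last adjacency state logged for it."""
    states: Dict[Tuple[str, str], str] = {}
    for line in lines:
        record = parse_line(line)
        if record is None or record["subsystem"] != "isis":
            continue
        found = ADJ_RE.search(record["message"])
        if found:
            states[(found["system"], found["interface"])] = found["state"]
    return states


if __name__ == "__main__":
    # Path taken from the logging configuration in this chapter.
    with open("/var/log/srlinux/file/isis-log-messages") as handle:
        for (system, interface), state in adjacency_states(handle).items():
            print(f"{system} on {interface}: {state}")
```

Lines that do not match the assumed layout are skipped rather than reported as errors, so the sketch tolerates unrelated messages in the same file.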